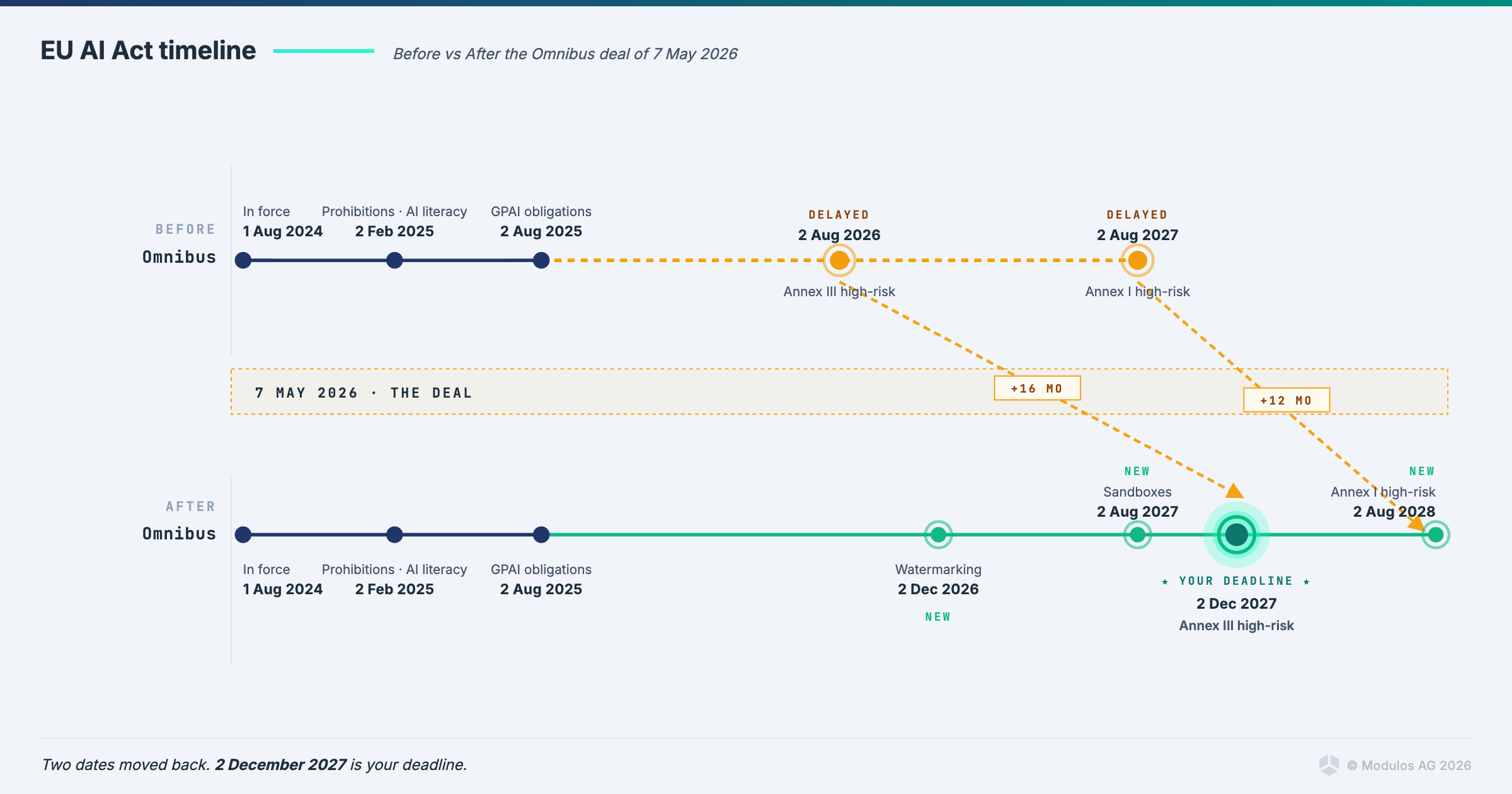

EU AI Act Delayed: The Omnibus Deal Closed on 7 May 2026

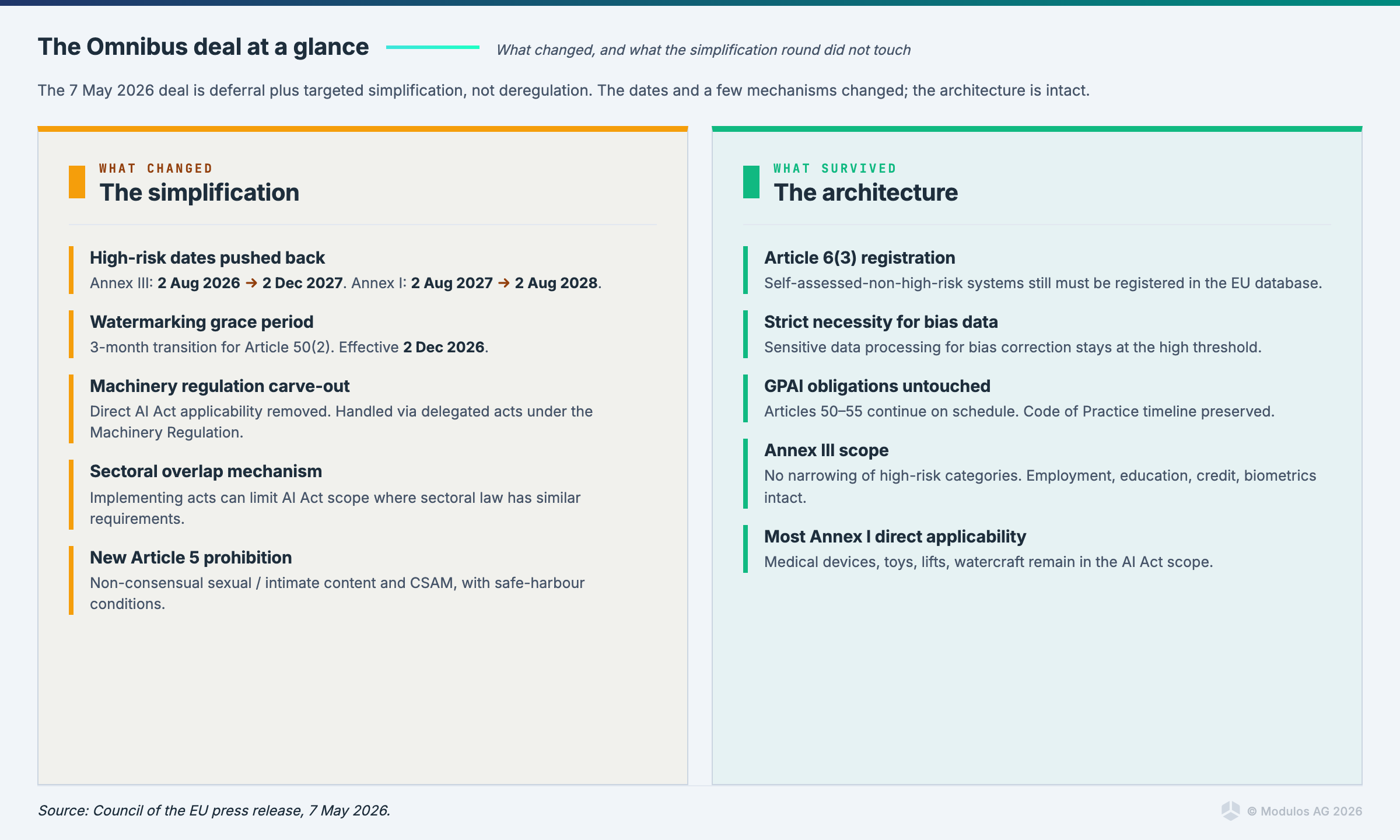

Yes, the EU AI Act has been delayed. On 7 May 2026, the Council of the EU and the European Parliament reached provisional political agreement on the Digital Omnibus on AI. High-risk obligations under Annex III now apply from 2 December 2027, and those for AI embedded in regulated products under Annex I apply from 2 August 2028. This is the deal, this is the new compliance horizon, and it is almost certainly the only delay you will get.

If you paused your AI Act preparation on the assumption that Brussels would keep moving the goalposts, the goalposts have stopped moving. The clock is running again, and it is running against the dates above.

What the Omnibus deal actually decided

The third political trilogue under the Cypriot Presidency closed roughly a week earlier than expected after the collapse of the 28 April session. The substance of the agreement was largely visible going in, with one open file (the conformity assessment architecture for Annex I products) settled through a face-saving compromise. The headline provisions of the provisional agreement are:

- Fixed application dates for high-risk AI systems. Obligations for stand-alone Annex III systems (employment, education, credit, biometrics, critical infrastructure, law enforcement, justice, migration) apply from 2 December 2027. Obligations for AI embedded in regulated Annex I products (medical devices, machinery, toys, lifts, watercraft and others) apply from 2 August 2028.

- Watermarking and synthetic content marking. The grace period for Article 50(2) transparency obligations was compressed from six months to three. The new effective date is 2 December 2026, seven months from now.

- A new prohibited practice. Article 5 now prohibits AI systems that generate non-consensual sexual or intimate content, or child sexual abuse material, subject to a safe-harbour for systems with effective preventive safeguards.

- Article 6(3) registration retained. The Commission had proposed deleting the obligation to register self-assessed-non-high-risk Annex III-adjacent systems in the EU database. Both Council and Parliament rejected that. The registration obligation survives in a streamlined form under Annex VIII Section B.

- Strict necessity for bias correction. The standard for processing special categories of personal data for the purpose of bias detection and correction stays at "strictly necessary," not the looser "necessary" the Commission had proposed.

- Regulatory sandboxes. Member states have until 2 August 2027 to establish national regulatory sandboxes, a postponement from the original deadline.

- Annex I sectoral compromise. The machinery regulation is carved out from direct AI Act applicability, with health and safety requirements for high-risk AI added through delegated acts under the machinery regulation itself. For other Annex I sectors, the Commission can limit AI Act application through implementing acts where sectoral law already covers similar ground. The Commission is also obligated to publish guidance to minimise overlap.

- GPAI obligations untouched. Articles 51 to 55 continue on their current schedule.

The provisional agreement still requires endorsement by the Council and the European Parliament, then legal-linguistic revision, then publication in the Official Journal. Adoption is expected in the coming weeks. The political agreement is, in practice, what you should plan against.

Your new EU AI Act deadline calendar

Five milestones now matter to compliance teams operating under the EU AI Act, in chronological order:

| Date | Obligation |
|---|---|
| Already in force | Article 5 prohibitions, Article 4 AI literacy, Articles 51-55 GPAI |
| 2 December 2026 | Article 50(2) watermarking and synthetic content disclosure for systems on the market before 2 August 2026 |
| 2 August 2027 | National regulatory sandboxes operational |
| 2 December 2027 | High-risk obligations apply for Annex III stand-alone systems |
| 2 August 2028 | High-risk obligations apply for AI embedded in Annex I regulated products |
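
For teams that track these dates in tooling rather than on a slide, the calendar can be kept as data. The following is a minimal sketch, assuming the dates in the provisional agreement hold through formal adoption; the dictionary keys and the `days_remaining` helper are illustrative names, not part of any official schema.

```python
from datetime import date

# Dates as stated in the provisional agreement described in this post.
# They remain subject to formal adoption and publication.
DEADLINES = {
    "Article 50(2) watermarking": date(2026, 12, 2),
    "National regulatory sandboxes": date(2027, 8, 2),
    "Annex III high-risk obligations": date(2027, 12, 2),
    "Annex I high-risk obligations": date(2028, 8, 2),
}


def days_remaining(today: date) -> dict[str, int]:
    """Return the number of days left until each deadline, nearest first."""
    remaining = {name: (due - today).days for name, due in DEADLINES.items()}
    return dict(sorted(remaining.items(), key=lambda item: item[1]))


if __name__ == "__main__":
    # Counted from the date of the political agreement.
    for name, days in days_remaining(date(2026, 5, 7)).items():
        print(f"{name}: {days} days")
```

Passing `date.today()` instead of a fixed date turns this into a running countdown for a dashboard or a weekly status report.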

The watermarking deadline is the nearest live obligation. Anyone shipping a generative AI feature into the EU market needs UI labelling, machine-readable metadata embedding, and detection capability operational by 2 December 2026. That is seven months of engineering work, not a paperwork exercise.
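
To make the three engineering pieces concrete, here is a deliberately simplified sketch of a visible label, a machine-readable record, and a check that the record belongs to a given file. Everything in it is an assumption for illustration: the Act does not prescribe this format, the field names are hypothetical, and a hash-based sidecar record does not survive re-encoding or cropping, so a production system would more likely need embedded provenance metadata or a robust watermark.

```python
import hashlib
import json


def build_disclosure(content: bytes, generator: str) -> dict:
    """Build a visible label plus a machine-readable record for generated content."""
    return {
        "label": "AI-generated",  # text shown to the user in the UI
        "manifest": {
            "ai_generated": True,
            "generator": generator,  # hypothetical identifier for the system
            "sha256": hashlib.sha256(content).hexdigest(),
        },
    }


def matches_manifest(content: bytes, manifest: dict) -> bool:
    """Check that a manifest flags generated content and matches these exact bytes."""
    return (
        manifest.get("ai_generated") is True
        and manifest.get("sha256") == hashlib.sha256(content).hexdigest()
    )


if __name__ == "__main__":
    image = b"example generated bytes"
    disclosure = build_disclosure(image, generator="demo-model")
    print(json.dumps(disclosure, indent=2))
    print(matches_manifest(image, disclosure["manifest"]))
```

The sketch is useful mainly for scoping: each of the three functions in a real system (labelling, embedding, detection) has its own owner, test suite, and failure modes.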

Why this is the last delay you will get

It is reasonable to ask whether the EU AI Act might be delayed again. The honest answer is that a second delay is very unlikely, and planning around the possibility of one is not rational. Five reasons.

The political argument for delay has been used. The case for the first postponement was that harmonised standards and conformity assessment infrastructure were not ready by 2 August 2026. The 2027 and 2028 backstop dates were negotiated specifically to absorb that gap. Using the same argument a second time would destroy its credibility.

The Cypriot Presidency framed this deal as a flagship deliverable. The Council statement explicitly calls it the first deliverable under the One Europe, One Market roadmap agreed by the three institutions last week. Reopening the file would mean conceding that the simplification flagship did not work. No incoming Council Presidency wants to inherit that signal.

The Annex I compromise was face-saving, not structural. The architecture of the AI Act, including direct applicability across most of Annex I, is preserved. Parliament's push to move Section A products into the sectoral track wholesale was rejected in favour of a narrower carve-out for the machinery regulation and a case-by-case implementing-act mechanism for the rest. The institutions held the line on the architecture. Holding the line on the dates is the natural consequence.

Two consecutive postponement rounds would terminate the Brussels-effect leverage. The AI Act was conceived as a global standard-setter. A regulation that gets delayed every time enforcement approaches stops setting standards. The institutions are aware of this. The competitiveness framing of today's deal is the framing they will defend.

Staged applicability has already worked once. The Article 4 AI literacy requirement and the Articles 51 to 55 GPAI obligations began to apply on 2 February 2025 and 2 August 2025 respectively, on schedule. The institutions have a working model for staged compliance and will use it.

The rational planning assumption from today onwards is that 2 December 2027 holds. The "Brussels will postpone again" thesis was always wishful thinking. It now has nowhere to go.

What survived the simplification round that most coverage will miss

The headline coverage of the deal will lead with the new dates and the nudification ban. The operationally consequential provision is buried lower in the press release: the registration obligation under Article 6(3) survived, in streamlined form.

The Commission's original proposal would have deleted the obligation to register, in the EU database, AI systems operating in Annex III contexts that providers self-assess as not meeting the high-risk threshold. Both Council and Parliament rejected the deletion. The 7 May agreement retains registration, with streamlined Annex VIII Section B content.

This converts self-assessment from a private internal memo into a public artefact. Every provider claiming that their HR, education, credit, essential-services, biometric or law-enforcement-adjacent AI does not meet the high-risk threshold will have to file that position in an EU database and stand behind it. National competent authorities and the AI Office will, from the registration date forward, have a queryable list of borderline classification calls, ready-made for thematic enforcement sweeps.

The "wait, classify out of scope, hope nobody notices" strategy that some industry advisors quietly recommended is now operationally dead. Either you are in scope and you comply, or you are out of scope and you publicly justify that position with documented reasoning that holds up under regulatory scrutiny. Both paths require the same governance evidence base.
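
One way to hold classification decisions to that standard is to store them as structured records that can be checked for completeness. The sketch below is an assumption about what such a record might contain; the field names are hypothetical and are not the Annex VIII Section B schema, which should be taken from the adopted text once it is published.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ClassificationRecord:
    """Internal record of a high-risk classification decision (illustrative fields)."""

    system_name: str
    annex_iii_area: str       # e.g. "employment"
    claimed_high_risk: bool
    reasoning: str            # why the threshold is or is not met
    evidence_refs: list[str] = field(default_factory=list)
    reviewed_by: str = ""
    reviewed_on: Optional[date] = None

    def gaps(self) -> list[str]:
        """List what is missing before this record could be defended externally."""
        missing = []
        if not self.reasoning.strip():
            missing.append("classification reasoning is empty")
        if not self.evidence_refs:
            missing.append("no evidence referenced")
        if not self.reviewed_by.strip():
            missing.append("no named reviewer")
        if self.reviewed_on is None:
            missing.append("no review date")
        return missing


if __name__ == "__main__":
    record = ClassificationRecord(
        system_name="cv-screener",
        annex_iii_area="employment",
        claimed_high_risk=False,
        reasoning="",
    )
    for gap in record.gaps():
        print(f"{record.system_name}: {gap}")
```

A record with open gaps is exactly the kind of classification call that would be hard to stand behind in a public registration entry.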

The Annex I compromise, explained

For organisations producing AI-enabled regulated products, the Annex I compromise needs careful reading, because the architecture is not a clean carve-out.

The machinery regulation (Regulation (EU) 2023/1230) is exempted from direct applicability of the AI Act. Health and safety requirements for high-risk AI systems in machinery products are added through delegated acts under the machinery regulation itself, with the Commission empowered to draft them. Practically, this means that machinery manufacturers face one conformity assessment regime under sectoral law, with AI-specific requirements layered into that regime rather than running on a parallel AI Act track.

For other Annex I sectors (medical devices under MDR and IVDR, toys, lifts, watercraft, and others), the compromise empowers the Commission to limit AI Act application through implementing acts in cases where sectoral law has similar AI-specific requirements. The Commission also gets a new obligation to publish guidance helping economic operators of high-risk AI systems covered by sectoral harmonisation legislation comply with the AI Act in a manner that minimises compliance burden.

Most Annex I direct applicability is preserved. The carve-out is real for machinery and conditional for the rest, with the actual scope decisions deferred to 2027 implementing acts. If you produce regulated AI-enabled products outside the machinery regulation, your conformity assessment path will be defined by a Commission implementing act that does not yet exist. Engaging with the relevant Directorate-General now is the rational move.

What "this is it" means for your compliance program

A meaningful number of enterprises have spent the past nine months deferring AI Act readiness work on the rational expectation that the deadline would slip. The deadline has now slipped. The deferral argument has been used. Anyone treating December 2027 as a comfortable horizon is misreading the calendar.

Nineteen months sounds like a long time. The procurement-and-implementation cycle for enterprise AI governance, including platform selection, integration, evidence pipeline build-out, internal training, and notified body engagement where relevant, runs 12 to 18 months in practice. Anyone starting an RFP in Q1 2027 for a December 2027 go-live is not going to make it. Anyone starting now and treating 2 December 2027 as binding has enough runway, but not much to spare.
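
The runway arithmetic is easy to check. The sketch below works backwards from 2 December 2027 using the 12-to-18-month cycle quoted above; the helper names are illustrative, and the cycle length is this post's estimate rather than a fixed rule.

```python
import calendar
from datetime import date

ANNEX_III_DEADLINE = date(2027, 12, 2)


def shift_months(d: date, months: int) -> date:
    """Move a date by whole months, clamping the day to the target month's length."""
    total = d.year * 12 + (d.month - 1) + months
    year, month_index = divmod(total, 12)
    month = month_index + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def latest_start(deadline: date, cycle_months: int) -> date:
    """Latest start date that still finishes a cycle of this length by the deadline."""
    return shift_months(deadline, -cycle_months)


if __name__ == "__main__":
    for cycle in (12, 18):
        start = latest_start(ANNEX_III_DEADLINE, cycle)
        print(f"{cycle}-month cycle: start by {start}")
    # An RFP opened at the very start of Q1 2027, on the fastest cycle:
    finish = shift_months(date(2027, 1, 1), 12)
    print(f"Q1 2027 start finishes {finish}; on time: {finish <= ANNEX_III_DEADLINE}")
```

On the 18-month end of the range, the latest viable start falls within weeks of the agreement itself, which is the practical meaning of "the clock is running again".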

The three concrete consequences of "this is it":

- The wait-for-the-next-omnibus strategy is no longer rational. Plan against 2 December 2027 as a hard date. Internal stakeholders who have been blocking AI governance investment on the basis of "they will delay it anyway" need a new conversation.

- Article 6(3) registration starts producing public artefacts. Build defensible classification reasoning now, before the registration database goes live, not after.

- ISO 42001 procurement gates are already in force at major buyers. The certification timeline runs 12 to 18 months independent of AI Act politics. If your enterprise customers require ISO 42001 in their RFPs, the new EU dates do not give you breathing room on that track.

What compliance teams should do this week, this month, this quarter

This week. Re-anchor your compliance program against 2 December 2027 for Annex III and 2 August 2028 for Annex I. If your team paused work on the assumption of indefinite delay, restart. Identify your single accountable owner for AI Act readiness in writing. Confirm provider versus deployer roles for every system in scope. For systems sitting near the high-risk classification boundary, document the classification reasoning to a standard you would defend in a public registration entry, because that is now the standard.

This month. Run a gap assessment against Articles 9 to 15 for systems classified as high-risk under the current law, covering risk management, data governance, technical documentation, record-keeping, transparency, human oversight, and accuracy, robustness and cybersecurity. For Annex I products, get into your notified body's queue now. The compromise reduces overlap but does not eliminate conformity assessment, and capacity is constrained. Begin synthetic-content disclosure engineering for the 2 December 2026 watermarking deadline. UI labels, metadata embedding, and detection at the edge all need to be production-ready by then.
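
A gap assessment of this kind can start as a simple checklist keyed by article. The sketch below is a starting point under stated assumptions: the article titles follow the Act, but the evidence file names are placeholders, and "has at least one evidence item" is a far weaker test than a real assessment of whether the evidence is adequate.

```python
# Requirements for high-risk AI systems, keyed by AI Act article number.
REQUIREMENTS = {
    9: "Risk management system",
    10: "Data and data governance",
    11: "Technical documentation",
    12: "Record-keeping",
    13: "Transparency and provision of information to deployers",
    14: "Human oversight",
    15: "Accuracy, robustness and cybersecurity",
}


def gap_assessment(evidence: dict[int, list[str]]) -> list[str]:
    """Return the articles for which no evidence item has been recorded."""
    return [
        f"Article {article}: {title}"
        for article, title in REQUIREMENTS.items()
        if not evidence.get(article)
    ]


if __name__ == "__main__":
    # Placeholder evidence inventory for one hypothetical system.
    inventory = {
        9: ["risk-register.xlsx"],
        11: ["technical-file-v2.pdf"],
        12: [],
    }
    for gap in gap_assessment(inventory):
        print(gap)
```

Running the same check per system produces a first list of where the work is, which is usually enough to size the programme before a deeper review.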

This quarter. Stand up the Article 49 registration documentation pipeline, including the Section B streamlined registration content for self-assessed-non-high-risk systems. Anchor governance maturity against ISO 42001, which gives you maturity that holds independently of the AI Act timeline. Compliance teams managing multiple frameworks (EU AI Act, ISO 42001, NIST AI RMF, NIS2, DORA) should consolidate evidence into a single pipeline rather than running parallel processes.
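
The idea behind a single evidence pipeline is that each artefact is collected once and tagged with every framework it supports. A minimal sketch, assuming a simple tagging model; the evidence names and the framework assignments below are illustrative only and are not a legal or audit-grade mapping.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class EvidenceItem:
    """One artefact, collected once, tagged with the frameworks it supports."""

    name: str
    frameworks: tuple[str, ...]


def index_by_framework(items: list[EvidenceItem]) -> dict[str, list[str]]:
    """Build a per-framework view over a single shared evidence store."""
    index: dict[str, list[str]] = defaultdict(list)
    for item in items:
        for framework in item.frameworks:
            index[framework].append(item.name)
    return dict(index)


if __name__ == "__main__":
    # Illustrative tagging; a real mapping needs review by counsel and auditors.
    store = [
        EvidenceItem("AI risk register", ("EU AI Act", "ISO 42001", "NIST AI RMF")),
        EvidenceItem("Incident response runbook", ("EU AI Act", "NIS2", "DORA")),
        EvidenceItem("Model change log", ("EU AI Act", "ISO 42001")),
    ]
    for framework, names in index_by_framework(store).items():
        print(f"{framework}: {', '.join(names)}")
```

The design choice is that framework views are derived from the store rather than maintained separately, so updating one artefact updates every view that depends on it.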

The work is the same as it was on 6 May. The clock is now binding.

Frequently asked questions

Has the EU AI Act been delayed? Yes. On 7 May 2026, the Council and Parliament reached provisional political agreement on the Digital Omnibus on AI. High-risk obligations under Annex III now apply from 2 December 2027, and high-risk obligations for AI embedded in regulated Annex I products apply from 2 August 2028. The deal still requires formal adoption, but it is the operative planning baseline.

When does the EU AI Act apply to high-risk AI systems? Obligations for stand-alone high-risk AI systems under Annex III (employment, education, biometrics, critical infrastructure, law enforcement, justice, migration, essential services) apply from 2 December 2027. Obligations for AI systems embedded as safety components in products under Annex I sectoral safety law apply from 2 August 2028.

When does the EU AI Act take effect? Different parts apply at different times. Article 5 prohibitions and Article 4 AI literacy obligations have applied since 2 February 2025. Articles 51 to 55 governing general-purpose AI models have applied since 2 August 2025. High-risk obligations now apply from 2 December 2027 (Annex III) or 2 August 2028 (Annex I) following the Omnibus agreement.

When do AI watermarking obligations under Article 50(2) take effect? 2 December 2026, following the three-month grace period agreed in the Omnibus deal. This applies to AI systems already on the market before 2 August 2026. Providers must implement transparency solutions for artificially generated audio, image, video and text content by that date.

Will the EU AI Act be delayed again? Almost certainly not. The political argument for delay has been used, the Cypriot Presidency framed the deal as a One Europe, One Market flagship deliverable, the Annex I compromise was face-saving rather than structural, and a second postponement would terminate the Brussels-effect leverage that makes the AI Act globally relevant. Plan against the new dates as binding.

Has the Omnibus deal been formally adopted? Not yet. The provisional agreement requires endorsement by the Council and the European Parliament, then legal-linguistic revision, then publication in the Official Journal. Adoption is expected in the coming weeks, well before the original 2 August 2026 deadline.

What was decided about industrial AI and sectoral safety law? The machinery regulation is carved out from direct AI Act applicability, with AI-specific health and safety requirements added through delegated acts under the machinery regulation itself. For other Annex I sectors including medical devices, toys, lifts and watercraft, the Commission can limit AI Act application through implementing acts where sectoral law already has similar requirements. The Commission is also obligated to publish guidance minimising compliance overlap.

Did the registration obligation for self-assessed-non-high-risk AI systems survive? Yes. The Commission proposed deleting the obligation, but both Council and Parliament rejected the deletion. The agreement retains the obligation with streamlined Annex VIII Section B content. Providers who self-assess Annex III-adjacent systems as non-high-risk under Article 6(3) must register them in the EU database and stand behind that classification.

What about general-purpose AI obligations? Unchanged. Articles 51 to 55 continue on their current schedule, with no substantive amendments in the Omnibus package. The Code of Practice on synthetic content is expected to be finalised in the coming months.

What should compliance teams do this week? Re-anchor against the new dates as binding. Re-classify any systems you de-scoped on the assumption that the Omnibus would narrow Annex III. Confirm provider/deployer roles. Document classification reasoning to a standard you would defend in a public registration entry. Engage notified bodies if you have Annex I products in scope.

The bottom line

The EU AI Act has been delayed once. It will not be delayed twice. Plan against 2 December 2027 and 2 August 2028 as binding dates, build the registration and classification evidence the Act will demand, and treat 2 December 2026 as the nearest live obligation for synthetic content disclosure. The simplification round is over. The next move is yours, not Brussels'.

If you want a practical AI governance program that delivers Annex III readiness against 2 December 2027 and watermarking compliance against 2 December 2026, book a 30-minute conversation with our team. We help organisations build evidence pipelines that hold across the EU AI Act, ISO 42001, NIST AI RMF, NIS2 and DORA in a single source of truth.

Editorial note: This post supersedes our 29 April analysis, which was written before the political agreement of 7 May 2026 and reflected the regulatory state immediately after the failed 28 April trilogue. That earlier post remains the reference for the trilogue collapse analysis.

Ready to Transform Your AI Governance?

Discover how Modulos can help your organization build compliant and trustworthy AI systems.