For rail, aviation, maritime, and logistics operators

AI governance for transportation

AI governance for transportation sits at the centre of the EU AI Act high-risk regime. Annex III Part 2 classifies AI used as a safety component in the management and operation of road traffic as high risk, and rail, air, and maritime traffic management fall under closely related sector safety regimes. NIS2 and the CER Directive sit behind it for cybersecurity and physical resilience. ERA, EASA, and EMSA issue the sector safety overlay.

Why AI governance for transportation sits at the centre of the EU AI Act

Annex III Part 2 of the EU AI Act is explicit: AI systems intended to be used as safety components in the management and operation of road traffic, and in the supply of water, gas, heating, and electricity, are high risk. Rail, aviation, and maritime traffic management fall under closely related sector safety regimes that regulators align with the AI Act in practice. Predictive maintenance, traffic management, driver monitoring, and autonomous subsystems all sit inside the high-risk regime or adjacent to it, with obligations for conformity assessment, data governance, human oversight, logging, and post-market monitoring.

Cybersecurity and resilience pile on top. Transport is listed in Annex I of NIS2 as a sector of high criticality. Most rail, air, water, and road operators above the size threshold are essential or important entities with mandatory risk-management, 24-hour incident reporting, supply chain, and board accountability obligations. The Critical Entities Resilience Directive covers physical resilience for the same operators. Sector regulators, notably the European Union Agency for Railways (ERA), EASA, and EMSA, issue safety-assurance guidance for AI-enabled subsystems, including AI for signalling, air traffic control, and navigation.

The operational reality is harder than the regulation. Transport networks are geographically distributed, operated by subcontractors, and built on OT systems with decade-long lifecycles. AI governance only works when obligations are decomposed into controls that the depot, the control centre, and the vendor can all execute and evidence. Anchoring the programme on the AI Act, then mapping NIS2, CER, and sector safety rules onto the same control set, is the shortest path to an audit-ready posture.

Regulations and Frameworks in Scope

The EU AI Act is the primary driver. Sector regulations and standards sit around it. Each card links to a deeper primer where available.

EU AI Act

European Union

Annex III Part 2 classifies AI used as safety components in the management and operation of critical digital infrastructure, road traffic, and the supply of water, gas, heating, and electricity as high risk. Road traffic management is directly named.

NIS2 Directive

European Union

Transport is named in Annex I as a sector of high criticality. Most rail, air, water, and road operators above the size threshold are essential or important entities with mandatory risk-management, 24-hour incident reporting, supply chain, and board accountability obligations.

CER Directive

European Union

The Critical Entities Resilience Directive covers physical resilience for the same transport sectors NIS2 covers for cybersecurity, though specific entities are designated per Member State. Member States identify critical entities and require risk assessments, resilience measures, and incident notification.

ERA, EASA, EMSA Guidance

Sector regulators

The European Union Agency for Railways (ERA), the European Union Aviation Safety Agency (EASA), and the European Maritime Safety Agency (EMSA) each issue guidance on AI assurance for safety-critical functions. Expect pre-market certification plus lifetime monitoring obligations for AI-enabled systems.

ISO/IEC 42001

ISO standard

The AI management system standard maps directly onto NIS2 governance and supply chain expectations, giving transport operators a certifiable baseline that covers both AI-specific and general cybersecurity hygiene.

GDPR and Worker Monitoring

EU data protection

Driver monitoring, fatigue detection, and biometric access control for depots and vessels all engage GDPR, including Article 88 on employment, and the EU AI Act's restrictions on emotion recognition in the workplace.

Where AI Governance Actually Bites

The pressure points driving board-level attention in this sector.

Safety-Critical AI

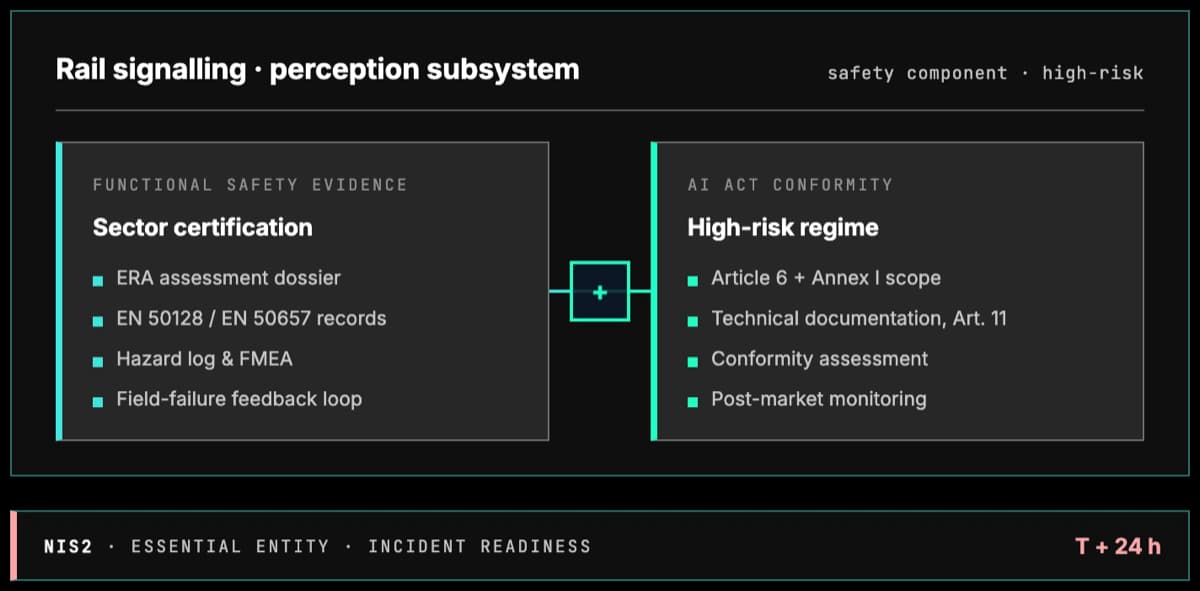

Signalling, collision avoidance, autonomous subsystems, and predictive maintenance for safety components fall under both AI Act high-risk rules and sector safety certification. Governance must bridge AI assurance and functional safety.

Operational Resilience

NIS2 and CER require operators to withstand and recover from cyber and physical incidents. AI-driven traffic management or routing systems are in-scope ICT assets, and their failure modes and dependencies must be documented and rehearsed.

Supply Chain and Vendor AI

NIS2 Article 21 treats supply chain as a mandatory risk-management topic. Every AI vendor (maintenance platforms, LLM copilots, vision systems) must be assessed, registered, and monitored for cybersecurity and governance posture.

Worker Monitoring and Biometrics

Driver fatigue detection, cabin-facing cameras, and depot biometric access all engage GDPR, AI Act Article 5 on emotion recognition at work, and collective bargaining rules in most Member States.

Incident Reporting

NIS2 imposes a three-stage reporting timeline: 24-hour early warning, 72-hour notification, one-month final report. AI-related incidents (degraded models, wrong routing, adversarial attack) fall inside this regime.
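The three stages above can be sketched as a small deadline calculator. This is a minimal illustration, not legal guidance: the function names `add_one_month` and `nis2_deadlines` are hypothetical, the clock is assumed to start when the operator becomes aware of the incident, and the final report is assumed to fall one calendar month after the 72-hour notification deadline. The exact reference points should be checked against the national transposition.

```python
import calendar
from datetime import datetime, timedelta, timezone


def add_one_month(moment: datetime) -> datetime:
    """Add one calendar month, clamping the day to the target month's length."""
    year = moment.year + (moment.month // 12)
    month = moment.month % 12 + 1
    day = min(moment.day, calendar.monthrange(year, month)[1])
    return moment.replace(year=year, month=month, day=day)


def nis2_deadlines(aware_at: datetime) -> dict[str, datetime]:
    """Return the three reporting deadlines counted from the moment of awareness.

    Assumption: the final report is due one calendar month after the
    72-hour notification deadline.
    """
    notification = aware_at + timedelta(hours=72)
    return {
        "early_warning": aware_at + timedelta(hours=24),
        "incident_notification": notification,
        "final_report": add_one_month(notification),
    }


if __name__ == "__main__":
    # Hypothetical incident: a degraded routing model detected at 08:00 UTC.
    aware = datetime(2025, 1, 28, 8, 0, tzinfo=timezone.utc)
    for stage, due in nis2_deadlines(aware).items():
        print(f"{stage}: {due.isoformat()}")
```

Using timezone-aware timestamps keeps the deadlines unambiguous when the depot, the control centre, and the vendor sit in different time zones.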

Board Accountability

NIS2 Article 20 requires the management body to approve and oversee cybersecurity risk management, complete training, and face personal sanctions for failures. AI governance needs a board view, not just a CISO dashboard.

High-Stakes AI Use Cases

Each use case is tagged with the AI Act gates it triggers. The Regulation runs four independent checks (Article 5 prohibitions, Annex III or Article 6 high-risk, Article 50 transparency, and Chapter V GPAI obligations) and the duties stack. A single system can hit several gates at once.
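The stacking logic can be sketched as four independent boolean checks that are evaluated separately and then collected. This is an illustrative sketch only: the `SystemProfile` class, its field names, and `triggered_gates` are hypothetical, and deciding whether each flag is true for a real system remains a legal assessment that code cannot make.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    """Hypothetical outcome of a legal assessment, one flag per gate."""
    prohibited_practice: bool = False  # Article 5
    high_risk: bool = False            # Annex III or Article 6
    transparency: bool = False         # Article 50
    gpai_provider: bool = False        # Chapter V


def triggered_gates(profile: SystemProfile) -> list[str]:
    """Return every gate the system hits; the checks do not exclude each other."""
    checks = [
        ("Article 5 prohibition", profile.prohibited_practice),
        ("High-risk (Annex III or Article 6)", profile.high_risk),
        ("Article 50 transparency", profile.transparency),
        ("Chapter V GPAI", profile.gpai_provider),
    ]
    return [name for name, hit in checks if hit]


if __name__ == "__main__":
    # Example: a driver fatigue monitor assessed as high-risk with
    # transparency duties stacked on top.
    fatigue_monitor = SystemProfile(high_risk=True, transparency=True)
    print(triggered_gates(fatigue_monitor))
```

Keeping the checks independent, rather than returning on the first match, mirrors the point that a single system can hit several gates at once.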

Emotion recognition in driver or crew cabins without safety justification

Article 5(1)(f) prohibits AI that infers emotions in the workplace outside medical or safety exceptions. Driver-state systems defended as safety measures must be tightly scoped to drowsiness and attention, not general affect inference.

AI as a safety component of road traffic or rail control

Road traffic management is explicitly high-risk under Annex III Part 2, and rail control falls under the closely related sector safety regime. Requires conformity assessment, technical file, human oversight, and continuous monitoring.

Autonomous and driver-assistance systems in fleet operations

Safety-critical ADAS and autonomy subsystems are high-risk under Article 6(1). Vehicles fall under the Union harmonisation legislation listed in Annex I, and type approval is a third-party conformity assessment, so both cumulative conditions are met. UNECE type-approval requirements under UN Regulations No. 155, 156, and 157 run alongside.

Predictive maintenance on rolling stock, aircraft, vessels

No AI Act gate triggered when advisory. Still feeds safety-critical decisions, so needs documented data quality, drift monitoring, and human override. Pulled into Annex III Part 2 high-risk if outputs become a safety component.

Driver fatigue and cabin monitoring (AI biometrics)

High-risk as a safety component of the vehicle under Article 6(1), where Annex I and third-party type approval both apply. Article 50(3) transparency stacks on top because biometric categorisation is involved. Outside a defendable safety purpose, Article 5(1)(f) would apply instead. GDPR lawful-basis analysis and works-council consultation still required.

Generative assistants for dispatch and control-room staff

Article 50 transparency applies: users must be told they interact with AI and synthetic content must be labelled. Chapter V GPAI duties sit on the model provider. Pulled into Annex III Part 2 high-risk if outputs become a safety component of traffic management.

How Modulos Solves It

A single governance graph covering every obligation above, so controls written for one framework earn credit across the rest.

One control set for AI Act, NIS2, CER, and sector safety

A control written for signalling safety earns credit for NIS2 Article 21 and AI Act Annex III. Write once, satisfy many. Evidence is reusable across ERA, EASA, or EMSA certification and supervisory reviews.
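The write-once, satisfy-many idea can be pictured as a mapping from each control to the obligations it evidences, inverted to show coverage per framework. This is a hedged sketch, not the Modulos data model: the control identifiers `SIG-01` and `VEN-02`, the `CONTROLS` table, and `controls_by_framework` are all hypothetical.

```python
from collections import defaultdict

# Hypothetical control register: control ID -> frameworks it earns credit for.
CONTROLS: dict[str, set[str]] = {
    "SIG-01": {"AI Act Annex III", "NIS2 Article 21"},  # signalling safety control
    "VEN-02": {"NIS2 Article 21"},                      # vendor assessment control
}


def controls_by_framework(controls: dict[str, set[str]]) -> dict[str, list[str]]:
    """Invert the register so each framework lists the controls that evidence it."""
    index: dict[str, list[str]] = defaultdict(list)
    for control_id in sorted(controls):
        for framework in sorted(controls[control_id]):
            index[framework].append(control_id)
    return dict(index)


if __name__ == "__main__":
    for framework, ids in controls_by_framework(CONTROLS).items():
        print(f"{framework}: {', '.join(ids)}")
```

In this toy register a single signalling control appears under both frameworks, which is the reuse the paragraph above describes.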

Live inventory of AI-enabled subsystems

Risk classification, vendor, dependency, regulatory mapping, and safety-component flag in one place. The view that the CISO, safety director, and board all need in front of regulators.
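As a rough illustration of what one inventory entry might hold, the sketch below models the fields named above as a record and filters for safety components. The `Subsystem` class, its field names, and the sample entries are hypothetical and do not describe the Modulos schema.

```python
from dataclasses import dataclass, field


@dataclass
class Subsystem:
    """Hypothetical inventory record for one AI-enabled subsystem."""
    name: str
    risk_class: str                      # e.g. "high-risk" or "advisory"
    vendor: str
    dependencies: list[str] = field(default_factory=list)
    regulations: list[str] = field(default_factory=list)
    safety_component: bool = False


def safety_components(inventory: list[Subsystem]) -> list[str]:
    """Return the names of subsystems flagged as safety components."""
    return [item.name for item in inventory if item.safety_component]


if __name__ == "__main__":
    inventory = [
        Subsystem("traffic-management", "high-risk", "Vendor A",
                  regulations=["AI Act Annex III", "NIS2"], safety_component=True),
        Subsystem("maintenance-advisor", "advisory", "Vendor B",
                  dependencies=["sensor-feed"], regulations=["NIS2"]),
    ]
    print(safety_components(inventory))
```

A flat record like this is enough to answer the first questions a supervisor tends to ask: which systems are safety components, who supplies them, and which rules apply.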

Structured NIS2 incident workflow with AI triggers

24-hour early warning, 72-hour notification, and one-month final report run as a workflow. AI-specific triggers like model drift and adversarial attack are first-class incident types, not afterthoughts.

Article 20 management body trail

The training, approvals, and sign-offs that NIS2 explicitly expects are recorded in the same trail as AI oversight records, not in a separate process. Personal accountability is demonstrable, not anecdotal.

FAQ

Yes. Transport is a sector of high criticality listed in Annex I of NIS2, covering air, rail, water, and road transport. Medium and large entities in these sectors are automatically essential or important entities, with obligations taking effect once Member States transpose the directive into national law.

Further reading

Deeper context, platform pages, and framework documentation from docs.modulos.ai.

- AI governance platform: How Modulos ties EU AI Act, NIS2, CER, and sector safety evidence into one graph.

- Guide to AI governance: The practice, the frameworks, and how to stand up a governance programme.

- NIS2 compliance: Annex I essential-entity obligations, Article 20 board duties, and 24-hour reporting.

- EU AI Act framework documentation: Technical guidance on conformity assessment for safety-component AI.

- ISO 42001 documentation: Clauses 4 to 10 mapped to Modulos controls, reusable under NIS2 Article 21.

Ready to Run AI Governance at Infrastructure Scale?

See how Modulos brings the EU AI Act, NIS2, CER, and sector safety guidance into a single governance graph built for transport operators with distributed sites, long asset lifecycles, and serious supervisory stakes.