You become a provider when you turn a system into a high-risk one.

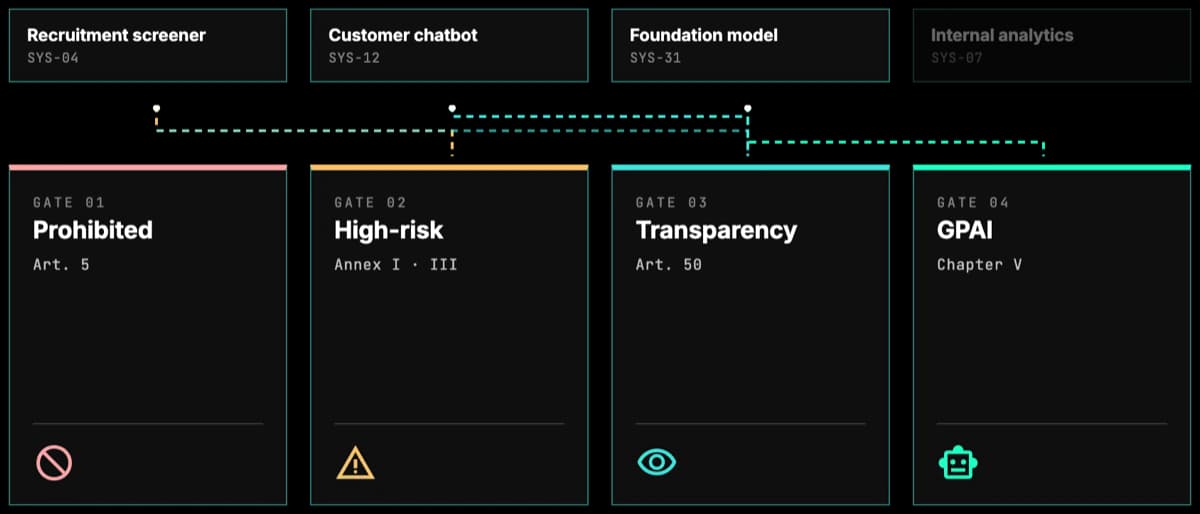

Fine-tune a foundation model for an Annex III use case, or integrate one into it, and the result may qualify as high-risk under Article 6. If it does, Article 25(1)(c) reclassifies you from deployer to provider. Substantially modify a system that is already high-risk, and Article 25(1)(b) does the same. Provider obligations are heavy: technical documentation, conformity assessment, and the full set of requirements in Articles 9 to 15.
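The two triggers above can be read as a small decision rule. The sketch below is a simplified illustration, not the full legal test: the `Change` type, its field names, and the `provider_trigger` function are hypothetical names introduced here, and each boolean stands in for a judgment (for example, whether a modification is "substantial") that in practice needs legal analysis.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Change:
    """Hypothetical summary of what you did to a system (illustrative only)."""
    high_risk_before: bool          # system was already high-risk before your change
    high_risk_after: bool           # result qualifies as high-risk under Article 6
    substantial_modification: bool  # your change counts as a substantial modification


def provider_trigger(change: Change) -> Optional[str]:
    """Return the Article 25(1) limb that would make you a provider, if any.

    Simplified reading of the two triggers described in the text:
    - (b): substantial modification of an already-high-risk system
    - (c): a system that was not high-risk becomes high-risk
    """
    if not change.high_risk_after:
        return None
    if change.high_risk_before and change.substantial_modification:
        return "Article 25(1)(b)"
    if not change.high_risk_before:
        return "Article 25(1)(c)"
    return None


# Fine-tuning a general model into an Annex III use case that is high-risk
fine_tuned = Change(high_risk_before=False, high_risk_after=True,
                    substantial_modification=False)
print(provider_trigger(fine_tuned))
```

Using an already-high-risk system without substantially modifying it returns `None` in this sketch, which matches the text: neither trigger applies, so you remain a deployer.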