For OEMs, tier-1 suppliers, and mobility operators

AI governance for mobility and automotive

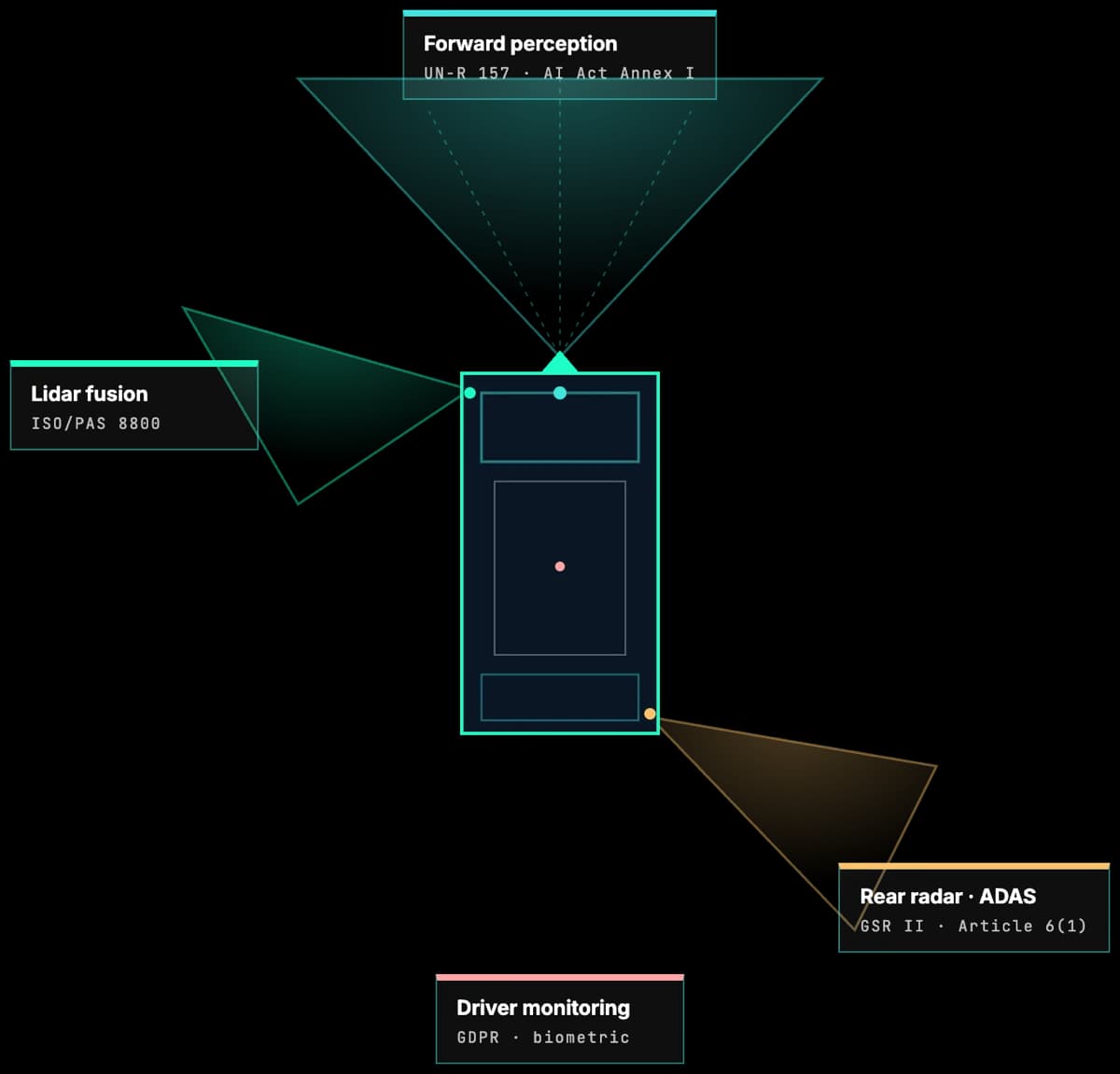

AI governance for mobility and automotive lands on every type-approved vehicle. Article 6(1) of the EU AI Act ties AI used as a safety component of a vehicle to Annex I Union harmonisation legislation. That pulls ADAS, automated driving, and driver monitoring directly into the high-risk regime, alongside UN-R 155, 156, 157 and the General Safety Regulation.


Why AI governance for automotive lands on every type-approved vehicle

Article 6(1) of the EU AI Act makes AI high-risk when two cumulative conditions hold: the AI is a safety component of (or is itself) a product covered by Annex I Union harmonisation legislation, and that product is subject to third-party conformity assessment. Vehicles type-approved under Regulation (EU) 2018/858 fall under Annex I, and type approval is a third-party conformity assessment, so the test is met. That reaches the perception, planning, and control stacks of ADAS and automated driving, the driver monitoring systems mandated by the General Safety Regulation, and any AI subsystem integrated into type-approved hardware. The obligations that follow are conformity assessment, technical documentation, data governance, human oversight, logging, post-market monitoring, and serious-incident reporting.
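The Article 6(1) test described above is a conjunction of two conditions, which can be sketched in a few lines. This is a minimal illustration, not legal advice or a Modulos API: the `AISystem` fields and the `is_high_risk_article_6_1` helper are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystem:
    """Hypothetical record for one AI subsystem (illustrative fields only)."""
    name: str
    is_safety_component_or_product: bool  # safety component of, or itself, the product
    product_under_annex_i: bool           # product covered by Annex I legislation
    third_party_assessment: bool          # product subject to third-party conformity assessment


def is_high_risk_article_6_1(system: AISystem) -> bool:
    """Return True only when both cumulative conditions hold."""
    condition_a = system.is_safety_component_or_product and system.product_under_annex_i
    condition_b = system.third_party_assessment
    return condition_a and condition_b


lane_keeping = AISystem("lane-keeping perception", True, True, True)
infotainment = AISystem("media recommender", False, True, True)

print(is_high_risk_article_6_1(lane_keeping))  # True
print(is_high_risk_article_6_1(infotainment))  # False
```

The media recommender fails the first condition because, in this assumed example, it is not a safety component, even though it ships in a type-approved vehicle.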

UN Regulations 155, 156, and 157 form the type-approval layer. UN-R 155 requires a certified cybersecurity management system across the vehicle lifecycle and has been mandatory for new vehicle registrations in the EU since 7 July 2024. UN-R 156 does the same for software updates, which reaches every over-the-air model update. UN-R 157 governs automated lane keeping systems and sets the template for higher-level automation. The General Safety Regulation (Regulation (EU) 2019/2144) mandates driver drowsiness and attention warning, intelligent speed assistance, and emergency lane-keeping on new vehicles as of the same date, with advanced driver distraction warning phased in from 7 July 2024 for new vehicle types. These obligations pre-existed the AI Act and now have to interoperate with it.

The operational problem is traceability. UN-R 155 already requires traceable risk treatment across the lifecycle of every vehicle type approval. The EU AI Act adds a technical file, a post-market monitoring plan, and a serious-incident reporting pipeline for each high-risk AI system. ISO/PAS 8800 and ISO/IEC 42001 give engineering-grade structure. Modulos gives the control graph that connects them, so a single control can serve type approval, AI Act conformity, and ISO certification at once.

Regulations and Frameworks in Scope

The EU AI Act is the primary driver. Sector regulations and standards sit around it. Each card links to a deeper primer where available.

EU AI Act

European Union: AI used as a safety component of vehicles, or as a product itself, is high risk via Article 6(1) and Annex I harmonisation legislation. Applies to ADAS, automated driving, and driver monitoring systems shipped in the EU.

UN Regulation 155

UNECE: Requires a certified cybersecurity management system across the vehicle lifecycle. Covers AI components indirectly by requiring risk treatment for all cybersecurity threats, including adversarial ML and model integrity.

UN Regulation 156

UNECE: Software update management system (SUMS) regulation. AI models deployed or updated over-the-air must be governed by a certified SUMS and tied back to type approval.

UN Regulation 157

UNECE: Automated lane keeping systems. Sets operational design domain, human-machine interface, and data-recording expectations for SAE Level 3 functionality. A precedent for future UN Regulations on higher-level automation.

General Safety Regulation

European Union: Regulation (EU) 2019/2144 mandates driver drowsiness and attention warning, advanced driver distraction warning, and intelligent speed assistance on new vehicles. These AI-enabled functions intersect with the AI Act and GDPR.

ISO/PAS 8800 and ISO 21448 (SOTIF)

ISO standards: Emerging and established standards for AI in road vehicles and safety of the intended functionality. Provide engineering-grade assurance argumentation that maps cleanly onto AI Act documentation.

GDPR and Biometric AI Rules

EU: Driver monitoring, cabin cameras, and biometric unlock all engage GDPR, Article 5(1)(f) of the EU AI Act on emotion recognition in the workplace, and national data-protection guidance on vehicle telematics.

Where AI Governance Actually Bites

The pressure points driving board-level attention in this sector.

ADAS and Automated Driving

Perception, planning, and control AI are high-risk safety components under the EU AI Act and sit inside UN-R 155, 156, 157 type approval. Governance must tie AI risk assessment to homologation evidence.

Driver Monitoring and Biometrics

GSR II mandates drowsiness and distraction warning, but the EU AI Act prohibits emotion recognition at work outside medical or safety exceptions. Fleet and shared-mobility deployments need a robust lawful basis and a worker-consultation trail.

OTA Updates and MLOps

Over-the-air AI model updates must live inside a UN-R 156 software update management system. Change management, rollback, and versioned evidence become regulated operational requirements.

Connected Services and Telematics

Usage-based insurance, driver profiling, and connected apps engage GDPR, AI Act transparency obligations, and national consumer protection. Governance has to cover the whole cloud-to-vehicle path.

Shared Mobility and Robotaxi

Biometric access, dynamic pricing, and generative assistants need clear lawful bases, transparency, and incident-reporting channels. Local licensing authorities increasingly ask for AI governance documentation.

Supplier and Type-Approval Traceability

Every tier-1 AI subsystem feeds into type approval. Modulos supports traceability from supplier AI risk assessment through to conformity evidence inside the manufacturer technical file.

High-Stakes AI Use Cases

Each use case is tagged with the AI Act gates it triggers. The Regulation runs four independent checks: Article 5 prohibitions, Article 6 high-risk classification (via Annex I or Annex III), Article 50 transparency, and Chapter V GPAI obligations. The duties stack, so a single system can hit several gates at once.
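The stacking behaviour can be sketched as four checks that are evaluated independently and accumulated into a set. This is an illustrative sketch only: the `Gate` enum and the `triggered_gates` function are hypothetical names, and the boolean inputs stand in for a legal assessment that has to be made case by case.

```python
from enum import Enum


class Gate(Enum):
    PROHIBITION = "Article 5 prohibition"
    HIGH_RISK = "Article 6 high-risk"
    TRANSPARENCY = "Article 50 transparency"
    GPAI = "Chapter V GPAI obligations"


def triggered_gates(
    *,
    prohibited_practice: bool = False,
    high_risk: bool = False,
    transparency_duty: bool = False,
    gpai_provider: bool = False,
) -> set[Gate]:
    """Run each check independently; a hit on one gate never skips the others."""
    checks = {
        Gate.PROHIBITION: prohibited_practice,
        Gate.HIGH_RISK: high_risk,
        Gate.TRANSPARENCY: transparency_duty,
        Gate.GPAI: gpai_provider,
    }
    return {gate for gate, hit in checks.items() if hit}


# Illustrative tagging only, not a legal determination.
driver_monitoring = triggered_gates(high_risk=True, transparency_duty=True)
in_car_assistant = triggered_gates(transparency_duty=True)

print(sorted(g.name for g in driver_monitoring))  # ['HIGH_RISK', 'TRANSPARENCY']
print(sorted(g.name for g in in_car_assistant))   # ['TRANSPARENCY']
```

Returning a set rather than a single label mirrors the point that the duties accumulate instead of one classification overriding another.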

Emotion inference in commercial driver cabins without safety justification

Article 5(1)(f) prohibits AI that infers emotions in the workplace outside medical or safety exceptions. Commercial fleet and shared-mobility deployments have to tightly scope driver-state inference to drowsiness and attention, not broader affect.

AI safety components in ADAS and automated driving

High-risk under Article 6(1). Vehicles are covered by Annex I Union harmonisation legislation and type approval is a third-party conformity assessment, so both cumulative conditions of Article 6(1) are met. Requires conformity assessment, technical documentation, monitoring, logging, and serious-incident reporting.

Driver drowsiness, attention, and distraction warning (GSR)

Mandatory under the General Safety Regulation and treated as a safety component of the vehicle, pulling it into Article 6(1) high-risk. Article 50(3) transparency obligations stack on top when the system infers emotions or uses biometric categorisation. Outside a defensible safety purpose, Article 5(1)(f) would apply instead.

Biometric driver identification and access

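High-risk under Annex III point 1 (biometrics) when used for identification or categorisation of natural persons. Annex III excludes biometric verification whose sole purpose is to confirm that a person is who they claim to be, so one-to-one unlock features need a scoped assessment. Article 50(3) transparency applies when biometric categorisation is involved. GDPR Article 9 and worker-monitoring law run alongside.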

Usage-based insurance and telematics pricing

No AI Act gate triggered: non-life insurance pricing is outside the Annex III point 5(c) high-risk scope, which is limited to life and health. Consumer protection, GDPR Article 22, and national insurance rules still require transparency, explainability, and human review paths.

Generative AI in-car assistants

Article 50 transparency applies: drivers must be told they are interacting with AI and synthetic content must be labelled. Chapter V GPAI duties sit on the model provider. Where the assistant's advice can affect driving, the safety risk still calls for logging, hallucination testing, and clear driver-safety guardrails.

How Modulos Solves It

A single governance graph covering every obligation above, so controls written for one framework earn credit across the rest.

AI Act, UN-R, GSR, and ISO mapped into one graph

A control written once for UN-R 155 earns credit for AI Act Article 6(1) conformity and ISO/IEC 42001 certification. Type approval evidence and AI governance evidence become the same artefacts.
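As a rough sketch of the idea, a control graph can be modelled as a mapping from each control to the frameworks it supports, then inverted to show coverage per framework. The control identifiers and the mappings below are placeholders invented for illustration. They do not reflect the actual Modulos data model or an authoritative crosswalk between these frameworks.

```python
from collections import defaultdict

# Hypothetical controls and the frameworks each one is assumed to support.
CONTROLS = {
    "risk-treatment-traceability": {"UN-R 155", "EU AI Act", "ISO/IEC 42001"},
    "ota-change-approval": {"UN-R 156", "EU AI Act"},
}


def controls_by_framework(controls: dict[str, set[str]]) -> dict[str, set[str]]:
    """Invert the control-to-framework mapping into framework-to-controls."""
    index: dict[str, set[str]] = defaultdict(set)
    for control, frameworks in controls.items():
        for framework in frameworks:
            index[framework].add(control)
    return dict(index)


index = controls_by_framework(CONTROLS)
print(sorted(index["EU AI Act"]))  # ['ota-change-approval', 'risk-treatment-traceability']
print(sorted(index["UN-R 155"]))   # ['risk-treatment-traceability']
```

In this toy example, one control written for UN-R 155 also appears under the EU AI Act and ISO/IEC 42001, which is the reuse the paragraph above describes.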

AI subsystem inventory with homologation traceability

Supplier, vehicle platform, model version, type-approval reference, Annex III classification, and Article 6(1) flag sit in one record. This is the traceability that notified bodies and homologation authorities expect.
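A minimal sketch of such a record, assuming a flat structure with one field per item named above. The class name, field names, sample values, and the `missing_fields` helper are hypothetical and are not taken from the Modulos platform.

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class AISubsystemRecord:
    """Hypothetical inventory entry for one AI subsystem."""
    supplier: str
    vehicle_platform: str
    model_version: str
    type_approval_reference: str
    annex_iii_classification: Optional[str]  # None when no Annex III point applies
    article_6_1_flag: bool


def missing_fields(record: AISubsystemRecord) -> list[str]:
    """List text fields left empty, so traceability gaps surface early."""
    return [name for name, value in asdict(record).items() if value == ""]


# Placeholder values for illustration only.
record = AISubsystemRecord(
    supplier="Example Tier-1 Supplier",
    vehicle_platform="platform-a",
    model_version="1.4.2",
    type_approval_reference="",
    annex_iii_classification=None,
    article_6_1_flag=True,
)

print(missing_fields(record))  # ['type_approval_reference']
```

Keeping the Annex III classification optional while the Article 6(1) flag is a required boolean reflects that the two classification routes are assessed separately.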

Serious-incident and OTA change workflow

Post-market monitoring, serious-incident reporting, and OTA change approvals run as audit-grade workflows. AI updates flow through UN-R 156 and the AI Act in a single structured process.

Shared view for product, safety, and AI ethics boards

Open risks, supplier posture, and readiness are visible across the teams that actually own the vehicle program. AI governance aligns with cybersecurity and SOTIF governance instead of fighting for attention.

FAQ

Is AI in ADAS and automated driving high-risk under the EU AI Act?

In practice, yes. Article 6(1) classifies AI as high-risk when it is a safety component of (or is itself) a product covered by Annex I Union harmonisation legislation and the product is subject to third-party conformity assessment. Vehicles type-approved under Regulation (EU) 2018/858 fall under Annex I, and type approval is a third-party conformity assessment, so both conditions are met. The perception, planning, and control stacks of ADAS and automated driving usually meet that definition and trigger the full conformity, documentation, monitoring, and serious-incident reporting regime.

Further reading

Deeper context, platform pages, and framework documentation from docs.modulos.ai.

- AI governance platform: How Modulos ties UN-R 155 cybersecurity, GSR II, and AI Act evidence into one control graph.

- Guide to AI governance: The practice, the frameworks, and how to stand up a governance programme.

- ISO/IEC 42001: The AI management system standard engineering and safety teams reuse across the lifecycle.

- EU AI Act framework documentation: Article 6(1) conformity assessment and CE marking for type-approved vehicles.

- ISO 42001 documentation: Clauses 4 to 10 mapped to Modulos controls and evidence.

Ready to Run Mobility AI Governance at OEM Scale?

See how Modulos maps the EU AI Act, UN-R 155, 156, 157, the General Safety Regulation, ISO/PAS 8800, and ISO/IEC 42001 into one graph, so AI homologation evidence and AI governance stop competing for engineering time.