For energy, water, gas, and district heating operators

AI governance for utilities

AI governance for utilities reaches directly into the control room. Annex III, point 2 of the EU AI Act names AI used as a safety component in the supply of electricity, gas, water, and heating as high risk. NIS2 adds essential-entity cybersecurity obligations, the CER Directive adds physical resilience obligations, and ISO/IEC 42001 gives you an audit-grade management system.

Why AI governance for utilities reaches into the control room

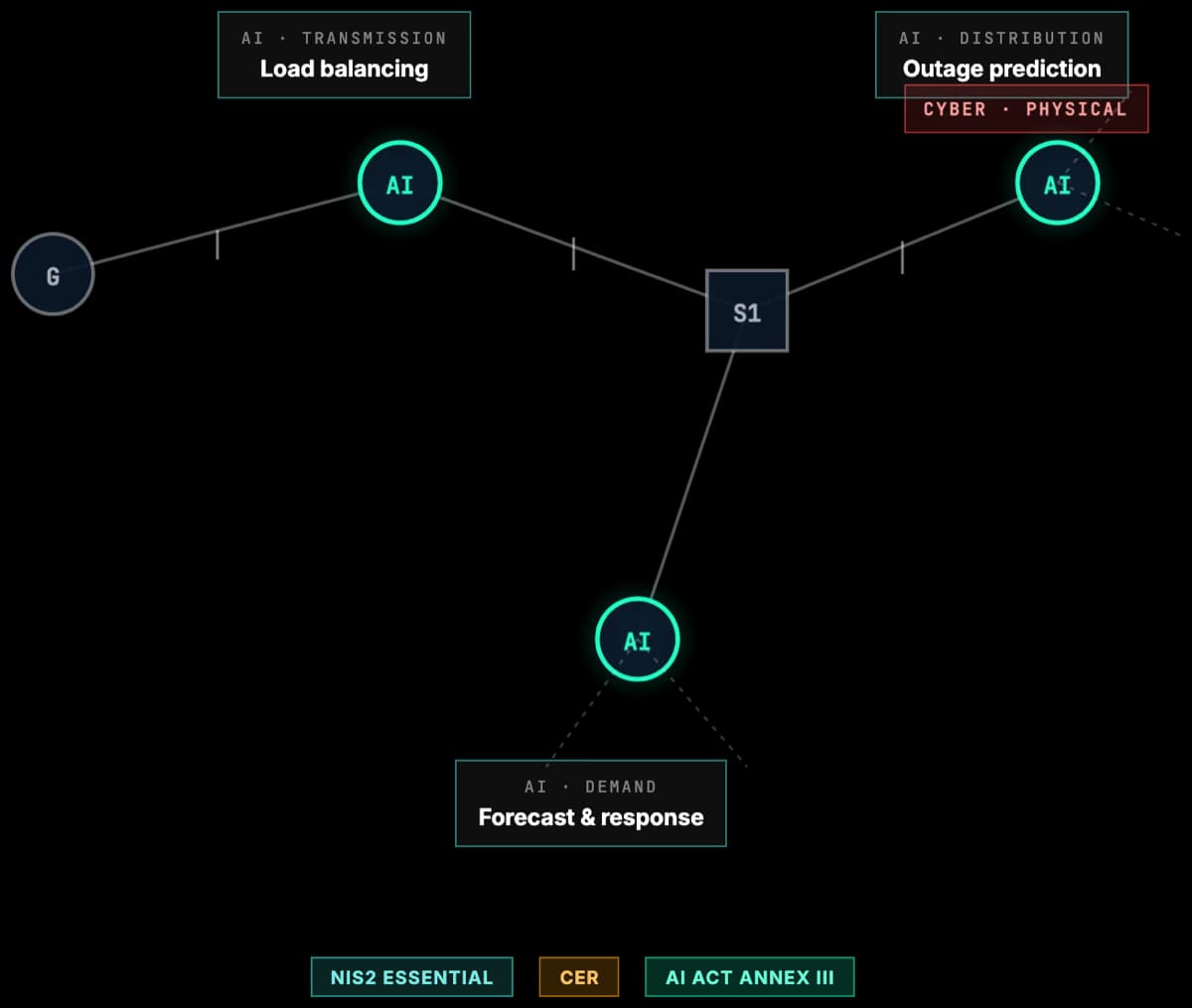

Annex III, point 2 of the EU AI Act names utilities explicitly: AI systems used as safety components in the management and operation of critical digital infrastructure, road traffic, and the supply of water, gas, heating, and electricity are high risk. Grid forecasting, load balancing, outage prediction, and smart-metering analytics sit inside that scope when their outputs act as safety components in operational decisions. For those systems, conformity assessment, technical documentation, human oversight, logging, and post-market monitoring all apply.

Cybersecurity layers on top. Electricity (transmission, distribution, generation), oil, gas, district heating and cooling, hydrogen, drinking water, and waste water are all sectors of high criticality under NIS2. Most operators are essential entities with mandatory risk-management duties and incident reporting that starts with a 24-hour early warning. The Critical Entities Resilience Directive adds parallel physical resilience obligations. ENISA, national energy regulators, and ACER issue guidance that reaches into AI governance and data quality, not just generic ICT hygiene.

The governance challenge is the operating environment. Utility AI runs across IT, OT, DSO systems, retail platforms, and customer applications, often with different vendors for each. Turning an AI Act obligation into a working control means getting the same evidence out of a substation, a forecasting service, and a billing system. Modulos is built for exactly that: a shared graph where one requirement fans out to the right teams and the evidence rolls back up for the supervisor.

Regulations and Frameworks in Scope

The EU AI Act is the primary driver. Sector regulations and standards sit around it. Each card links to a deeper primer where available.

EU AI Act

European Union

Annex III, point 2 classifies AI used as safety components in the management and operation of critical digital infrastructure and the supply of water, gas, heating, and electricity as high risk. It directly names the utility operational stack.

NIS2 Directive

European Union

Annex I lists energy (electricity, oil, gas, district heating and cooling, hydrogen), drinking water, and waste water as sectors of high criticality. Most operators are essential entities with mandatory risk management, supply chain, and 24-hour incident reporting obligations.

CER Directive

European Union

The Critical Entities Resilience Directive covers physical resilience for the same energy and water sectors that NIS2 covers for cybersecurity. Member States designate the specific critical entities and require resilience plans, risk assessments, and incident notifications from them.

Clean Energy Package and Network Codes

ACER / ENTSO-E

Regulation (EU) 2019/943 and related network codes govern data exchange, grid forecasting, and demand response. AI models feeding into day-ahead and intraday markets are subject to these rules and to market-abuse oversight.

ISO/IEC 42001

ISO standard

The AI management system standard aligns directly with NIS2 Article 21 risk-management measures. Certification gives utilities a defensible baseline across cybersecurity and AI governance.

GDPR and Smart Metering

EU data protection

Smart meter data, prepayment tariff decisions, and customer-vulnerability analytics all engage GDPR, including special-category data where energy-poverty flags are inferred.

Where AI Governance Actually Bites

The pressure points driving board-level attention in this sector.

Grid AI and Balancing

AI forecasting, state estimation, and load balancing feed directly into operational decisions. Under the EU AI Act these are high-risk safety components. Under NIS2 they are part of the ICT risk-management scope.

Renewables and Demand Response

ML-driven wind and solar forecasting and demand response platforms need data-quality controls, drift monitoring, and human override paths because their outputs move market positions and grid frequency.

Smart Metering Analytics

Customer consumption analytics used for tariff segmentation, fraud detection, or vulnerability scoring bring GDPR, consumer protection, and energy-poverty rules into the AI governance picture.

Cyber-Physical Resilience

NIS2 and CER require operators to prevent, withstand, and recover from cyber and physical incidents. AI failures and adversarial attacks need to be part of resilience planning, not treated as edge cases.

Supply Chain and Vendor AI

SCADA vendors, cloud forecasting services, and LLM copilots for control rooms are in-scope ICT suppliers under NIS2. The vendor risk process needs an AI-specific layer covering training data, drift, explainability, and exit strategy.

Incident Reporting and Transparency

The 24-hour early warning, 72-hour notification, and one-month final report regime under NIS2 applies to AI incidents. Utilities also have national-level market transparency rules if AI affects bidding.
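The three reporting clocks can be tracked with plain date arithmetic. The sketch below is illustrative only: the function name `nis2_deadlines`, the choice to start the clock when the operator becomes aware of the incident, and the approximation of "one month" as 30 days after the 72-hour notification deadline are all assumptions, not wording from the Directive or a Modulos API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ReportingDeadlines:
    early_warning: datetime   # 24 hours after awareness
    notification: datetime    # 72 hours after awareness
    final_report: datetime    # roughly one month after the notification


def nis2_deadlines(aware_at: datetime) -> ReportingDeadlines:
    """Compute illustrative reporting deadlines from the awareness timestamp."""
    if aware_at.tzinfo is None:
        # Naive timestamps make cross-site deadlines ambiguous, so reject them.
        raise ValueError("aware_at must be timezone-aware")
    notification = aware_at + timedelta(hours=72)
    return ReportingDeadlines(
        early_warning=aware_at + timedelta(hours=24),
        notification=notification,
        # Assumption: "one month" is approximated as 30 days.
        final_report=notification + timedelta(days=30),
    )


if __name__ == "__main__":
    detected = datetime(2025, 1, 1, 8, 0, tzinfo=timezone.utc)
    print(nis2_deadlines(detected))
```

The same clock can start from an AI-specific trigger, such as a drift alarm on a forecasting model, as long as the awareness timestamp is recorded consistently.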

High-Stakes AI Use Cases

Each use case is tagged with the AI Act gates it triggers. The Regulation runs four independent checks (Article 5 prohibitions, Annex III or Article 6 high-risk, Article 50 transparency, and Chapter V GPAI obligations) and the duties stack. A single system can hit several gates at once.
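A minimal sketch of how the four checks stack, assuming each use case has already been reduced to a handful of yes/no facts. The `UseCase` fields and the `triggered_gates` function are hypothetical names chosen for illustration; a real classification needs legal analysis, not boolean flags.

```python
from dataclasses import dataclass
from enum import Enum


class Gate(Enum):
    PROHIBITED = "Article 5 prohibition"
    HIGH_RISK = "Annex III / Article 6 high-risk"
    TRANSPARENCY = "Article 50 transparency"
    GPAI = "Chapter V GPAI"


@dataclass(frozen=True)
class UseCase:
    name: str
    social_scoring: bool = False            # detrimental scoring of natural persons
    safety_component: bool = False          # safety component in supply operations
    essential_service_access: bool = False  # gates access to essential services
    interacts_with_people: bool = False     # chatbots, synthetic content
    provides_gpai_model: bool = False       # organisation is the GPAI model provider


def triggered_gates(uc: UseCase) -> list[Gate]:
    """Run each check independently; the results stack rather than exclude each other."""
    gates: list[Gate] = []
    if uc.social_scoring:
        gates.append(Gate.PROHIBITED)
    if uc.safety_component or uc.essential_service_access:
        gates.append(Gate.HIGH_RISK)
    if uc.interacts_with_people:
        gates.append(Gate.TRANSPARENCY)
    if uc.provides_gpai_model:
        gates.append(Gate.GPAI)
    return gates


if __name__ == "__main__":
    scoring = UseCase("vulnerability scoring", social_scoring=True,
                      essential_service_access=True)
    print([g.value for g in triggered_gates(scoring)])
```

Because the checks are independent `if` statements rather than an `if/elif` chain, a single system can return several gates at once, and an advisory-only tool can return none.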

Social scoring of customers for tariff access or service decisions

Article 5(1)(c) prohibits AI used for social scoring of natural persons based on behaviour or personality traits where it leads to detrimental or unfavourable treatment in social contexts unrelated to the data source, or to treatment that is unjustified or disproportionate. Vulnerability scoring that gates access to supply or disconnection can reach this threshold if ungoverned.

AI safety components for electricity, gas, or water supply

Explicitly high-risk under Annex III, point 2. Full conformity assessment, documentation, monitoring, and human-oversight obligations apply.

Grid forecasting and balancing models

High-risk under Annex III, point 2 when the output is used as a safety component. Even in advisory mode, drift or data poisoning has market and grid consequences.

Smart metering vulnerability scoring

High-risk under Annex III point 5 when used to evaluate access to, or continued supply of, essential services like electricity, gas, water, or heating. Worst-case uses that lead to detrimental treatment unrelated to context approach Article 5(1)(c) social-scoring territory. GDPR DPIAs and consumer protection law run alongside.

Outage prediction and predictive maintenance

No AI Act gate is triggered when the system is advisory only. It still feeds operational decisions with resilience implications, so it needs change control and explainability for operators. It is pulled into Annex III, point 2 high-risk if its outputs become a safety component.

Customer service generative AI assistants

Article 50 transparency applies: customers must be told they interact with AI and synthetic content must be labelled. Chapter V GPAI duties sit on the model provider. Consumer protection and complaint-handling rules still apply in full.

How Modulos Solves It

A single governance graph covering every obligation above, so controls written for one framework earn credit across the rest.

One governance graph for AI Act, NIS2, CER, and ISO 42001

Grid, market-operations, and retail-facing AI share reusable controls and evidence. A control written for NIS2 Article 21 also supports the AI Act technical file. Certification and supervisor reporting pull from the same source.

AI inventory across IT and OT

Every AI-enabled IT and OT system carries a risk classification, vendor, data dependencies, and a safety-component flag. This is the view national regulatory authorities and ACER now expect during incident reviews and audits.
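As a rough illustration of what one inventory entry might hold, the sketch below models the four attributes named above. The `AISystemRecord` class, its field names, and the `review_scope` helper are hypothetical and do not reflect the Modulos data model; the rule that safety components and high-risk systems are reviewed first is likewise an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    name: str
    environment: str            # "IT" or "OT"
    risk_class: str             # e.g. "high-risk", "transparency", "none"
    vendor: str
    data_dependencies: list[str] = field(default_factory=list)
    safety_component: bool = False


def review_scope(inventory: list[AISystemRecord]) -> list[str]:
    """Names of systems flagged as safety components or classified high-risk, sorted."""
    return sorted(
        record.name
        for record in inventory
        if record.safety_component or record.risk_class == "high-risk"
    )


if __name__ == "__main__":
    inventory = [
        AISystemRecord("load-forecast", "OT", "high-risk", "VendorA",
                       ["scada-telemetry", "weather-feed"], safety_component=True),
        AISystemRecord("billing-chatbot", "IT", "transparency", "VendorB",
                       ["crm"]),
    ]
    print(review_scope(inventory))
```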

AI-aware NIS2 incident workflow

24-hour, 72-hour, and one-month reporting run as a single process with AI-specific triggers like model drift, data poisoning, and cascading OT failures. No duplicate playbooks for cyber and AI.

Article 20 management body evidence

The training, approvals, and sign-offs that NIS2 explicitly requires are produced alongside AI oversight records. The management body view is auditable and current, not assembled from memory at inspection time.

FAQ

Energy (electricity, oil, gas, district heating and cooling, hydrogen), drinking water, and waste water are all sectors of high criticality under NIS2 Annex I. Large entities in these sectors are essential entities, and medium-sized entities are generally important entities unless they are designated as essential. Certain entities, including TSOs, DSOs, and producers above specified thresholds, are in scope regardless of size.

Further reading

Deeper context, platform pages, and framework documentation from docs.modulos.ai.

- AI governance platform: How Modulos ties EU AI Act, NIS2, CER, and national energy rules into one graph.

- Guide to AI governance: The practice, the frameworks, and how to stand up a governance programme.

- NIS2 compliance: Annex I essential-entity obligations, Article 20 board duties, and 24-hour reporting.

- EU AI Act framework documentation: Technical guidance on conformity assessment for grid and supply-chain AI.

- ISO 42001 documentation: Clauses 4 to 10 mapped to Modulos controls, reusable under NIS2 Article 21.

Ready to Close the Gap Between Grid AI and the EU AI Act?

Modulos lets utilities run the EU AI Act, NIS2, CER, ISO/IEC 42001, and national energy rules from one governance graph, so grid, market, and customer-facing AI share the same controls and evidence.