For banks, insurers, asset managers, and fintechs

AI governance for financial services

AI governance for financial services moves first under the EU AI Act. Annex III point 5 explicitly names creditworthiness assessment and life and health insurance pricing as high-risk use cases. Every bank and insurer that uses AI to score consumer credit or to price life and health cover sits inside the high-risk regime. DORA, ECB model risk guidance, and ISO/IEC 42001 stack on top.

The Numbers Driving AI Governance in This Sector

Third-party figures from regulators, standards bodies, and industry sources. Tiles are colour-coded by type: EU AI Act, cyber and risk, market, and timeline. Every tile links to its source.

Why AI governance for financial services moves first

Of all the sectors covered by the EU AI Act, financial services has the highest density of explicitly named high-risk use cases. Annex III point 5(b) classifies AI systems used to evaluate the creditworthiness of natural persons or to establish their credit score as high risk. Annex III point 5(c) does the same for risk assessment and pricing in relation to natural persons in life and health insurance. The only carve-out, in point 5(b), is AI used to detect financial fraud. This is why AI Act readiness moves first in banking and insurance.

High-risk classification is not a lighter version of existing model risk management; it adds to it. The AI Act brings conformity assessment, technical documentation, structured data governance, human oversight with automatic logging, and post-market monitoring. It also requires fundamental rights impact assessments, which Article 27 imposes not only on public bodies but also on deployers of point 5(b) and 5(c) systems. Those deliverables layer on top of the model risk expectations most banks already meet, such as SR 11-7 and the ECB Guide to Internal Models. DORA, fully applicable since 17 January 2025, runs in parallel. It requires ICT risk management, resilience testing, and incident reporting from the financial entity, plus a register of information that must cover every ICT third-party provider in the AI/ML value chain.

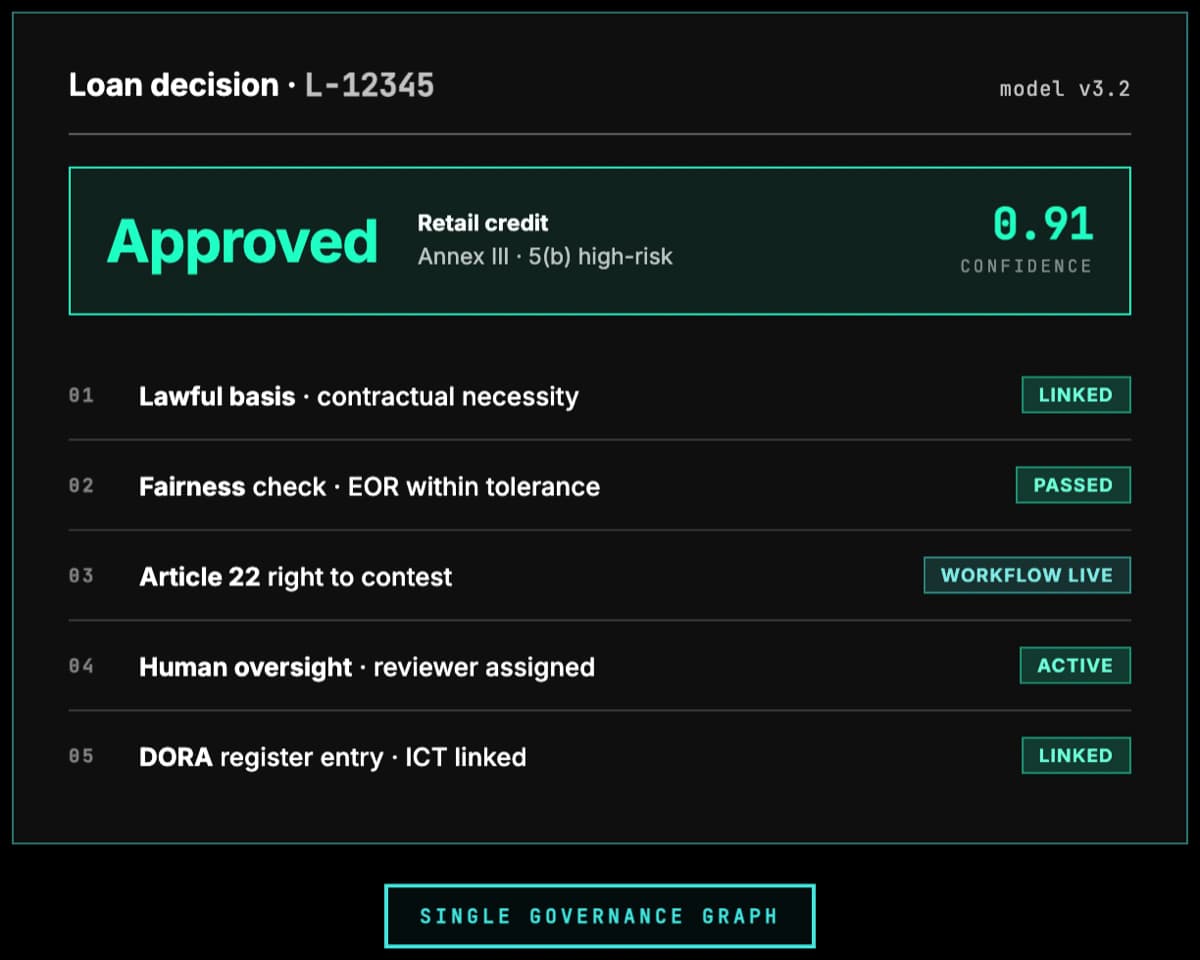

The practical challenge is not understanding any single framework. It is reconciling them. A single credit model needs to satisfy AI Act high-risk duties, model risk validation, DORA incident reporting, ISO/IEC 27001 controls, GDPR Article 22, and often national consumer-credit law at the same time. The cost of running those as separate programmes is measurable in headcount. Modulos is built to treat them as a single governance graph, where one control earns credit across many frameworks.

Regulations and Frameworks in Scope

The EU AI Act is the primary driver. Sector regulations and standards sit around it. Each card links to a deeper primer where available.

EU AI Act

European Union

Annex III point 5(b) names credit scoring and point 5(c) names life and health insurance pricing as high risk. High-risk obligations include conformity assessment, technical documentation, data governance, human oversight, logging, and post-market monitoring.

DORA

EU financial regulators

Digital Operational Resilience Act, fully applicable since 17 January 2025. Covers ICT risk management, incident reporting, resilience testing, and a register of ICT third-party providers, including AI and ML vendors.

ISO/IEC 42001

ISO standard

The AI management system standard that supervisors increasingly reference as evidence of demonstrable governance. Offers a certifiable control set that maps closely to EU AI Act requirements and to the NIST AI RMF.

ECB Guide to Internal Models

European Central Bank

Expectations for IRB credit risk, market risk, and counterparty credit risk models. AI and ML internal models must meet the same validation, data quality, and documentation standards as traditional statistical models.

GDPR Article 22

EU data protection

Restricts solely automated decision-making with legal or similarly significant effects on individuals. Customers retain the right to human review, explanation, and contestation of AI-driven credit and insurance decisions.

NIST AI RMF

US guidance

Widely adopted by US banks and insurers. Aligns with OCC Bulletin 2011-12 and SR 11-7 model risk management expectations and supports firms that need combined US and EU coverage.

Where AI Governance Actually Bites

The pressure points driving board-level attention in this sector.

Credit and Risk Models

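Retail credit scoring and life and health insurance underwriting are named high-risk in Annex III. SME lending and PD/LGD models can enter the high-risk scope where they are used to assess the creditworthiness of natural persons, such as retail borrowers or sole traders. AI Act obligations sit alongside existing model risk management rules with no reduction in validation rigour.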

Fraud and AML

Transaction monitoring, sanctions screening, and behavioural anomaly detection fall outside the Annex III credit clause, which expressly excludes fraud detection. They still face GDPR Article 22, consumer protection rules, and supervisor expectations on bias and explainability.

Customer-Facing AI

Chatbots, robo-advisors, and generative customer service agents face MiFID II suitability rules and consumer duty scrutiny. Evidence of human oversight, logging, and disclosure becomes a regulatory deliverable.

DORA ICT and Vendor AI

Cloud-hosted LLMs and third-party ML platforms are in-scope ICT third-party providers under DORA. The register, exit strategies, and concentration risk analysis extend to every production AI dependency.

Market and Algo Trading

AI-driven execution, surveillance, and portfolio models trigger MiFID II algorithmic trading controls, kill-switch obligations, and NCA reporting. Backtesting and stress-testing evidence must be retained and auditable.

Board and Senior Manager Liability

SMCR in the UK, NIS2 Article 20, and DORA Article 5 all place accountability for ICT risk, including the ICT that runs AI, on management, and SMCR makes it personal. Boards need concise dashboards tying AI programmes to regulatory and financial impact.

High-Stakes AI Use Cases

Each use case is tagged with the AI Act gates it triggers. The Regulation runs four independent checks (Article 5 prohibitions, Annex III or Article 6 high-risk, Article 50 transparency, and Chapter V GPAI obligations) and the duties stack. A single system can hit several gates at once.

Subliminal or manipulative nudging in retail finance

Article 5(1)(a) prohibits AI using subliminal, purposefully manipulative, or deceptive techniques that materially distort behaviour and cause significant harm. Article 5(1)(b) separately prohibits AI exploiting vulnerabilities tied to age, disability, or a specific social or economic situation with the same effect. Nudging in credit, debt, or investment flows can cross either line.

Retail credit scoring for natural persons

Annex III point 5(b) explicitly classifies consumer creditworthiness assessments as high-risk. Expect conformity assessment, technical documentation, human oversight, and bias-testing obligations.

Life and health insurance pricing

Annex III point 5(c) classifies risk assessment and pricing for natural persons in life and health insurance as high-risk. Same high-risk obligations as retail credit.

Fraud detection and transaction monitoring

Carved out of the Annex III 5(b) credit clause, so no AI Act gate is triggered in typical deployments. GDPR Article 22, consumer protection, and supervisor expectations on explainability and human review still apply in full.

Generative AI customer service

Article 50 transparency obligations apply: customers must be told they interact with AI and synthetic content must be labelled. Chapter V GPAI duties sit on the model provider. MiFID II suitability, consumer duty, and GDPR obligations run alongside.

Internal analytics and reporting

No AI Act gate triggered when outputs are not used for customer decisions. DORA ICT risk management and internal model validation still apply.
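The four independent gates described above can be sketched as checks that run separately and then stack. This is a minimal Python sketch under stated assumptions. The `AISystem` record, its flags, and `ai_act_gates` are hypothetical names, and each flag stands in for a legal judgement a reviewer has to make. The sketch also ignores the Article 6(1) Annex I product route and the Article 6(3) derogation, so treat it as a screening aid rather than a classification engine.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class AISystem:
    """Hypothetical screening record; every flag is a reviewer's legal judgement."""
    name: str
    uses_prohibited_practice: bool = False     # Article 5, e.g. manipulative nudging
    annex_iii_point: Optional[str] = None      # e.g. "5(b)" or "5(c)"; None if not listed
    interacts_with_people: bool = False        # Article 50 disclosure
    generates_synthetic_content: bool = False  # Article 50 labelling
    provides_gpai_model: bool = False          # Chapter V falls on GPAI model providers


def ai_act_gates(system: AISystem) -> List[str]:
    """Run the four checks independently and return every gate that fires."""
    gates: List[str] = []
    if system.uses_prohibited_practice:
        gates.append("Article 5 prohibition")
    if system.annex_iii_point is not None:
        gates.append(f"High-risk (Annex III point {system.annex_iii_point})")
    if system.interacts_with_people or system.generates_synthetic_content:
        gates.append("Article 50 transparency")
    if system.provides_gpai_model:
        gates.append("Chapter V GPAI")
    return gates


portfolio = [
    AISystem("Retail credit scoring", annex_iii_point="5(b)"),
    AISystem("Fraud detection"),  # point 5(b) expressly excludes fraud detection
    AISystem("Generative customer service",
             interacts_with_people=True, generates_synthetic_content=True),
]
for system in portfolio:
    print(system.name, "->", ai_act_gates(system) or ["no AI Act gate"])
```

Because no check short-circuits another, an assistant that both chats with customers and scores their credit would return two gates. This mirrors the stacking the Regulation describes.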

How Modulos Solves It

A single governance graph covering every obligation above, so controls written for one framework earn credit across the rest.

Single control set across AI Act, DORA, ISO 42001, and NIST

Write a control once and map it to every framework it satisfies. Conformity assessment, DORA ICT register evidence, model risk validation artefacts, and ISO certification all read from the same source of truth.
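The idea of writing a control once and mapping it to every framework it satisfies can be illustrated with a simple many-to-many mapping that is inverted to show coverage per framework. This is not the Modulos data model. The control IDs (`CTRL-LOG-01` and so on), the `coverage_by_framework` function, and the framework assignments are hypothetical, chosen for illustration rather than taken from a verified crosswalk.

```python
from collections import defaultdict
from typing import Dict, List, Set

# Hypothetical controls and framework assignments, for illustration only.
CONTROLS: Dict[str, Set[str]] = {
    "CTRL-LOG-01 automatic event logging": {"EU AI Act", "DORA", "ISO/IEC 42001"},
    "CTRL-VAL-02 independent model validation": {"EU AI Act", "SR 11-7", "ECB Guide to Internal Models"},
    "CTRL-TPR-03 ICT third-party register entry": {"DORA", "ISO/IEC 42001"},
}


def coverage_by_framework(controls: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """Invert control -> frameworks into framework -> controls that earn credit there."""
    coverage: Dict[str, List[str]] = defaultdict(list)
    for control, frameworks in controls.items():
        for framework in frameworks:
            coverage[framework].append(control)
    return dict(coverage)


for framework, mapped in sorted(coverage_by_framework(CONTROLS).items()):
    print(f"{framework}: {len(mapped)} control(s)")
```

In this toy mapping, evidence attached to `CTRL-LOG-01` counts toward three frameworks at once. That reuse is what a single source of truth is meant to deliver.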

Live AI system inventory with regulatory classification

Each AI system carries Annex III classification, intended purpose, provider and deployer role, DORA third-party register fields, and supporting documentation. Supervisor requests are answered in hours, not weeks.

Workflow-driven bias, explainability, and human oversight

Bias testing, explainability reviews, and human-oversight sign-offs run as structured workflows with tamper-evident evidence. No more shared folders or screenshots pasted into email chains.
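One common way to make evidence tamper-evident is hash chaining: each record commits to the hash of the record before it, so editing any earlier entry breaks verification. The Python sketch below illustrates that general technique only. It does not describe how Modulos stores evidence, and `EvidenceLog` and the sample record fields are hypothetical. Note that a chain only proves tampering if its latest hash is also anchored somewhere the editor cannot rewrite, such as a separate system or a signed timestamp.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: Dict[str, Any]) -> str:
    """SHA-256 over the previous hash plus a canonical JSON encoding of the record."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class EvidenceLog:
    """Append-only log where each entry commits to the hash of the previous one."""
    entries: List[Dict[str, Any]] = field(default_factory=list)

    def append(self, record: Dict[str, Any]) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        digest = _digest(prev_hash, record)
        self.entries.append({"prev": prev_hash, "record": record, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every link; any edited record or broken link returns False."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash or _digest(prev_hash, entry["record"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = EvidenceLog()
log.append({"system": "retail-credit-v3", "step": "bias test", "result": "pass"})
log.append({"system": "retail-credit-v3", "step": "human oversight sign-off", "by": "model owner"})
print(log.verify())
log.entries[0]["record"]["result"] = "fail"  # simulate someone editing earlier evidence
print(log.verify())
```

The first `verify()` call succeeds. After the earlier bias-test record is edited, the recomputed hash no longer matches the stored one, and the check fails.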

Board dashboards that SMCR and DORA Article 5 actually expect

Open controls, outstanding risks, third-party concentration, AI incident history, and training completion roll up to the management body view. Personal accountability is traceable, not anecdotal.

FAQ

Is AI credit scoring high-risk under the EU AI Act?

Yes. Annex III point 5(b) explicitly classifies AI systems used to evaluate the creditworthiness of natural persons or to establish their credit score as high risk. The only carve-out is AI systems used for the purpose of detecting financial fraud. High-risk classification triggers conformity assessment, technical documentation, data governance, human oversight, logging, and post-market monitoring.

Further reading

Deeper context, platform pages, and framework documentation from docs.modulos.ai.

- AI governance platform: how Modulos operationalises the EU AI Act, DORA, and model risk in one graph.

- AI risk management: monetary risk quantification and model-risk workflows for credit, insurance, and algo trading.

- Guide to AI governance: the practice, the frameworks, and how to stand up a governance programme.

- EU AI Act framework documentation: technical guidance on conformity assessment and CE marking for high-risk AI.

- ISO 42001 documentation: clauses 4 to 10 mapped to Modulos controls and evidence.

Ready to Operationalise the EU AI Act in Financial Services?

See how Modulos maps the AI Act, DORA, ISO/IEC 42001, and your internal model risk policy into a single governance graph, with controls, evidence, and board reporting your supervisors will recognise.