For telecom operators, ISPs, MNOs, and network vendors

AI governance for telecommunications

AI governance for telecommunications starts with the EU AI Act. Annex III Part 2 names AI used as a safety component in the management and operation of critical digital infrastructure as high risk. BEREC and ENISA add sector-specific guidance. NIS2 makes telecom operators essential entities.

The Numbers Driving AI Governance in This Sector

Third-party figures from regulators, standards bodies, and industry sources.

- Modulos and Telefónica Tech: agreement to promote responsible AI governance.

Why AI governance for telecom starts with the EU AI Act

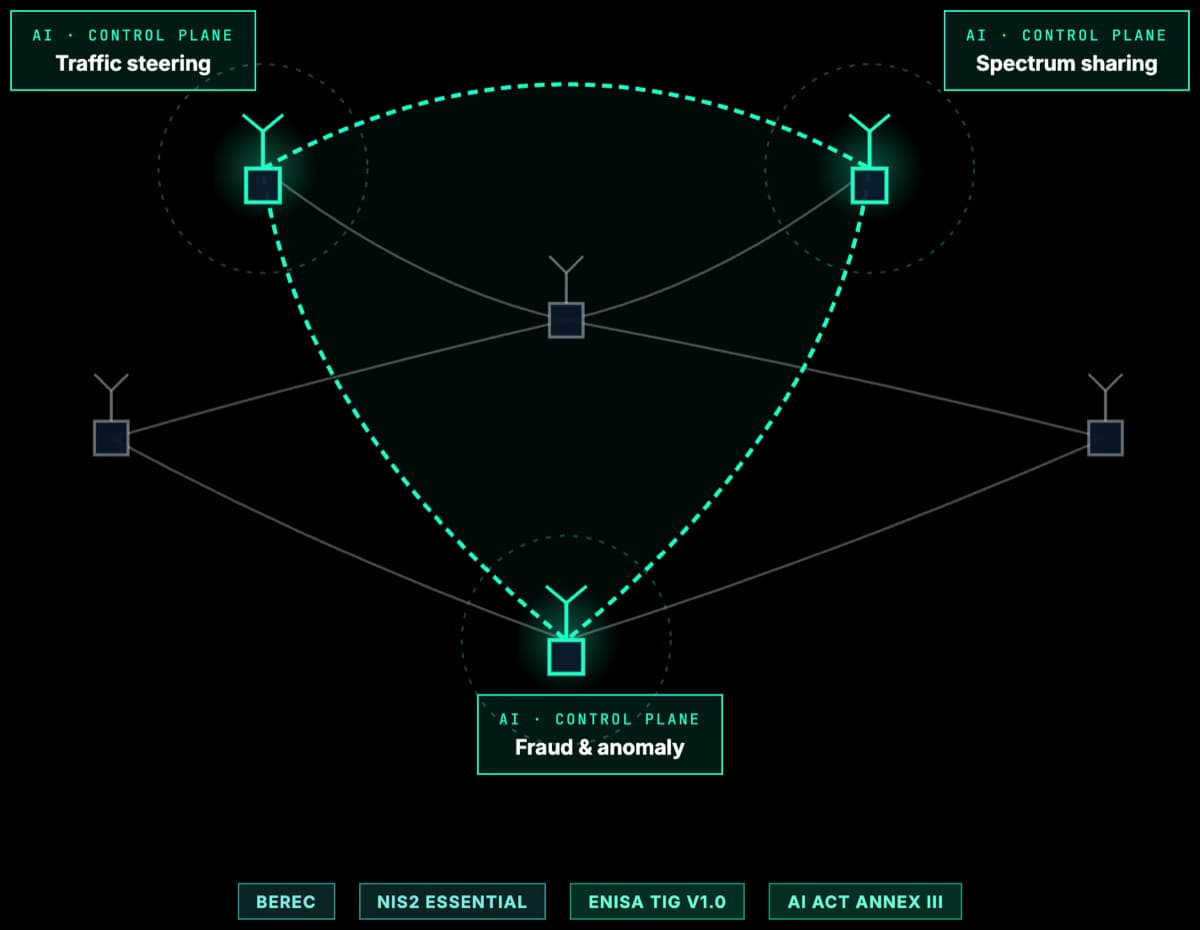

Annex III Part 2 of the EU AI Act classifies AI systems used as safety components in the management and operation of critical digital infrastructure as high risk. On the common interpretation, that reaches network-automation, security, and resilience AI deployed by telecom operators. Self-organising networks, dynamic spectrum sharing, anomaly detection, traffic steering, fraud models, and generative customer service all sit inside or adjacent to the high-risk regime. For systems that fall inside it, conformity assessment, data governance, human oversight, logging, and post-market monitoring apply.

BEREC has been explicit about the sector exposure. Its June 2023 report on AI solutions in telecommunications names six priority use cases: network and capacity planning, channel modelling, dynamic spectrum sharing, QoS and traffic classification, security optimisation and threat detection, and fraud detection. BEREC's 2025 work programme follows up with a report on the impact of AI on internet openness and the environment. These are the exact workloads most likely to trigger AI Act obligations, GDPR scrutiny under the EDPB Opinion 28/2024 on AI models, and supervisory attention from NRAs such as Ofcom and BNetzA.

Cybersecurity is the second layer. Providers of public electronic communications networks and services are in NIS2 Annex I as essential entities, and small or micro providers are in scope regardless of size under Article 2(2)(b). NIS2 repealed EECC Articles 40 and 41 in October 2024. Telecom security obligations now flow from NIS2, ENISA Technical Implementation Guidance (v1.0, June 2025), and Implementing Regulation (EU) 2024/2690. Anchoring the programme on the AI Act, then mapping NIS2 onto the same controls, is the shortest path to an audit-ready posture.

Regulations and Frameworks in Scope

The EU AI Act is the primary driver. Sector regulations and standards sit around it. Each card links to a deeper primer where available.

EU AI Act

European Union

Annex III Part 2 classifies AI systems used as safety components in the management and operation of critical digital infrastructure as high risk. On the common interpretation, this reaches network automation, security AI, and resilience-critical ML deployed by telecom operators.

BEREC AI Reports

BEREC

The June 2023 report identifies six priority AI use cases in telecoms: network and capacity planning, channel modelling, dynamic spectrum sharing, QoS and traffic classification, security optimisation and threat detection, and fraud detection. BEREC's 2025 follow-up focuses on the impact of AI on internet openness and the environment. Together they set the expectations NRAs will carry into national enforcement.

NIS2 Directive

European Union

Providers of public electronic communications networks and services are Annex I essential entities. Small and micro providers are in scope regardless of size (Article 2(2)(b)). Mandatory risk-management, 24-hour incident reporting, supply-chain, and management-body obligations.

ENISA NIS2 Technical Guidance

ENISA

ENISA Technical Implementation Guidance for NIS2 (v1.0, June 2025) and Implementing Regulation (EU) 2024/2690 set sector-specific cybersecurity risk-management expectations for digital infrastructure and ICT service management.

EDPB Opinion 28/2024

EDPB

Covers GDPR treatment of AI models: anonymity thresholds, legitimate interest as a lawful basis, and the consequences of unlawful training data. Directly relevant to churn models, anti-fraud, and customer-analytics AI.

ISO/IEC 42001

ISO standard

The AI management system standard increasingly referenced by regulators as an evidentiary baseline. Maps cleanly onto NIS2 Article 21 risk-management measures when combined with ISO/IEC 27001.

GDPR and ePrivacy

EU data protection

Telco customer data, metadata, traffic data, and location data are among the most sensitive categories under GDPR and the ePrivacy Directive. AI customer analytics, behavioural fraud detection, and generative AI chatbots all need documented lawful bases.

Ofcom Strategic Approach to AI 2025/26

UK regulator

Published June 2025. Prioritises synthetic media, personalisation, and network security and resilience. Ofcom opened a Call for Input on AI in telecoms in January 2026, signalling a more active supervisory posture for UK operators.

Where AI Governance Actually Bites

The pressure points driving board-level attention in this sector.

Network AI and Autonomy

Self-organising networks, dynamic spectrum sharing, traffic steering, and AI-driven capacity planning are the clearest candidates for the AI Act high-risk safety-component bracket for critical digital infrastructure. Governance has to produce evidence of data quality, drift monitoring, and human override.

Fraud and SIM-Swap AI

Behavioural fraud models and SIM-swap detection have real customer impact and engage GDPR Article 22, consumer protection, and emerging eIDAS 2.0 identity-wallet expectations. Explainability and human-review paths become regulated artefacts.

Generative AI Customer Service

LLM-based chatbots and generative assistants trigger Article 50 transparency duties (disclosure that users interact with AI, labelling of synthetic content) and, upstream, Chapter V GPAI obligations on the model provider. Consumer protection, complaint-handling, and mis-sell risks compound when used for regulated telco products. Logs, testing, and escalation protocols need to be auditable.

Network Security and Threat Detection

ENISA Threat Landscape 2025 flags telecommunications as a priority target for state-aligned espionage, even though public administration is the single most-impacted sector overall. AI used for defence is both part of the solution and part of the AI Act safety-component analysis.

Supply Chain and Vendor AI

NIS2 Article 21 and the EU 5G Cybersecurity Toolbox already frame vendor risk for telcos. AI extends the problem to cloud inference providers, foundation-model vendors, and RAN automation platforms. Contracts and registers need AI-specific clauses.

Board and Management Accountability

NIS2 Article 20 requires management bodies to approve and oversee cybersecurity risk-management, undergo training, and accept personal accountability for failures. AI governance needs a board view integrated with cyber, not a parallel programme.

High-Stakes AI Use Cases

Each use case is tagged with the AI Act gates it triggers. The Regulation runs four independent checks (Article 5 prohibitions, Annex III or Article 6 high-risk, Article 50 transparency, and Chapter V GPAI obligations) and the duties stack. A single system can hit several gates at once.

Untargeted scraping of subscriber facial images for identity databases

Article 5(1)(e) prohibits AI systems built by untargeted scraping of facial images from the internet or CCTV to create or expand facial recognition databases. Applies to identity-verification and fraud-analytics products built on scraped imagery.

AI as a safety component of critical digital infrastructure

On the common interpretation of Annex III Part 2, network-automation AI whose outputs significantly influence operational decisions is high-risk, with full conformity, documentation, monitoring, and human-oversight obligations.

Behavioural fraud and SIM-swap detection

No AI Act gate triggered in typical deployments. GDPR Article 22, consumer protection, and identity-wallet obligations still raise the bar. Bias testing and human-review records are increasingly expected.

Customer analytics and churn modelling

No AI Act gate triggered in typical analytics deployments. GDPR, ePrivacy, and the EDPB Opinion 28/2024 on AI-model data protection still apply, and exposure rises where inferences affect customer pricing or service suitability decisions.

Generative AI customer service agents

Article 50 transparency applies: customers must be told they interact with AI and synthetic content must be labelled. Chapter V GPAI duties sit on the model provider. Consumer protection and complaint-handling rules still apply in full.

Internal productivity and dev-tooling AI

No AI Act gate triggered when outputs do not feed customer or network decisions. ISO/IEC 42001 governance and NIS2 supply-chain considerations still apply where SaaS is involved.
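The gate tagging above can be sketched as a small lookup table. This is an illustrative sketch only: the `Gate` enum, the use-case keys, and `gates_for` are hypothetical names, and the tags simply restate the typical-deployment readings given above rather than a legal determination for any specific system.

```python
from enum import Enum


class Gate(Enum):
    """The four independent AI Act checks described above."""
    PROHIBITED = "Article 5"
    HIGH_RISK = "Annex III / Article 6"
    TRANSPARENCY = "Article 50"
    GPAI = "Chapter V"


# Hypothetical use-case keys; tags mirror the typical readings listed above.
USE_CASE_GATES = {
    "facial_image_scraping": frozenset({Gate.PROHIBITED}),
    "network_safety_component": frozenset({Gate.HIGH_RISK}),
    "fraud_sim_swap_detection": frozenset(),
    "churn_modelling": frozenset(),
    # GPAI duties sit upstream on the model provider, but are tracked here
    # so the operator knows which supplier evidence to request.
    "genai_customer_service": frozenset({Gate.TRANSPARENCY, Gate.GPAI}),
    "internal_dev_tooling": frozenset(),
}


def gates_for(use_case: str) -> list[str]:
    """Return the names of all gates a use case triggers, in enum order."""
    triggered = USE_CASE_GATES[use_case]
    return [gate.name for gate in Gate if gate in triggered]


if __name__ == "__main__":
    for name in USE_CASE_GATES:
        print(f"{name}: {gates_for(name) or 'no AI Act gate'}")
```

Storing a set per use case, rather than a single label, reflects the point that the duties stack: one system can carry several gates at once.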

How Modulos Solves It

A single governance graph covering every obligation above, so controls written for one framework earn credit across the rest.

One graph for AI Act, NIS2, ENISA, GDPR, and ISO 42001

Network, fraud, customer, and generative AI share a single set of controls and evidence. A control written for NIS2 Article 21 also covers the AI Act technical documentation and ISO certification. No duplicate programmes.
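The idea of one control earning credit across several frameworks can be illustrated with a plain mapping. This is a minimal sketch, not a description of how the Modulos platform is implemented: the control IDs, requirement labels, and function names are all hypothetical.

```python
from collections import defaultdict

# Hypothetical control IDs mapped to (framework, requirement) pairs.
CONTROL_MAP = {
    "CTRL-RISK-01": {
        ("NIS2", "Article 21 risk-management measures"),
        ("EU AI Act", "technical documentation"),
        ("ISO/IEC 42001", "certification evidence"),
    },
    "CTRL-LOG-02": {
        ("EU AI Act", "logging"),
        ("NIS2", "Article 21 risk-management measures"),
    },
}


def frameworks_covered(control_id: str) -> list[str]:
    """List the frameworks a single control contributes evidence to."""
    return sorted({framework for framework, _ in CONTROL_MAP[control_id]})


def controls_by_framework() -> dict[str, list[str]]:
    """Invert the map: for each framework, which controls supply evidence."""
    index: dict[str, list[str]] = defaultdict(list)
    for control_id in sorted(CONTROL_MAP):
        for framework in frameworks_covered(control_id):
            index[framework].append(control_id)
    return dict(index)


if __name__ == "__main__":
    print(frameworks_covered("CTRL-RISK-01"))
    print(controls_by_framework())
```

Inverting the map per framework is what lets an auditor for one regime see exactly which shared controls back their requirements, without a duplicate programme.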

Live AI inventory with Annex III classification

Each AI system carries safety-component classification, supplier, model version, and the technical documentation the Act and NRAs are starting to ask for. Supervisor requests are answered in hours, not weeks.

Structured NIS2 reporting with AI triggers

24-hour, 72-hour, and one-month reporting runs as a single workflow. Model drift, data poisoning, and RAN-automation anomalies are first-class incident types, not an afterthought.
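As a rough illustration, the three reporting clocks can be computed from a single timestamp. The sketch below assumes the 24-hour and 72-hour clocks start when the operator becomes aware of the incident and that the one-month clock starts at submission of the incident notification; those starting points, and the names `nis2_deadlines` and `ReportingDeadlines`, are assumptions to check against the national transposition that applies.

```python
import calendar
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


def add_one_month(ts: datetime) -> datetime:
    """Return the same day next month, clamped to that month's last day."""
    year = ts.year + ts.month // 12
    month = ts.month % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return ts.replace(year=year, month=month, day=min(ts.day, last_day))


@dataclass(frozen=True)
class ReportingDeadlines:
    early_warning: datetime
    incident_notification: datetime
    final_report: datetime


def nis2_deadlines(
    aware_at: datetime, notified_at: Optional[datetime] = None
) -> ReportingDeadlines:
    """Compute the three deadlines for one incident.

    Assumption: if the notification has not been submitted yet, the final
    report is projected from the 72-hour notification deadline.
    """
    notification_due = aware_at + timedelta(hours=72)
    final_anchor = notified_at or notification_due
    return ReportingDeadlines(
        early_warning=aware_at + timedelta(hours=24),
        incident_notification=notification_due,
        final_report=add_one_month(final_anchor),
    )


if __name__ == "__main__":
    aware = datetime(2025, 1, 30, 9, 0, tzinfo=timezone.utc)
    print(nis2_deadlines(aware))
```

Timezone-aware timestamps are used so that deadlines stay unambiguous when the incident spans operations in several member states.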

Article 20 management body trail

Training, approvals, and oversight records are produced alongside AI governance rather than bolted on in parallel. Personal accountability for board members is demonstrable, not improvised at inspection.

FAQ

Yes, telecom operators are in scope of NIS2. Providers of public electronic communications networks and publicly available electronic communications services are listed as Annex I essential entities. Under Article 2(2)(b) NIS2, small and micro providers are also in scope regardless of size, because cybersecurity of public communications is considered critical in its own right. NIS2 repealed Articles 40 and 41 of the EECC in October 2024, so security obligations for telecoms now flow directly from NIS2.

Further reading

Deeper context, platform pages, and framework documentation from docs.modulos.ai.

- AI governance platform: How Modulos ties EU AI Act, NIS2, GDPR, and ENISA evidence into one graph.

- Guide to AI governance: The practice, the frameworks, and how to stand up a governance programme.

- NIS2 compliance: Annex I essential-entity obligations, Article 20 board duties, and 24-hour reporting.

- EU AI Act framework documentation: Technical guidance on conformity assessment and CE marking for high-risk network AI.

- ISO 42001 documentation: Clauses 4 to 10 mapped to Modulos controls, reusable under NIS2 Article 21.

Ready to Bring Telecom AI Into a Single Governance Graph?

See how Modulos maps the EU AI Act, NIS2, ENISA guidance, GDPR, and ISO/IEC 42001 into one governance graph built for network operators, ISPs, and MNOs with live AI in production.