For compliance, risk, and product leaders

AI Governance by Industry

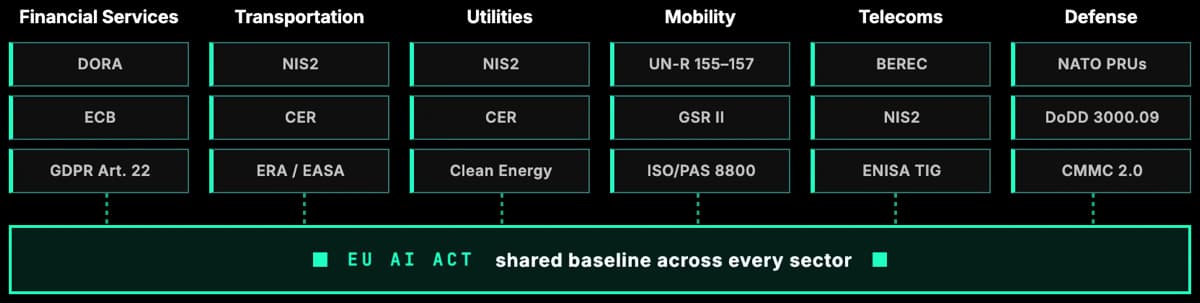

The EU AI Act is the primary driver of AI governance work in every regulated sector. How it lands depends on the industry: which Annex III category applies, which sector regulation layers on top, and which ISO or NIST control set gives you audit-grade evidence. Each industry page below explains exactly that.

Working With Key Players Across Regulated Sectors

Six industries where AI governance is already a board-level topic. Pick your sector to see the applicable EU AI Act article, the sector rules that stack on top, and the AI use cases driving regulator attention.

Financial Services

Annex III point 5 makes credit scoring and life and health insurance pricing high risk under the EU AI Act. DORA, ECB model risk guidance, and ISO/IEC 42001 layer on top.

Transportation

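Annex III point 2 treats AI used as a safety component in road traffic management as high risk, while rail, air, and maritime systems are reached through the product legislation listed in Annex I. NIS2 and the CER Directive add resilience duties on top.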

Utilities

Grid AI for electricity, gas, water, and heating is high risk under Annex III. NIS2 adds essential-entity cyber duties and the CER Directive adds physical resilience.

Mobility

Article 6 ties the EU AI Act to UN Regulations 155, 156, and 157 and the General Safety Regulation. ADAS, autonomous driving, and driver monitoring are high risk by design.

Telecommunications

Annex III point 2 reaches network AI used as a safety component of critical digital infrastructure. BEREC, ENISA, and NIS2 add sector-specific obligations.

Defense & National Security

Article 2(3) carves out exclusively military AI, but Recital 24 pulls dual-use and civilian lines back in. NATO PRUs and ISO/IEC 42001 cover the rest.

Why One Governance Graph Beats Six Programmes

The EU AI Act sets the horizontal baseline, but every regulated sector adds its own layer. A bank running an AI underwriting model reconciles the AI Act high-risk regime with DORA, ECB model risk expectations, and GDPR. A rail operator runs the same AI Act obligations against NIS2, the CER Directive, and safety rules from the European Union Agency for Railways. A mobility OEM runs UN Regulation 155, 156, and 157 alongside the AI Act and ISO/PAS 8800.

The cost of treating each regulation as its own programme, rather than running AI governance as a single cross-cutting one, is duplicate work. Each team ends up maintaining its own spreadsheet of controls, its own incident playbook, and its own evidence store. Modulos is built on a single governance graph, so a control written once for ISO/IEC 42001 also serves the EU AI Act, DORA, NIS2, and sector guidance. Everything traces back to the original requirement.
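For readers who think in data structures, the idea of one control serving several frameworks can be sketched as a many-to-many mapping between controls and requirements. This is an illustrative sketch only, not how Modulos implements its graph. The class names, control ID, and requirement labels are all hypothetical placeholders, not real clause citations.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Requirement:
    framework: str  # e.g. "EU AI Act"
    reference: str  # placeholder label, NOT a real clause citation


@dataclass
class GovernanceGraph:
    """Hypothetical many-to-many map from control IDs to requirements."""

    edges: dict = field(default_factory=dict)  # control_id -> set[Requirement]

    def link(self, control_id: str, requirement: Requirement) -> None:
        # Record that this control provides evidence for the requirement.
        self.edges.setdefault(control_id, set()).add(requirement)

    def frameworks_served(self, control_id: str) -> list:
        # Every framework a single control contributes to.
        return sorted({r.framework for r in self.edges.get(control_id, set())})

    def controls_for(self, framework: str) -> list:
        # Trace back from a framework to the controls that cover it.
        return sorted(
            cid
            for cid, reqs in self.edges.items()
            if any(r.framework == framework for r in reqs)
        )


graph = GovernanceGraph()
# One control, written once, linked to placeholder requirements in four frameworks.
for fw in ("ISO/IEC 42001", "EU AI Act", "DORA", "NIS2"):
    graph.link("CTRL-001", Requirement(fw, "placeholder-ref"))

print(graph.frameworks_served("CTRL-001"))
print(graph.controls_for("DORA"))
```

The point of the sketch is the direction of the lookup: a team asks which frameworks an existing control already covers before writing a new one, instead of keeping a separate control list per regulation.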

The industry pages below describe the specific regulatory pressure, the AI use cases most likely to be classified high-risk, and the governance pattern that actually works in that sector. They are starting points for AI governance strategy, board briefings, and vendor conversations, not a substitute for legal advice.

Map AI Governance to Your Industry

See how Modulos models sector-specific obligations, controls, and evidence in a single governance graph, so the EU AI Act, DORA, NIS2, and ISO/IEC 42001 stop competing for attention.