Is the EU AI Act Delayed? 2026 Status Check

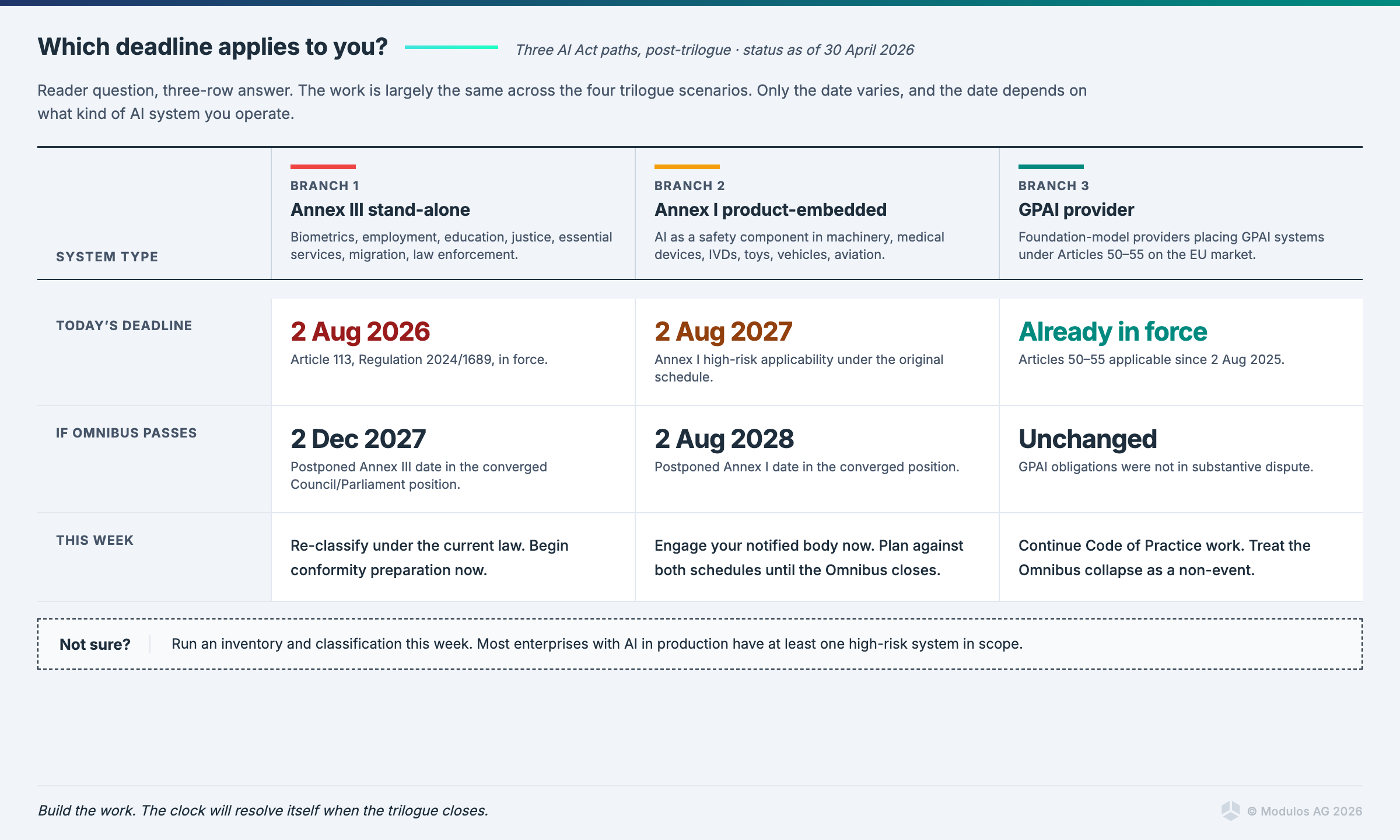

No, not yet, and not automatically. The EU AI Act's 2 August 2026 high-risk deadline remains legally in force after the Digital Omnibus trilogue failed on 28 April 2026. A delay is still likely, but it is no longer certain, and it will not happen by default. If you run a compliance program, the deadline you should plan against is the one already in the law, not the one the Omnibus might eventually replace it with.

What is the EU AI Act August 2026 deadline?

The EU AI Act (Regulation 2024/1689) came into force on 1 August 2024 and applies in stages. The headline date for compliance teams is 2 August 2026. On that day, obligations on high-risk AI systems under Annex III, transparency obligations under Article 50, the public registration database under Article 49, and a wide set of enforcement powers all become applicable. For most enterprises, this is the day the AI Act stops being a planning exercise and starts being a regulatory reality.

The Modulos documentation has a step-by-step readiness guide covering role identification, classification, technical documentation, conformity assessment, and post-market monitoring. The fastest first step is mapping your AI portfolio against the high-risk classification rules currently in force.

Why the Omnibus was supposed to delay it

In November 2025, the European Commission proposed the Digital Omnibus, a simplification package that would amend the AI Act and adjacent files (GDPR, Data Act, e-Privacy Directive). The political bargain on the AI portion was straightforward: industry and member states said the August 2026 deadline was unworkable without harmonised standards, and the Commission proposed a postponement.

By March 2026, both the Council and Parliament had broadly converged on fixed new dates: 2 December 2027 for stand-alone Annex III systems, and 2 August 2028 for AI embedded in products under Annex I. Other elements were also agreed: a streamlined registration database, a prohibition on non-consensual AI-generated intimate imagery, and a broader legal basis for bias detection. Most of the substantive shape of the deal was visible going into the 28 April session, which was expected to confirm it.

What happened in the 28 April trilogue

After roughly 12 hours of negotiation, Council and Parliament failed to converge on one file: the conformity-assessment architecture for AI in regulated products under Annex I. That file covers AI as a safety component in machinery, medical devices, in-vitro diagnostics, toys, and other products already governed by sectoral safety law. The disagreement was over whether Section A products should move to Section B, shifting conformity assessment to the sectoral track. Parliament's negotiating position pushed for the move, Council resisted, and the institutions could not bridge the gap.

The press conference scheduled for the following morning was cancelled, which was itself a signal: there was no clean institutional line to communicate. A follow-up trilogue is provisionally scheduled for around 13 May 2026.

For the full breakdown of what was agreed before the collapse, what the Section A versus Section B question actually means, and the political dynamics around the Annex I file, see our news analysis from 29 April.

Will the EU AI Act be delayed before 2 August?

There are four plausible paths from here, with rough probability weights based on what is publicly known about the institutional dynamics around the Annex I file.

Scenario 1: Quick recovery on 13 May (~30%). The follow-up trilogue closes with a face-saving compromise on the Annex I architecture. This is most likely a partial split in which some product categories stay in Section A and others move to Section B, or a reference to delegated acts that defers the architecture question. The deal is signed and published in the Official Journal in July, and the deadlines apply as previously agreed (2 December 2027 for Annex III, 2 August 2028 for Annex I).

Scenario 2: Late delay under the Irish Presidency (~25%). The 13 May trilogue does not close. The Cypriot Presidency ends on 30 June 2026 without a deal, Ireland takes over the Council Presidency on 1 July, and a compromise is reached during the summer. The original 2 August 2026 deadline passes with no postponement legally in force, and the Commission likely issues forbearance or transitional guidance to manage the gap.

Scenario 3: Split deal (~15%). The Annex I file is carved off into a separate legislative track. The rest of the Omnibus, including the agreed Annex III postponement, registration changes, GPAI elements, and the deepfake ban, passes on the previously-converged terms. Industrial AI is handled separately, possibly via a Commission delegated act.

Scenario 4: No deal before 2 August (~30%). This was a 10% tail risk before the trilogue. After the cancelled press conference, the public political fracture, and the underlying institutional positions, it is now a real 30% scenario. The original AI Act high-risk obligations apply on schedule, with no harmonised standards in place and limited notified body capacity. The Commission would face significant pressure to issue transitional guidance, but compliance teams would be operating under the original Regulation 2024/1689 timeline.

In scenarios 1 through 3, the substantive obligations end up looking similar to what was already agreed before the collapse. In scenario 4, the substantive obligations are exactly what the AI Act says today. What varies across the four scenarios is the date by which compliance must be demonstrated, and the enforcement environment between now and then. The work compliance teams need to do does not change much across these scenarios; the clock does.
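The four weights above can be tallied mechanically. The sketch below takes the article's rough percentages at face value. The scenario keys and the boolean flag are our own illustrative labels, not an official model. Scenario 3 is marked as unknown because its timing is not stated.

```python
from typing import Optional

# Rough weights (percent) from the four scenarios above. The flag records
# whether the scenario text says 2 August 2026 passes with NO postponement
# legally in force; None means the text does not say (scenario 3's timing).
# Keys and flags are illustrative labels, not an official model.
SCENARIOS: dict[str, tuple[int, Optional[bool]]] = {
    "1_quick_recovery": (30, False),
    "2_late_deal": (25, True),
    "3_split_deal": (15, None),
    "4_no_deal_before_2_aug": (30, True),
}


def total_weight(scenarios: dict[str, tuple[int, Optional[bool]]]) -> int:
    """Sum all weights; a sanity check that they cover the outcome space."""
    return sum(weight for weight, _ in scenarios.values())


def weight_original_deadline_bites(scenarios: dict[str, tuple[int, Optional[bool]]]) -> int:
    """Sum weights of scenarios where 2 August 2026 explicitly passes unpostponed."""
    return sum(weight for weight, flag in scenarios.values() if flag is True)


if __name__ == "__main__":
    print(total_weight(SCENARIOS))                    # 100
    print(weight_original_deadline_bites(SCENARIOS))  # 55
```

On the article's own numbers, two scenarios explicitly let 2 August 2026 pass with no postponement in force. Together they carry roughly 55% of the weight, so more than half of the modelled outcomes leave the current deadline in place.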

What should compliance teams do right now?

This week. Inventory your AI systems against the Annex III classification rules in force today, not the version a successful Omnibus would have produced. If you classified anything as out-of-scope on the assumption that the package would narrow the scope, re-check that classification now. Identify your single point of accountability for AI Act readiness, name the person in writing, and confirm the provider versus deployer role assignment for each system in scope.
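Here is a minimal inventory sketch for this week's re-check. It assumes a simple in-house record per system. The `AISystem` fields, the `descoped_assuming_omnibus` flag and the short area labels are all hypothetical. The eight Annex III areas follow the summary in this article's FAQ, and real classification still needs legal review of each use case.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"


# Hypothetical short labels for the eight Annex III areas.
ANNEX_III_AREAS = frozenset({
    "biometrics", "critical_infrastructure", "education", "employment",
    "essential_services", "law_enforcement", "migration", "justice",
})


@dataclass
class AISystem:
    name: str
    role: Optional[Role] = None              # None: role not yet confirmed
    annex_iii_area: Optional[str] = None     # None: no Annex III use case found
    descoped_assuming_omnibus: bool = False  # excluded on the Omnibus assumption?


def needs_attention(system: AISystem) -> list[str]:
    """List the re-check actions this system needs under the law in force today."""
    issues = []
    if system.role is None:
        issues.append("confirm provider/deployer role")
    if system.annex_iii_area is not None and system.annex_iii_area not in ANNEX_III_AREAS:
        issues.append(f"unrecognised Annex III area: {system.annex_iii_area}")
    if system.descoped_assuming_omnibus:
        issues.append("re-check classification against current Annex III")
    return issues


def portfolio_report(systems: list[AISystem], accountable_owner: Optional[str]) -> dict[str, list[str]]:
    """Collect open actions per system, plus a programme-level owner check."""
    report = {s.name: needs_attention(s) for s in systems}
    report = {name: issues for name, issues in report.items() if issues}
    if not accountable_owner:
        report["<programme>"] = ["name a single accountable owner in writing"]
    return report
```

Systems with no open actions drop out of the report. What remains is this week's to-do list.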

This month. Run a gap assessment against AI Act Articles 9 through 15 for any system that is high-risk under the current law: risk management, data governance, technical documentation, record-keeping, transparency, human oversight, accuracy, robustness, cybersecurity. A structured risk management approach is the foundation; documentation pipelines plug in from there. For Annex I products specifically, engage your notified body now. Capacity is already constrained, and the post-collapse environment makes the queue worse, not better. The conformity assessment and CE marking documentation covers which path applies to which system type.
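A gap assessment against Articles 9 through 15 reduces, at its simplest, to checking each requirement for recorded evidence. The sketch below is a starting checklist under that assumption. The evidence names are hypothetical placeholders for whatever your own document repository holds. A real assessment also has to judge whether the evidence is adequate, not merely present.

```python
# Articles 9-15 of Regulation 2024/1689 and the topics listed above.
REQUIREMENTS: dict[int, str] = {
    9: "risk management",
    10: "data governance",
    11: "technical documentation",
    12: "record-keeping",
    13: "transparency",
    14: "human oversight",
    15: "accuracy, robustness, cybersecurity",
}


def gap_assessment(evidence: dict[int, list[str]]) -> list[str]:
    """Return each Article 9-15 requirement that has no evidence recorded.

    `evidence` maps an article number to the documents collected for it.
    An empty list counts as a gap, just like a missing key.
    """
    return [
        f"Article {article}: {topic}"
        for article, topic in sorted(REQUIREMENTS.items())
        if not evidence.get(article)
    ]


# Hypothetical evidence for one high-risk system.
example = {9: ["risk-register-v2"], 11: ["technical-file-draft"], 14: []}
for gap in gap_assessment(example):
    print(gap)
```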

This quarter. Build the registration documentation pipeline. The Article 49 registration database survives in all four scenarios, so building toward it is robust to any outcome. Begin synthetic-content disclosure engineering, including UI labels, metadata embedding, and detection at the edge, which will be required regardless of when Article 50 obligations bite. Anchor your AI governance program against ISO 42001, which gives you scenario-independent maturity that holds whatever clock the trilogue eventually settles on. Compliance teams managing multiple frameworks (EU AI Act plus ISO 42001 plus NIST AI RMF) should consider centralising into a single evidence pipeline rather than running parallel processes.
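One way to picture a single evidence pipeline is an index. Each piece of evidence is collected once and tagged with every framework reference it supports. This is a minimal sketch: the `Evidence` type and the evidence IDs are hypothetical. The cross-framework tags are illustrative assumptions to be validated against the standards themselves. For example, the sketch pairs AI Act Article 9 with ISO/IEC 42001 clause 6.1 and the NIST AI RMF MANAGE function.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Evidence:
    evidence_id: str
    description: str
    # Tags in "framework:reference" form; mappings are illustrative assumptions.
    maps_to: set[str] = field(default_factory=set)


def index_by_framework(items: list[Evidence]) -> dict[str, list[str]]:
    """Group evidence IDs by framework so each item is reused, not duplicated."""
    index: dict[str, set[str]] = defaultdict(set)
    for item in items:
        for tag in item.maps_to:
            framework, _, _ = tag.partition(":")
            index[framework].add(item.evidence_id)
    return {framework: sorted(ids) for framework, ids in sorted(index.items())}


items = [
    Evidence("EV-001", "AI risk register",
             {"eu_ai_act:art9", "iso42001:6.1", "nist_ai_rmf:MANAGE"}),
    Evidence("EV-002", "Event logging design", {"eu_ai_act:art12"}),
]
print(index_by_framework(items))
```

The design choice is that the framework is a view over the evidence, not a separate process. Adding a new regime, or a revised Omnibus timeline, means adding tags rather than re-collecting documents.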

Do the work that is robust to all four scenarios, and stop optimising for the one outcome you hoped would happen.

Frequently asked questions

Is the EU AI Act in force right now? Yes. The Regulation entered into force on 1 August 2024, but its obligations apply in stages. Article 5 prohibitions and the AI literacy requirement under Article 4 became applicable on 2 February 2025. General-purpose AI obligations under Articles 51 to 55 became applicable on 2 August 2025. Most other obligations become applicable on 2 August 2026, including high-risk system requirements and the Article 50 transparency obligations.

Has the EU AI Act been delayed? No. As of 30 April 2026, no delay has been adopted. The Digital Omnibus would have postponed key deadlines, but its trilogue failed on 28 April. Until a revised Omnibus is agreed and published in the Official Journal, the original deadlines in Regulation 2024/1689 apply.

When does the EU AI Act apply to high-risk AI systems? Currently, 2 August 2026 for stand-alone Annex III high-risk systems, and 2 August 2027 for AI embedded as a safety component in products under Annex I. The Omnibus would have shifted these to 2 December 2027 and 2 August 2028 respectively. Whether those dates apply depends on whether the Omnibus passes before the original deadlines.
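The date logic in this answer can be written as a small lookup. This sketch assumes a simple yes/no view of whether the Omnibus is in force in time. It deliberately ignores transitional guidance and partial outcomes such as the split deal in scenario 3. The dictionary keys and function names are hypothetical.

```python
from datetime import date

# High-risk application dates as stated in this article.
# "current": Regulation 2024/1689 as adopted.
# "omnibus": dates the co-legislators had converged on; NOT adopted law.
HIGH_RISK_DATES = {
    "annex_iii": {"current": date(2026, 8, 2), "omnibus": date(2027, 12, 2)},
    "annex_i": {"current": date(2027, 8, 2), "omnibus": date(2028, 8, 2)},
}


def applicable_date(route: str, omnibus_in_force: bool = False) -> date:
    """Return the high-risk application date for an Annex route."""
    return HIGH_RISK_DATES[route]["omnibus" if omnibus_in_force else "current"]


def days_remaining(route: str, today: date, omnibus_in_force: bool = False) -> int:
    """Days from `today` until the applicable date (negative once passed)."""
    return (applicable_date(route, omnibus_in_force) - today).days


# Planning default: assume no Omnibus until it is published in the Official Journal.
print(days_remaining("annex_iii", date(2026, 4, 30)))  # 94
```

Defaulting `omnibus_in_force` to `False` mirrors the article's advice: plan against the deadline already in the law.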

What is the difference between the AI Act and the AI Omnibus? The AI Act is the regulation in force. The AI Omnibus is a proposed amendment package that would simplify and postpone parts of the AI Act. The Omnibus has not been adopted. Until it is, only the AI Act applies.

When will the next AI Omnibus trilogue happen? A follow-up political trilogue is provisionally scheduled for around 13 May 2026, roughly two weeks after the failed 28 April session. The Cypriot Council Presidency will attempt to close the file before its term ends on 30 June 2026. If it does not, the Irish Presidency takes over on 1 July 2026 and may continue negotiations.

What is high-risk AI under the EU AI Act? High-risk classification has two routes. Annex I covers AI as a safety component in products already regulated under EU sectoral safety law (machinery, medical devices, toys, vehicles, aviation, etc.). Annex III covers stand-alone AI systems in defined high-risk use cases including biometrics, critical infrastructure, education, employment, essential services, law enforcement, justice, and migration.

What does the AI Omnibus failure mean for general-purpose AI providers? Largely no change. GPAI obligations under Articles 51 to 55 were not in substantive dispute and continue on their current schedule. The Code of Practice on synthetic content, which supports the Article 50 transparency obligations, is expected to be finalised in May or June 2026. GPAI providers should treat the Omnibus collapse as a non-event for their compliance roadmap.

What should a compliance team do this week if they were planning around the Omnibus delay? Re-anchor against the original 2 August 2026 deadline. Re-classify any AI systems you de-scoped on the assumption of a narrower Annex III. Confirm provider/deployer roles. Engage notified bodies now if you have Annex I products in scope. Documentation pipelines, registration preparation, and conformity readiness should all proceed against the original Regulation 2024/1689 schedule until a revised Omnibus is adopted.

The bottom line

The regulatory target is unstable. Build evidence once, map it to the current AI Act, the delayed AI Act, sectoral regimes, and any Omnibus scenario. Whichever clock the institutions settle on, the underlying compliance work is largely the same. We help organisations build that evidence base in a way that is robust to outcome rather than optimised for any single scenario.

If you want a practical AI governance program that holds across the four scenarios above, book a 30-minute conversation with our team. The next trilogue will resolve whatever it resolves. Your portfolio readiness should not depend on it.
