AI governance tools in 2026: one category is splitting in two

AI governance tools are being redefined in real time. Underneath the marketing, the category is splitting in two.

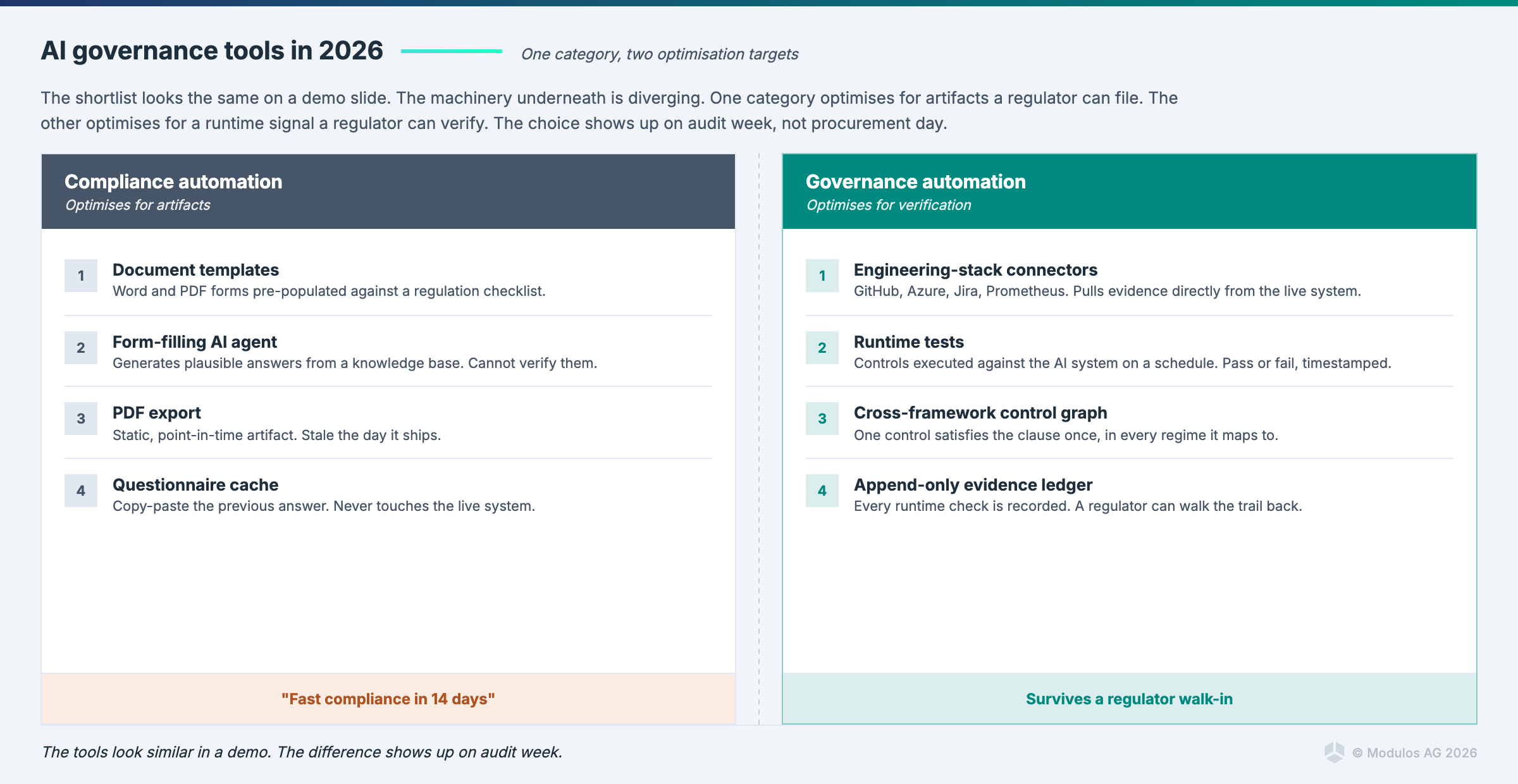

On one side, compliance automation: template generators, form-filling agents, dashboard wrappers, fast-compliance-in-days pitches. The output is a polished artifact and the platform has no way of knowing whether the underlying claim is true. On the other side, governance automation: systems that connect to your GitHub, your Azure tenant, your Jira, inspect what is actually deployed, reason across frameworks simultaneously, and produce evidence that survives an audit.

Both call themselves "AI governance platforms." Only one of them will survive contact with a regulator.

This post is the buyer's guide that names the split and gives you the evaluation criteria that separate the two sides. Six criteria, a 30-minute vendor stress test, and one sharp opinion per non-negotiable. It assumes you have already decided what your framework needs to do. If you have not, start with the AI governance framework post and come back.

What an AI governance tool is actually for

If you strip the category to its job description, an AI governance tool has three responsibilities.

Evidence collection. The tool must make it cheaper and more reliable to produce the evidence that proves your controls are operating. If the tool's evidence workflow is "humans upload screenshots", it is a filing cabinet with a marketing budget.

Risk quantification. The tool must express the risk associated with each AI system in terms that let you prioritise treatment. That means monetary impact. 5x5 matrices are not quantification and they cannot tell you whether a risk is worth 10x more treatment effort than another one.

Control mapping across frameworks. The tool must allow one control to satisfy requirements from multiple frameworks simultaneously. If you implement Article 9 risk management once and the platform makes you do it again for ISO 42001, the platform is selling duplicated work as a feature.

If a tool does not do all three, it is not an AI governance tool. It is an adjacent product pretending to be one.

Why the category is confused right now

The confusion is partly structural, partly deliberate.

Products built for AI operations monitoring (ML ops, model drift detection) are marketing themselves as "AI governance platforms." They are useful inputs to governance, but they do not govern. They tell you your model's performance is degrading. They do not map that observation to Article 15 of the EU AI Act or produce the evidence record your auditor asks for.

Products built for vendor risk management (third-party risk assessments, security questionnaires) are marketing themselves as "AI governance platforms." They assess external vendors' AI systems. They do not run your own AI governance program.

Products built for generic GRC (governance, risk, and compliance) have bolted on "AI modules" that let you tick boxes against the EU AI Act. These are filing systems for AI requirements. They do not connect to your engineering stack, they do not run tests, they do not quantify risk in money.

And then there are the compliance automation platforms. These are the most dangerous, because they look the most like the thing you want. Templates that produce polished-looking AI policies. Agents that fill in questionnaires. Dashboards that turn green. "AI Act compliance in 14 days" on the homepage.

The category looks confused because adjacent products are all claiming the same label. The split itself is not a marketing claim. It is a structural distinction between products that generate artifacts and products that investigate your deployment. Buyers who can tell the two sides apart will make good decisions. Buyers who cannot will find out the hard way when a supervisor visits.

Compliance automation vs governance automation: the category split

The defining question is this: does the platform generate artifacts, or does it investigate your actual deployment?

An artifact generator produces documents. It takes a template, fills in your company name and a few details, and outputs a PDF that looks like an AI policy, a risk register, or a conformity assessment. The platform is optimised for speed of document production. Quality of the underlying claim is not something the platform can verify, because the platform has no connection to your actual systems.

A deployment investigator connects to your environment. It knows what models are running, what agents are deployed, what data they have access to, what they have done in the last 24 hours. It runs tests against governance-relevant properties. It produces evidence records that reference real events in real systems. The platform is optimised for whether the claim is true.

The hard problem of governance has always been verification, not document generation. Every regulator, auditor, and supervisor Modulos has spoken to in the last 18 months has said the same thing in slightly different words: documents are easy to produce and easy to falsify, evidence is hard. The platforms that survive the next three years will be the ones that solved the hard problem.

Six evaluation criteria that separate platforms from checklists

These are the criteria worth putting in your RFP. Each is paired with a concrete test a vendor must pass before the conversation continues.

1. Multi-framework shared control graph

The criterion. Do shared controls produce shared evidence across frameworks, or do they duplicate work?

The concrete test. Ask the vendor: if I implement Article 9 risk management once in your platform, does the same control count automatically for ISO 42001 Clause 6.1, NIST AI RMF MAP 1.1, and the analogous requirement in DORA? Show me the evidence record, and show it appearing in each of those framework dashboards at once.

What the target answer looks like. A live demo where one control implementation populates evidence across the stack. Modulos supports 13+ frameworks in one graph today: EU AI Act, ISO 42001, NIST AI RMF, GDPR, NIS2, DORA, ISO 27001, ISO 27701, OWASP Top 10 for LLM, OWASP Top 10 for Agentic applications, UAE AI Ethics, MAS FEAT, and Microsoft Supplier Data Protection Requirements. The quantified effect when shared controls actually share evidence is a 40 to 60% reduction in total compliance effort, compared with running each program independently.

Red flag. Vendor says "we map to multiple frameworks" but each control is entered separately per framework. That is a spreadsheet, not a graph.
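To make the graph-versus-spreadsheet distinction concrete, here is a minimal sketch of a shared control: one control object, several framework requirements pointing at it, and evidence attached once. The class name, the control ID, and the evidence label are hypothetical illustrations, not the Modulos data model.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Control:
    control_id: str
    satisfies: list                      # (framework, requirement) pairs
    evidence: list = field(default_factory=list)


def framework_view(controls):
    """Group evidence by framework and requirement, as a dashboard would."""
    view = defaultdict(dict)
    for control in controls:
        for framework, requirement in control.satisfies:
            view[framework].setdefault(requirement, []).extend(control.evidence)
    return {fw: dict(reqs) for fw, reqs in view.items()}


# One control, mapped to three requirements (hypothetical ID).
risk_mgmt = Control(
    control_id="CTRL-RISK-001",
    satisfies=[
        ("EU AI Act", "Article 9"),
        ("ISO 42001", "Clause 6.1"),
        ("NIST AI RMF", "MAP 1.1"),
    ],
)

# Evidence is attached once, to the control, not once per framework.
risk_mgmt.evidence.append("risk-review-2026-Q1")

dashboards = framework_view([risk_mgmt])
```

In this sketch the single evidence item shows up under all three frameworks because the dashboards are derived from the control. In the spreadsheet pattern, the same evidence would have to be entered three times.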

2. Risk quantification in money terms, not colours

The criterion. Can the platform express AI risk as a monetary figure, and does it make that the default?

The concrete test. Show me a risk in your platform, for an AI system of a kind I operate. Tell me the expected loss in euros, and show me how the platform derived it.

What the target answer looks like. A risk module that asks for threat probability, asset value, and impact range, and produces an expected monetary loss. Organisation-level taxonomies configure the impact scales once. Project-level risks inherit the scales and quantify each threat in money.

Red flag. 5x5 matrices. Red / amber / green dashboards. "High / medium / low" as the primary risk language. This is decoration. Supervisors have stopped accepting it. Insurers have stopped accepting it. Your CFO has stopped accepting it. The platform should too.
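A small worked example shows why a euro figure supports prioritisation in a way a colour cannot. The formula below is an assumption chosen for illustration (annual probability times asset value times the midpoint of the impact range); a real platform may use a different model, and the two risks and their numbers are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    annual_probability: float   # chance the threat materialises in a year, 0..1
    asset_value_eur: float      # value exposed to the threat
    impact_low: float           # fraction of asset value lost, best case
    impact_high: float          # fraction of asset value lost, worst case

    def expected_loss_eur(self) -> float:
        # Assumption: use the midpoint of the impact range as the mean impact.
        mean_impact = (self.impact_low + self.impact_high) / 2
        return self.annual_probability * self.asset_value_eur * mean_impact


# Hypothetical risks with invented inputs.
risks = [
    Risk("model drift degrades credit decisions", 0.30, 500_000, 0.1, 0.3),
    Risk("prompt injection exposes customer data", 0.10, 2_000_000, 0.2, 0.6),
]

# Rank by expected loss so treatment effort follows money, not colour.
ranked = sorted(risks, key=lambda r: r.expected_loss_eur(), reverse=True)
for risk in ranked:
    print(f"{risk.name}: EUR {risk.expected_loss_eur():,.0f}")
```

With these inputs the less likely risk ranks first because its exposure is larger. A high / medium / low label hides exactly that comparison.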

3. Agent-aware architecture

The criterion. Can the platform inventory and govern autonomous agents, not just static models?

The concrete test. Show me how your platform represents an agent that has tool access to Jira, memory persistence across sessions, and operational authority to modify issues. Is it a row in the same table as a static classifier, or does the platform have an object model for agent-specific risk? Does it support OWASP Top 10 for Agentic applications as a first-class framework, or only OWASP for LLM?

What the target answer looks like. Agents are inventoried with their tools, their memory configuration, their credentials, and their authority scope. OWASP Top 10 for Agentic applications is a supported framework mapped into the shared control graph. The Nannini, Smith, and Tiulkanov paper (April 2026) set out the legal position clearly: high-risk agentic systems with untraceable behavioural drift cannot currently be placed on the EU market. The platform should reflect that reality in its data model.

Red flag. Vendor treats agents as "advanced AI systems" with no object-model difference from static models. That is 2024 governance on 2026 systems.
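The object-model difference can be sketched in a few lines. Below, a static model and an agent are separate types, and only the agent carries tools, memory configuration, and credentials. All class names, field names, and flag labels are hypothetical; the point is the shape of the inventory record, not any vendor's schema.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ToolGrant:
    tool: str          # e.g. "jira"
    can_write: bool    # operational authority to modify, not just read


@dataclass
class StaticModel:
    name: str
    purpose: str


@dataclass
class Agent:
    name: str
    purpose: str
    tools: list = field(default_factory=list)        # list of ToolGrant
    persistent_memory: bool = False                  # memory across sessions
    credentials: list = field(default_factory=list)  # credential identifiers

    def review_flags(self) -> list:
        """Agent-specific properties a reviewer should see up front."""
        flags = []
        if any(grant.can_write for grant in self.tools):
            flags.append("write-authority")
        if self.persistent_memory:
            flags.append("persistent-memory")
        if self.credentials:
            flags.append("holds-credentials")
        return flags


classifier = StaticModel(name="ticket-classifier", purpose="route support tickets")

triage_agent = Agent(
    name="triage-agent",
    purpose="update and close Jira issues",
    tools=[ToolGrant(tool="jira", can_write=True)],
    persistent_memory=True,
    credentials=["jira-service-account"],
)
```

A single table with one row per "AI system" has nowhere to record the properties `review_flags` relies on, which is why the data-model question is worth asking in the demo.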

4. Regulator-ready reporting and immutable audit trail

The criterion. Can the platform produce a defensible, scoped report for a supervisor, auditor, or customer on demand, with an immutable audit trail behind every control status change, evidence item, and approval?

The concrete test. A regulator sends a 48-hour information request for all high-risk systems, their classifications, the evidence behind their Article 9 risk management, and the review history on each control. Show me the report your platform produces, and show me the audit log for one control as it was updated over the last six months.

What the target answer looks like. Scoped export by framework, by system, by jurisdiction. Every control-status change, every evidence item, and every reviewer captured with a tamper-evident timestamp. Report production measured in minutes, not days. The platform is the system of record for a supervisor visit, not an input into a slide deck the team then assembles by hand. This is also what ISO 42001 Clause 7.5 and EU AI Act Article 12 on record-keeping assume is in place.

Red flag. "You can export the dashboard to PDF" offered as a regulator-ready report. PDFs of dashboards are not audit trails. Ask to see the underlying log and who can modify it.
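One common way to make a log tamper-evident is a hash chain, where each record's hash covers the previous record's hash. The sketch below illustrates that general technique only; it is not a description of how Modulos or any other vendor implements its audit trail, and the entry fields are invented.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record


def _digest(prev_hash: str, entry: dict) -> str:
    # Serialise deterministically so the same entry always hashes the same.
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, entry: dict) -> None:
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"entry": entry, "prev": prev, "hash": _digest(prev, entry)})


def verify(log: list) -> bool:
    """Return True only if no record was altered, removed, or reordered."""
    prev = GENESIS
    for record in log:
        if record["prev"] != prev or record["hash"] != _digest(prev, record["entry"]):
            return False
        prev = record["hash"]
    return True


audit_log = []
append_entry(audit_log, {"control": "Article 9", "status": "in_review",
                         "reviewer": "a.meier", "at": "2026-01-10T09:00:00Z"})
append_entry(audit_log, {"control": "Article 9", "status": "implemented",
                         "reviewer": "a.meier", "at": "2026-02-02T14:30:00Z"})

print(verify(audit_log))
```

Editing any earlier entry changes its hash and breaks every later link, so `verify` fails. That is the property to ask about when a vendor says "immutable": who can modify the log, and how would anyone detect it?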

5. Evidence automation at the source

The criterion. Does the tool collect evidence from your engineering stack automatically, or do humans produce and upload it?

The concrete test. Show me how the platform collects evidence from GitHub when a model is deployed. From Jira when a risk is discussed. From a monitoring system when a performance threshold is breached. How does an engineer's commit become evidence for Article 15 of the EU AI Act?

What the target answer looks like. Project-level sources connect to GitHub, GitLab, Jira, Google Drive, and SharePoint. A second layer, Runtime Inspection, connects to Prometheus, Datadog, GitHub, Azure, and pushed metrics from the Modulos Client. Tests run on a cadence. Results flow back to the controls they satisfy as evidence.

Red flag. Evidence is a file upload field. Manual collection does not scale and does not pass audits.
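The question "how does a commit become evidence?" comes down to a routing step: an event from the engineering stack is matched to the control it supports and stored as a record that points back to the source. The sketch below assumes a simplified, hypothetical event shape (it is not the GitHub or Jira webhook schema), and the routing table is illustrative. Mapping a Jira risk discussion to Article 9 in particular is an assumption made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EvidenceRecord:
    control: str      # the requirement this evidence supports
    source: str       # system the event came from
    reference: str    # pointer back to the real event
    summary: str


# Hypothetical routing table: (source, event kind) -> control.
ROUTES = {
    ("github", "deployment"): "EU AI Act Article 15",
    ("monitoring", "threshold_breach"): "EU AI Act Article 15",
    ("jira", "risk_discussion"): "EU AI Act Article 9",
}


def to_evidence(event: dict) -> Optional[EvidenceRecord]:
    """Turn a stack event into evidence, or None if no control applies."""
    control = ROUTES.get((event["source"], event["kind"]))
    if control is None:
        return None
    return EvidenceRecord(
        control=control,
        source=event["source"],
        reference=event["reference"],
        summary=event["summary"],
    )


# Invented example event.
deploy_event = {
    "source": "github",
    "kind": "deployment",
    "reference": "commit abc1234",
    "summary": "model v2 deployed after robustness tests passed",
}
record = to_evidence(deploy_event)
```

No human uploads anything in this flow. The record exists because the deployment happened, and its `reference` lets an auditor trace it to the originating event.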

6. Human-in-the-loop AI assistance, not replacement

The criterion. Does the platform use AI agents for acceleration, with human approval preserved, or do the agents change compliance status autonomously?

The concrete test. Show me what the agents in your platform do. Can they approve a control status change without a human review step? Do their outputs go through the same review workflow as non-AI work?

What the target answer looks like. Agent outputs are drafts. Every status change goes through the review workflow. Modulos's agents are Scout (a conversational assistant grounded in project context, with connector access to your integrated data sources), the Evidence agent (summarises evidence and suggests control mappings), and the Control assessment agent (produces structured assessment drafts). None of them approves status changes. Humans do. Audit logs preserve the distinction.

Red flag. Vendor describes agents that "automate compliance" in a way that sounds like they take approvals off the human critical path. Walk. The regulator will ask who approved this, and "our AI" is not the answer that keeps the certificate.
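The "agents draft, humans approve" rule can be enforced in the workflow itself rather than left to policy. The sketch below is a hypothetical review object: any actor may propose a status, only a human may approve it, and the log records who did which. Names and status values are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Actor:
    name: str
    is_human: bool


@dataclass
class ControlReview:
    control: str
    status: str = "not_assessed"
    pending: str = ""                       # proposed status awaiting approval
    log: list = field(default_factory=list)

    def propose(self, actor: Actor, new_status: str) -> None:
        # Anyone, including an AI agent, may draft a proposal.
        self.pending = new_status
        self.log.append((actor.name, "proposed", new_status))

    def approve(self, actor: Actor) -> None:
        # Only a human can move a proposal into the official status.
        if not actor.is_human:
            raise PermissionError("only a human reviewer can approve a status change")
        if not self.pending:
            raise ValueError("nothing to approve")
        self.status, self.pending = self.pending, ""
        self.log.append((actor.name, "approved", self.status))


assessment_agent = Actor(name="control-assessment-agent", is_human=False)
reviewer = Actor(name="j.keller", is_human=True)

review = ControlReview(control="EU AI Act Article 9")
review.propose(assessment_agent, "implemented")   # agent drafts
review.approve(reviewer)                          # human approves
```

When the regulator asks who approved a change, the answer is in `review.log`, and it is always a person.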

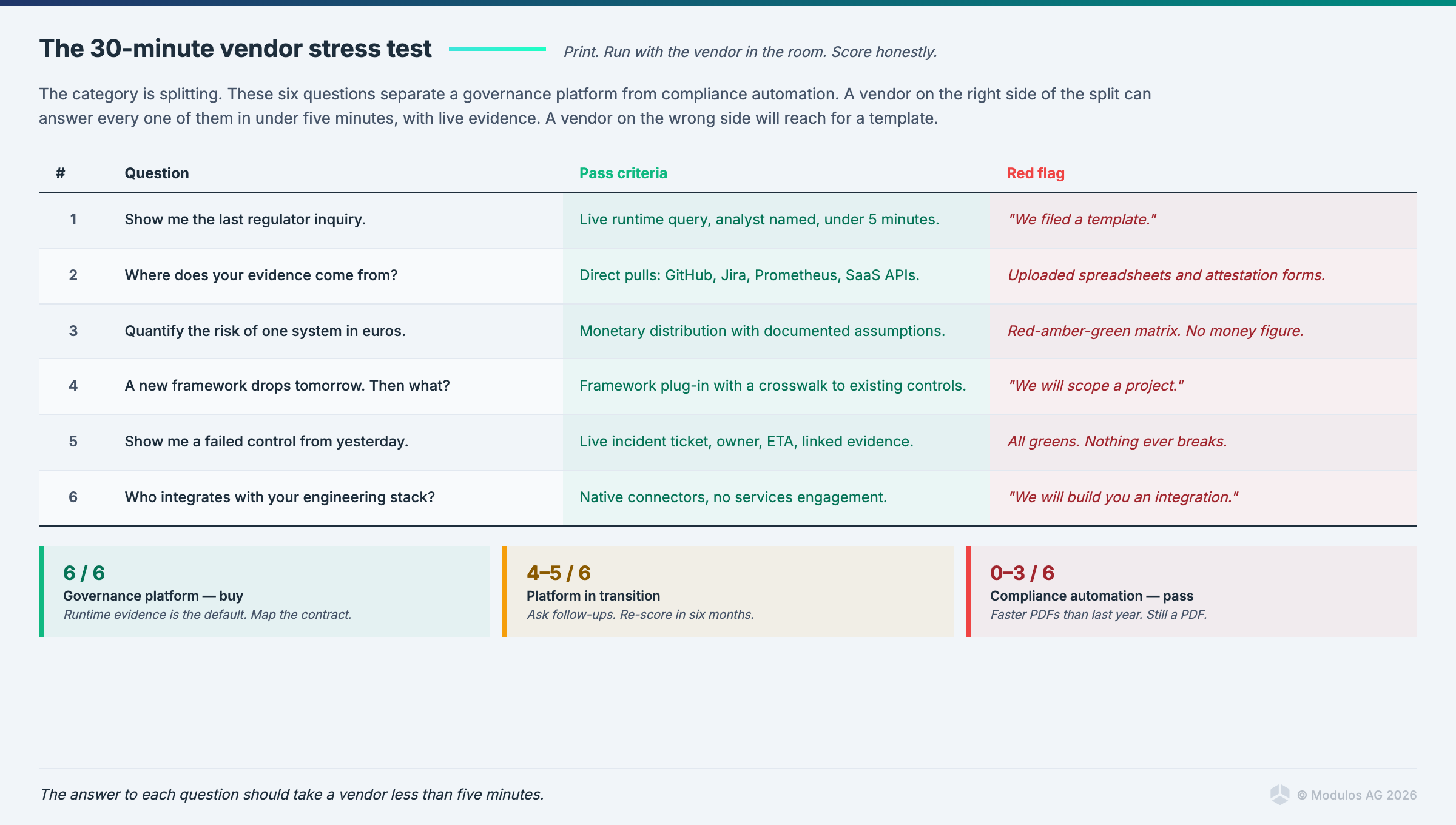

How to stress-test a vendor in 30 minutes

These are the six questions to ask in a first call, after the demo. They are designed to separate demo magic from production-grade governance.

Q1. "Show me the exact evidence artifact your platform would produce for Article 9 of the EU AI Act, for a system of my kind. Not a template. The filled artifact, from a real customer, scrubbed."

Q2. "If I implement one control in your platform, walk me through which framework dashboards update automatically as a result. Demonstrate on a control that spans at least three frameworks."

Q3. "What is the expected monetary loss for a hypothetical risk on system X in my environment? Show me the inputs and show me the derivation."

Q4. "How does your platform inventory and govern an autonomous agent that has tool access and memory? Show me the data model."

Q5. "Describe the workflow when a regulator sends a 48-hour information request. Which reports can your platform produce? How long does it take?"

Q6. "Show me one case where a customer's supervisor audited them using your platform as the system of record. What did the supervisor ask for? What did the platform produce?"

A vendor that can answer all six in a 30-minute call is a governance automation vendor. A vendor that dodges three or more of them is selling compliance automation with a better design team.

The non-negotiables

There are opinions and there are non-negotiables. The non-negotiables are short.

Quantified risk is non-negotiable. If the platform cannot put a euro figure on a risk, it is not a risk management platform. It is a dashboard.

The shared control graph is non-negotiable. If implementing Article 9 once does not automatically count toward the ISO 42001 and NIST mappings, the platform is selling duplicated work as a feature. You will pay for it every time you add a framework.

Control-level mapping is non-negotiable. "Our platform supports the EU AI Act" with no control-level mapping is marketing, not governance. Ask for the mapping. If it is not there, the support is notional.

Where Modulos lands on each criterion

Factually, briefly, and with no adjectives.

- Shared control graph: 13+ frameworks supported in one graph. One control can satisfy many requirements simultaneously.

- Monetary risk quantification: built into the risk module. Organisation-level taxonomies define impact scales. Project-level risks express threats in money.

- Agent-aware: OWASP Top 10 for Agentic applications is a supported framework. Agents are inventoried as first-class objects.

- Regulator-ready reporting and audit trail: scoped exports by framework, system, and jurisdiction. Every control-status change, evidence item, and reviewer captured in an immutable audit log. Supports the record-keeping assumptions of ISO 42001 Clause 7.5 and EU AI Act Article 12.

- Evidence at the source: project-level integrations for GitHub, GitLab, Jira, Google Drive, SharePoint. Runtime Inspection for Prometheus, Datadog, GitHub, Azure, pushed metrics from the Modulos Client.

- Human-in-the-loop AI: Scout, Evidence agent, Control assessment agent. Agents draft. Humans approve through the review workflow.

External credentials. Xayn (first ISO 42001 certification in Germany, four weeks via SGS). CertX product conformity attestation. CAISI consortium WG1 and WG5. EU AI Office GenAI Code of Practice contributor. European Commission AI Pact signatory.

FAQ

What is the difference between AI governance and AI compliance? Compliance is the state of meeting a specific set of requirements. Governance is the ongoing organisational capability to make and keep AI systems compliant as both systems and requirements change. A compliance program can run without governance. It will not survive the first change to either the systems or the requirements.

How is an AI governance tool different from an AI ethics tool? Ethics tools measure properties of AI systems (bias, fairness, explainability). Governance tools run the organisational program that determines what ethical properties matter, documents them as controls, and produces evidence. You need ethics instruments as inputs into your governance program. Neither replaces the other.

Do we need a separate AI governance tool if we already have a GRC platform? Most GRC platforms are document management systems for compliance requirements. They do not connect to engineering stacks, they do not quantify AI-specific risks, they do not understand agents, and their "AI modules" are usually questionnaires pre-populated with EU AI Act articles. If your organisation is running serious AI, the GRC platform is adjacent to the work, not the work itself.

What does an AI governance tool typically cost? Per-user or per-project-seat pricing, typically in the low-to-mid five figures annually for a mid-sized enterprise program, scaling with the number of AI systems governed. The cost of the tool is usually less than 10% of the total cost of the governance program (most of the cost is internal headcount). Buying the wrong tool costs much more than buying the right one, because the refactoring burden is substantial.

Is the Modulos platform available on-premises? Deployment options vary by customer configuration. Speak to a specialist for regulated-industry and air-gapped scenarios.

Closing

The category will consolidate within 18 months. The platforms that survive will be the ones built for where supervision is going, not where it was.

Buy the wrong tool today and the reputational cost compounds well before any fine is assessed. When a visible AI incident surfaces, customers, partners, and boards ask the same question: how did the governance program not catch this? "Our platform produces templates" is not the answer that protects the brand. The AI Act fines are material. For most enterprises, the reputational exposure is larger.

The first governance tool you pick becomes the audit trail for years. Choose once.

Ready to see how Modulos handles AI governance at enterprise scale? Request a demo and we will walk you through how the AI governance platform operationalises EU AI Act, ISO/IEC 42001, and NIST AI RMF on one shared control graph. See the compliance platform and the guide to AI governance.
