For defense primes, ministries, and dual-use vendors

AI governance for defense and national security

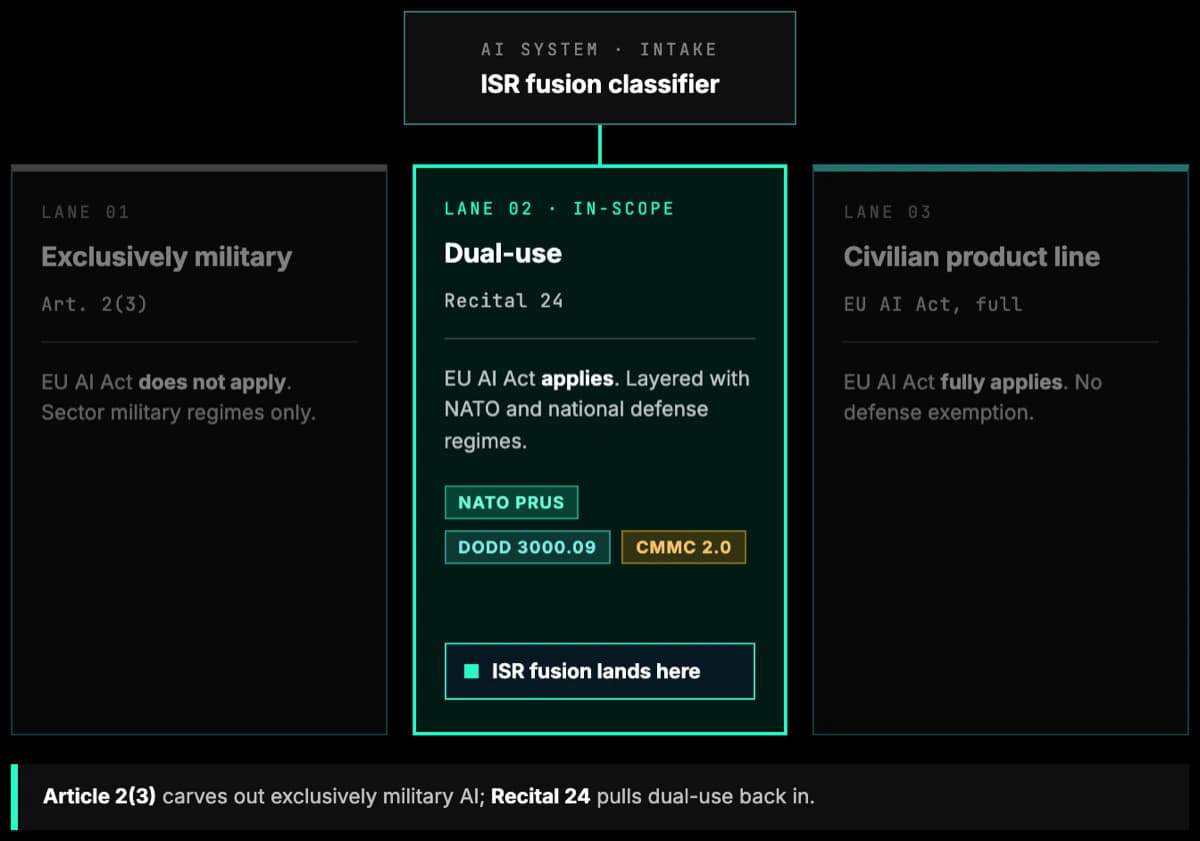

AI governance for defense and national security sits on a narrow carve-out. Article 2(3) of the EU AI Act excludes AI used exclusively for military, defense, or national-security purposes. Recital 24 narrows it further: dual-use, enterprise, and civilian product lines fall back into scope. NATO Principles of Responsible Use and DoDD 3000.09 govern the rest.

Why AI governance for defense starts with the EU AI Act

The instinct to assume defense is outside the EU AI Act is understandable but wrong for most programmes. Article 2(3) excludes AI systems placed on the market, put into service, or used exclusively for military, defense, or national-security purposes, regardless of the type of entity. Recital 24 grounds this in Article 4(2) TEU. In practice the carve-out is narrow. A system placed on the market for an excluded purpose and one or more non-excluded purposes falls back into scope. A military-only system later re-used for civilian, law-enforcement, or humanitarian purposes also falls in scope. Only truly exclusive military use escapes. Most AI that defense primes and ministries actually build, buy, and run has a dual-use or civilian footprint.
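The exclusivity test described above can be sketched in a few lines of Python. This is a minimal illustration, not legal logic: the purpose labels and the `ai_act_scope` function are hypothetical names, and the input is assumed to list every purpose the system is marketed, deployed, or later re-used for.

```python
from enum import Enum


class Scope(Enum):
    EXCLUDED = "excluded under Article 2(3)"
    IN_SCOPE = "in scope of the EU AI Act"


# Hypothetical labels for illustration; the Act defines no such vocabulary.
EXCLUDED_PURPOSES = frozenset({"military", "defense", "national_security"})


def ai_act_scope(purposes: set[str]) -> Scope:
    """Return EXCLUDED only when every listed purpose is an excluded one."""
    if not purposes:
        raise ValueError("at least one purpose is required")
    # A single non-excluded purpose (civilian, law-enforcement,
    # humanitarian, ...) pulls the whole system back into scope.
    if purposes <= EXCLUDED_PURPOSES:
        return Scope.EXCLUDED
    return Scope.IN_SCOPE
```

For example, `ai_act_scope({"military"})` yields `Scope.EXCLUDED`, while `ai_act_scope({"military", "humanitarian"})` yields `Scope.IN_SCOPE`, which mirrors the re-use case above.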

Where the AI Act applies, it applies in full. HR and recruitment AI used by ministries or primes is named high-risk under Annex III. Enterprise, procurement, and civilian product lines follow the same high-risk and GPAI obligations as any non-defense company. Dual-use systems have to be assessed under the Act for each intended purpose. The Act is still the anchor of the governance programme, with sector-specific military doctrine layered on top.

For the exclusively military portion, NATO and US doctrine fill the gap with equivalent rigour. NATO revised its AI Strategy in July 2024 and restated the six Principles of Responsible Use: Lawfulness; Responsibility and Accountability; Explainability and Traceability; Reliability; Governability; and Bias Mitigation. DoD Directive 3000.09, reissued January 2023, governs autonomy in weapon systems and requires appropriate levels of human judgment over the use of force. The DoD Responsible AI Strategy and CDAO Toolkit operationalise five ethical principles across the lifecycle. The Political Declaration on Responsible Military Use of AI and Autonomy commits signatories to auditability, lifecycle testing, senior review, and deactivation capability. ISO/IEC 42001 gives defense organisations the audit-grade management system that maps cleanly to all of them.

Cybersecurity runs underneath. In the US, the CMMC 2.0 program rule (32 CFR 170, effective 16 December 2024) and the final DFARS rule (effective 10 November 2025, phased over three years) make cybersecurity posture contractually binding. The DoD final-rule estimate puts the affected defense industrial base at roughly 338,000 contractors and subcontractors. In the EU, NIS2 and DORA apply to civilian arms of defense primes. The mandate is clear: anchor the programme on the EU AI Act where it applies, on NATO PRUs and DoDD 3000.09 where it does not, and bring both under a single governance graph.

Regulations and Frameworks in Scope

The EU AI Act is the primary driver. Sector regulations and standards sit around it.

EU AI Act Article 2(3)

European Union

Excludes AI used exclusively for military, defense, or national-security purposes. Recital 24 makes the carve-out narrow. Dual-use, HR, enterprise, and civilian product lines of defense organisations remain in scope for high-risk and GPAI obligations.

NATO AI Strategy (2021, revised 2024)

NATO

The revised AI Strategy (July 2024) restates the six Principles of Responsible Use: Lawfulness; Responsibility and Accountability; Explainability and Traceability; Reliability; Governability; and Bias Mitigation. Baseline for Allied AI adoption and vendor supply.

DoDD 3000.09

US DoD

Reissued January 2023. Governs autonomy in weapon systems, requires appropriate levels of human judgment over the use of force, and imposes a senior-review process for certain autonomous and semi-autonomous systems. Binding across the US defense enterprise.

DoD Responsible AI Strategy

US DoD / CDAO

Operationalises the five DoD AI Ethical Principles across the lifecycle through CDAO lines of effort and the CDAO Responsible AI Toolkit (November 2023). Applies to DoD components and increasingly to suppliers via contract terms.

Political Declaration on Responsible Military Use of AI

Multi-state

Non-binding but influential political commitment launched at REAIM (February 2023) and endorsed by 58+ states by late 2024. Covers auditability, defined uses, lifecycle T&E, senior review for high-consequence applications, and deactivation capability.

ISO/IEC 42001

ISO standard

The first AI management-system standard (December 2023). Framework-agnostic and applicable to defense primes, mission integrators, and ministries. Increasingly referenced as an evidentiary baseline where AI Act obligations do not apply.

NIST AI RMF

NIST / US

AI Risk Management Framework 1.0 (January 2023) and the Generative AI Profile (July 2024). Widely adopted across the US defense industrial base. Aligns with the DoD RAI strategy and commercial governance programmes.

Dual-Use Export Controls

EU 2021/821 / US BIS

The Article 5 cyber-surveillance catch-all of Regulation (EU) 2021/821 received Commission guidelines in October 2024, followed by the first annual implementation report in January 2025. US BIS rescinded the AI Diffusion Rule in May 2025, with a replacement policy in development. Both shape exportable defense AI.

CMMC 2.0 and DFARS

US DoD

The 32 CFR 170 program rule has been effective since December 2024. The final DFARS rule is effective 10 November 2025, phased over three years. Together they make cybersecurity maturity contractually binding across the defense industrial base.

Where AI Governance Actually Bites

The pressure points driving board-level attention in this sector.

Weapons and Targeting Decision Support

LAWS, targeting recommenders, and ISR fusion fall outside the EU AI Act under the military carve-out but squarely inside DoDD 3000.09, NATO PRUs, and the Political Declaration. Auditability, lifecycle T&E, and senior review are mandatory artefacts, not optional.

Intelligence and Classified GenAI

Generative AI in classified environments has to reconcile NATO PRU reliability and traceability with intelligence-community information-handling rules. NSM-25 (October 2024) set a US framework for AI in national security. Its status under subsequent executive orders should be monitored.

Dual-Use and Civilian Product Lines

Enterprise AI, HR, procurement, and civilian product lines of defense primes remain in scope of the EU AI Act and GPAI rules. High-risk classification under Annex III is common for HR and recruitment AI used by ministries or primes.

Export Controls and Model Weights

Dual-use Regulation (EU) 2021/821 and the evolving US BIS posture after the AI Diffusion Rule rescission directly shape what frontier model weights and AI-enabled systems can be exported, to whom, and under what licence.

Defense Industrial Base Cybersecurity

CMMC 2.0 and the DFARS final rule (November 2025) make cybersecurity contractually binding. On the EU side, civilian arms of primes carry NIS2 and DORA obligations. AI supply-chain risk extends these into governance over model vendors and cloud inference providers.

Logistics, Sustainment, and HR AI

Predictive maintenance, sustainment analytics, and recruitment AI attract less attention but remain visible to regulators. HR and recruitment AI is listed in AI Act Annex III as high-risk whenever used for civilian deployment, and NATO PRUs apply even where the AI Act does not.

High-Stakes AI Use Cases

Each use case is tagged with the AI Act gates it triggers. The Regulation runs four independent checks (Article 5 prohibitions, Annex III or Article 6 high-risk, Article 50 transparency, and Chapter V GPAI obligations) and the duties stack. A single system can hit several gates at once.
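One way to picture the stacking is as a set of flags, one per gate, evaluated independently for a system already found to be in scope. The sketch below is illustrative only: `SystemFacts` and its yes/no fields are hypothetical stand-ins for the outcome of a legal assessment, not an encoding of the Regulation's tests.

```python
from dataclasses import dataclass
from enum import Flag, auto


class Gate(Flag):
    NONE = 0
    PROHIBITED = auto()    # Article 5 prohibitions
    HIGH_RISK = auto()     # Annex III or Article 6
    TRANSPARENCY = auto()  # Article 50
    GPAI = auto()          # Chapter V


@dataclass(frozen=True)
class SystemFacts:
    # Hypothetical assessment answers, assumed to be supplied by counsel.
    prohibited_practice: bool = False
    annex_iii_or_article_6: bool = False
    article_50_transparency: bool = False
    gpai_model_provider: bool = False


def gates(facts: SystemFacts) -> Gate:
    """Run the four checks independently and combine whatever triggers."""
    result = Gate.NONE
    if facts.prohibited_practice:
        result |= Gate.PROHIBITED
    if facts.annex_iii_or_article_6:
        result |= Gate.HIGH_RISK
    if facts.article_50_transparency:
        result |= Gate.TRANSPARENCY
    if facts.gpai_model_provider:
        result |= Gate.GPAI
    return result
```

No check short-circuits another, so a recruitment chatbot assessed as both Annex III and Article 50 would come back as `Gate.HIGH_RISK | Gate.TRANSPARENCY`.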

Lethal autonomous and semi-autonomous weapon systems (LAWS)

Out of scope of the EU AI Act under Article 2(3) when used exclusively for military purposes. Governed instead by DoDD 3000.09, NATO PRUs, and the Political Declaration. Senior-level review, lifecycle T&E, and deactivation capability are baseline expectations.

Targeting decision support and ISR fusion

Out of scope of the EU AI Act when use is exclusively military (Article 2(3)), but still subject to NATO PRUs and allied doctrine on meaningful human control. Governance evidence is operationally essential.

HR, recruitment, and talent AI used by ministries and primes

High-risk under Annex III point 4 (employment and worker management). Full conformity, documentation, and bias-testing obligations apply independently of the military carve-out.

Dual-use civilian products of defense primes

In scope of the EU AI Act. Any mixed-purpose system falls back into scope under Recital 24. The applicable gate depends on intended use, most often Annex III high-risk. Chapter V GPAI duties sit separately on the model provider.

Generative AI in classified and cleared environments

Out of scope of the AI Act when exclusively national security (Article 2(3)), but governed by NSM-25-style frameworks, NATO PRUs, and classified information-handling rules. Chapter V obligations still sit on the model provider upstream.

Predictive maintenance, logistics, and sustainment AI

No AI Act gate triggered when advisory and not a safety component of a weapons system. NATO PRUs and ISO/IEC 42001 still provide the governance template even where the Act does not apply.

How Modulos Solves It

A single governance graph covering every obligation above, so controls written for one framework earn credit across the rest.

AI Act, NATO PRUs, DoDD 3000.09, and ISO 42001 in one graph

A single control set fulfils many obligations. Dual-use systems map to the AI Act. Exclusively military systems map to NATO and DoD doctrine. Both share evidence through ISO/IEC 42001 and NIST AI RMF.
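The idea of one control earning credit across several frameworks can be sketched as a simple crosswalk. The control names and the mapping below are assumptions made up for illustration; they do not reproduce the Modulos data model or any official crosswalk.

```python
from collections import defaultdict

# Illustrative crosswalk only: control names and pairings are assumptions.
CROSSWALK = {
    "decision-logging": {
        ("NATO PRU", "Explainability and Traceability"),
        ("Political Declaration", "Auditability"),
    },
    "pre-deployment-review": {
        ("DoDD 3000.09", "Senior review"),
        ("Political Declaration", "Senior review"),
    },
    "bias-testing": {
        ("NATO PRU", "Bias Mitigation"),
        ("EU AI Act", "High-risk bias testing"),
    },
}


def coverage(implemented):
    """Map each framework to the obligations the implemented controls touch."""
    covered = defaultdict(set)
    for control in implemented:
        # Unknown controls contribute nothing rather than raising.
        for framework, obligation in CROSSWALK.get(control, ()):
            covered[framework].add(obligation)
    return {fw: sorted(obs) for fw, obs in covered.items()}
```

Calling `coverage(["decision-logging", "bias-testing"])` reports obligations under three frameworks from two controls, which is the reuse the graph is meant to capture.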

AI inventory that distinguishes scope boundaries

Each AI system is tagged exclusively-military, dual-use, or civilian, with version, supplier, mission context, and classification fields. AI Act applicability is decided once and recorded, not re-litigated each audit.

Senior review and lifecycle T&E workflows

DoDD 3000.09 senior review, Political Declaration lifecycle T&E, and deactivation capability run as structured workflows with tamper-evident audit trails. No more scattered memoranda and email chains.
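A common generic way to make an audit trail tamper-evident is a hash chain, where each entry commits to the one before it. The sketch below shows that technique under stated assumptions: it is not a description of how Modulos implements its trails, and the payload fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, payload):
    # Canonical JSON so the same payload always hashes the same way.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(trail, payload):
    """Append a workflow event that commits to the previous entry's hash."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    entry = {"payload": payload, "prev": prev_hash,
             "hash": _digest(prev_hash, payload)}
    trail.append(entry)
    return entry


def verify(trail):
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in trail:
        expected = _digest(prev_hash, entry["payload"])
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this detects edits but does not prevent them. In practice the latest hash would also need to be anchored somewhere the editor cannot reach, such as a separate signed record.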

Defense industrial base cyber alignment

Controls satisfy CMMC 2.0, NIS2, and DORA where civilian obligations apply. Primes and their enterprise functions run one programme instead of three, and AI governance sits inside it rather than alongside.

FAQ

Article 2(3) excludes AI systems placed on the market, put into service, or used exclusively for military, defense, or national-security purposes, regardless of the type of entity. Recital 24 narrows this in practice. Mixed-purpose systems placed on the market for an excluded and one or more non-excluded purposes fall back into scope. Military-only systems later re-used for civilian, law-enforcement, or humanitarian purposes also fall in scope. Most dual-use, enterprise, and civilian product lines of defense organisations remain fully in scope.

Further reading

Deeper context, platform pages, and framework documentation from docs.modulos.ai.

- AI governance platform: How Modulos operationalises the EU AI Act, NATO PRUs, and NIST AI RMF in one graph.

- Guide to AI governance: The practice, the frameworks, and how to stand up a governance programme.

- NIST AI RMF: The US framework referenced across DoD Responsible AI and the defense industrial base.

- EU AI Act framework documentation: Technical guidance on conformity assessment and CE marking for dual-use AI.

- ISO 42001 documentation: Clauses 4 to 10 mapped to Modulos controls and evidence.

Ready to Run Defense AI Governance Across the Carve-Out?

See how Modulos maps the EU AI Act dual-use portion, NATO PRUs, DoDD 3000.09, NIST AI RMF, ISO/IEC 42001, the Political Declaration, and CMMC 2.0 into one governance graph.