AI Governance Platforms Aren't the Brake. They're the Steering Wheel

AI Governance Is the Business Enabler You Have Been Looking For

Why governance accelerates effective AI use, not blocks it

An AI governance platform is not an overhead cost. It is the infrastructure that lets enterprises move AI from isolated pilots to production at scale, with quantified risk, automated compliance, and board-level confidence. The default assumption that governance slows innovation down is wrong, and the organisations clinging to it are the ones falling behind.

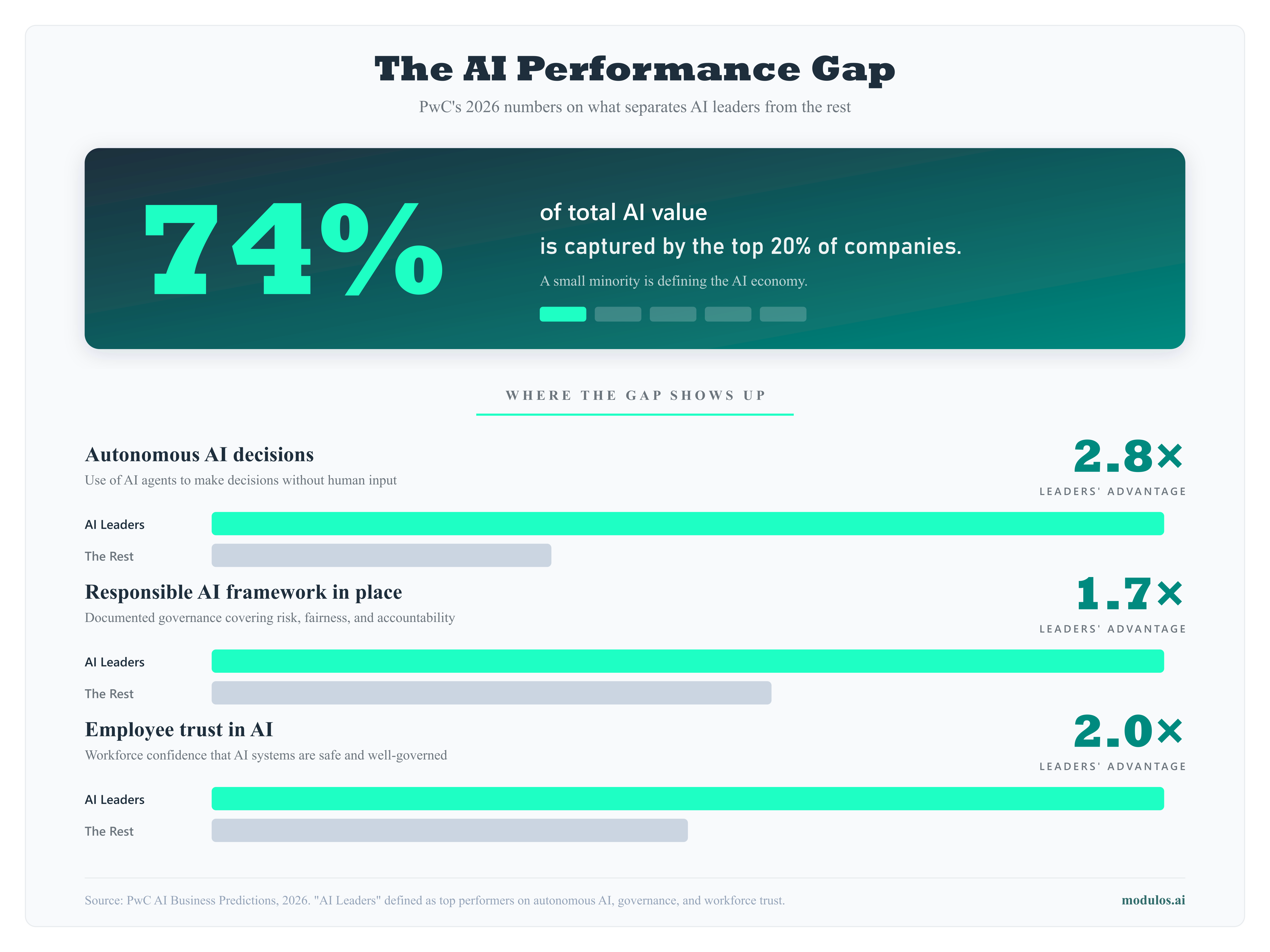

This is not a fringe view. PwC's 2026 AI Performance study, covering 1,217 senior executives across 25 sectors, found that 74% of AI's economic value is captured by just 20% of organisations. The difference between leaders and laggards is not more AI tools. It is stronger foundations around data, governance, and trust. The leaders are 1.7 times more likely to have a responsible AI framework and 1.5 times more likely to have a cross-functional AI governance board. Their employees are twice as likely to trust AI outputs. IBM's Responsible Technology Board has reached the same conclusion: the ability to use responsible AI as part of business operations is now key to remaining competitive, attracting talent, and retaining investor confidence.

This post breaks down seven ways a structured governance platform turns AI from a stalled experiment into a scalable, insurable, sellable investment.

Why AI stalls without a governance platform

Every enterprise that has deployed more than a handful of AI systems hits the same wall. The first few projects move quickly. A proof of concept here, a pilot there, usually driven by a single enthusiastic team. Then the questions arrive.

Who approved this? What data does it use? Is it compliant with the frameworks we are subject to? What happens if it fails? Who is accountable?

Without governance infrastructure, each question triggers an ad-hoc process. Legal gets pulled in. Risk gets pulled in. Security gets pulled in. The same conversations happen for every project, from scratch, with no institutional memory and no consistent methodology.

The result is not responsible caution. It is organisational paralysis. Projects sit in review queues. Teams stop proposing AI initiatives because the approval process is unpredictable. The organisation falls behind competitors who have figured out how to move AI through a structured pipeline.

The numbers confirm it. According to RAND Corporation's 2025 analysis, over 80% of AI projects fail to deliver their intended business value, at twice the failure rate of non-AI IT projects. S&P Global found that 42% of companies abandoned at least one AI initiative in 2025, up from 17% the year before, with the average sunk cost per abandoned initiative reaching $7.2 million. The root cause is rarely the technology itself. Of 140 enterprise AI implementations analysed, only 23% of failures were caused by model performance, data quality, or integration issues. The remaining 77% were organisational: missing governance structures, undefined success criteria, and no clear accountability.

A purpose-built AI governance platform solves this by making the process repeatable. A standardised intake workflow, a clear classification methodology, a pre-mapped control framework, and a defined risk tolerance give every project a known path from proposal to production. The first project through the pipeline takes effort to set up. The twentieth takes a fraction of the time.

How AI governance assessments kill bad projects early

One of the least appreciated benefits of an AI governance assessment is its ability to prevent wasted investment. Not by prohibiting projects, but by quantifying their risk-adjusted value before significant resources are committed.

Most organisations evaluate AI projects on expected benefit alone. The business case shows projected cost savings or revenue uplift, leadership approves, and the team builds. Risk enters the conversation only after something goes wrong.

Governance changes the sequencing. When you quantify AI risk in monetary terms (probability of failure multiplied by financial impact, summed across governance, technical, ethical, legal, and operational categories), you can set that expected loss against the projected benefit and get a risk-adjusted ROI. Some projects that look like winners on a benefit-only analysis become clear losers once risk is factored in.

That is not governance blocking innovation. That is governance saving the organisation from investing six months and a significant budget into a project that was always going to fail. The project dies on paper, early, cheaply. The budget and the team get redirected to a project with a better risk-adjusted return.

Two outcomes, both wins: the risk-adjusted ROI is negative and you kill early, or you de-risk and proceed with full visibility. The only losing move is proceeding without quantification.
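To make the idea concrete, here is a minimal sketch of a risk-adjusted ROI check in Python. Every figure, the `RiskItem` structure, and the exact ROI formula (benefit minus expected loss minus cost, divided by cost) are illustrative assumptions, not the method of any particular platform.

```python
from dataclasses import dataclass


@dataclass
class RiskItem:
    category: str       # e.g. "legal" or "technical"
    probability: float  # assumed chance of the loss event over the evaluation period (0 to 1)
    impact: int         # assumed financial impact in EUR if the event occurs


def expected_loss(risks):
    """Sum of probability x impact across all risk categories."""
    return sum(r.probability * r.impact for r in risks)


def risk_adjusted_roi(benefit, cost, risks):
    """ROI after netting the expected loss against the projected benefit."""
    return (benefit - expected_loss(risks) - cost) / cost


def decide(benefit, cost, risks):
    """Kill on paper if the risk-adjusted ROI is negative, otherwise proceed."""
    if risk_adjusted_roi(benefit, cost, risks) < 0:
        return "kill early"
    return "de-risk and proceed"


# Hypothetical project: looks attractive on benefit alone.
benefit = 1_000_000
cost = 600_000
risks = [
    RiskItem("legal", 0.10, 2_000_000),
    RiskItem("technical", 0.30, 500_000),
    RiskItem("operational", 0.20, 400_000),
]

benefit_only_roi = (benefit - cost) / cost
print(f"Benefit-only ROI:  {benefit_only_roi:.1%}")
print(f"Expected loss:     EUR {expected_loss(risks):,.0f}")
print(f"Risk-adjusted ROI: {risk_adjusted_roi(benefit, cost, risks):.1%}")
print(f"Decision:          {decide(benefit, cost, risks)}")
```

With these made-up inputs the project shows a healthy return on benefit alone, but the expected loss pushes the risk-adjusted figure below zero, which is exactly the kind of project that should die on paper.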

Governance turns AI from a cost conversation into an investment conversation

The most common complaint from AI leaders is that their board sees AI governance as a cost centre. Budget requests for governance tooling get scrutinised as overhead. Risk management is treated as a tax on innovation.

This framing persists because most governance outputs are qualitative. A heat map showing red, amber, and green risks does not give a CFO anything actionable. It does not connect to P&L. It does not inform capital allocation. It exists in its own world, disconnected from the financial reasoning that drives every other decision.

Monetary risk quantification changes the conversation entirely. When a platform like Modulos shows that an AI system carries EUR 2.4M in quantified risk exposure, and that a specific set of mitigations would reduce that to EUR 800K at a cost of EUR 200K, the board is not looking at a compliance expense. They are looking at an investment with a clear return: EUR 1.6M of exposure removed for EUR 200K spent.

This is the shift a purpose-built AI governance platform enables: from "how much do we have to spend on compliance?" to "where should we invest to maximise risk-adjusted returns from AI?" The first question is a cost conversation. The second is a strategy conversation. Boards engage very differently with each.
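The arithmetic behind the EUR 2.4M example is simple enough to check in a few lines. The sketch below uses only the three figures from the example; the function name and the choice to express the return as net benefit divided by mitigation cost are assumptions made for illustration.

```python
def mitigation_return(exposure_before, exposure_after, mitigation_cost):
    """Return (avoided exposure, net benefit, return multiple) for a mitigation spend."""
    avoided = exposure_before - exposure_after
    net_benefit = avoided - mitigation_cost
    return avoided, net_benefit, net_benefit / mitigation_cost


# Figures from the example: EUR 2.4M exposure, reduced to EUR 800K, for EUR 200K.
avoided, net_benefit, multiple = mitigation_return(2_400_000, 800_000, 200_000)

print(f"Exposure avoided: EUR {avoided:,}")
print(f"Net benefit:      EUR {net_benefit:,}")
print(f"Return multiple:  {multiple:.1f}x")
```

Framed this way, the EUR 200K is no longer a compliance line item. It is a spend that removes EUR 1.6M of exposure, for a net benefit of EUR 1.4M.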

The market is moving accordingly. The global AI governance market was valued at approximately USD 308 million in 2025 and is projected to exceed USD 3.5 billion by 2033, growing at a CAGR of around 36% (Grand View Research). That growth reflects a shift from governance as an afterthought to governance as core infrastructure for AI investment.

AI governance tools as a competitive advantage in enterprise sales

For organisations selling AI-powered products or services, AI governance tools are not just an internal capability. They are a market differentiator.

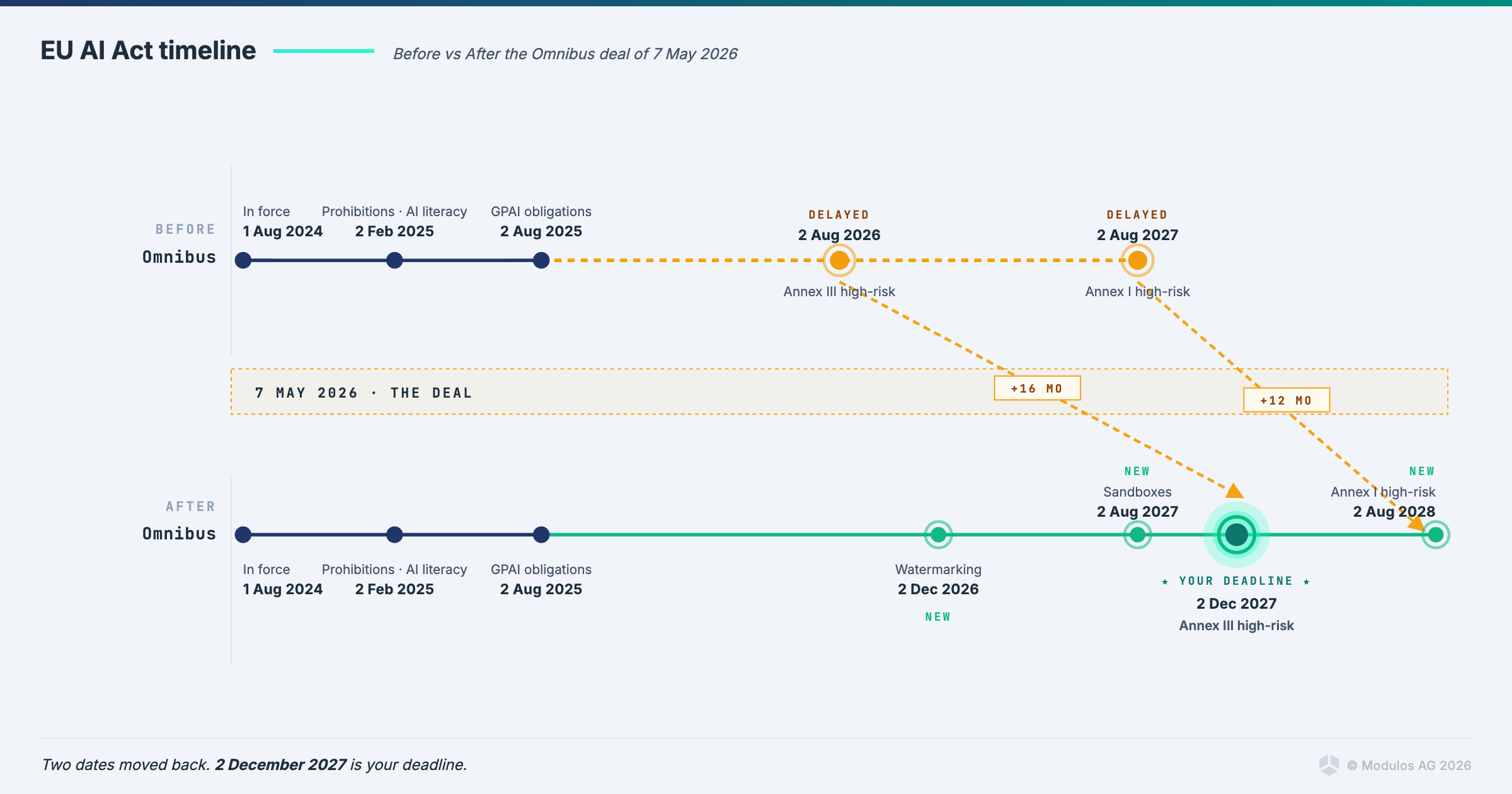

Enterprise buyers in financial services, healthcare, and government are increasingly requiring evidence of AI governance as part of procurement. They want to see risk assessments, fairness testing, compliance documentation, and audit trails before they will sign a contract. The EU AI Act formalises this for high-risk systems with enforcement beginning August 2026, but the market expectation is already ahead of the legislation.

Organisations that can produce this evidence on demand, from a live governance platform rather than a hastily assembled PDF, close deals faster. They pass vendor security reviews more smoothly. They satisfy due diligence requirements without pulling engineers off product work to answer questionnaires.

When a prospect asks "how do you govern the AI in your product?" and the answer is a live dashboard showing risk classification, control execution, fairness metrics, and compliance status across relevant frameworks, the conversation gets shorter, and it gets shorter in your favour.

Governance attracts talent and retains investor confidence

The competitive advantage of an AI governance platform extends beyond sales cycles. It shapes how an organisation attracts people and capital.

Engineers and data scientists increasingly want to work at organisations where AI is developed responsibly. When governance is operational rather than aspirational, teams spend less time on repetitive compliance tasks and more time on creative, high-impact work. IBM's Responsible Technology Board has noted that AI governance, when integrated properly, frees employees to focus on complex problems requiring critical thinking rather than routine documentation. That is a talent retention argument, not just a compliance one.

The investor and shareholder angle is equally direct. Boards and institutional investors are asking harder questions about AI risk exposure. An organisation that can demonstrate quantified risk, continuous monitoring, and auditable compliance across its AI portfolio signals maturity. One that cannot signals unmanaged liability. The difference affects valuations, funding rounds, and board confidence in approving further AI investment.

Governance is how an organisation proves, to its own people and its investors, that it is scaling AI deliberately rather than recklessly.

Insurance is the accelerating signal

The insurance market is sending a signal that most organisations have not fully absorbed. Major insurers are actively seeking to exclude AI-related liabilities from corporate policies.

This is not theoretical. AIG, W.R. Berkley, and Great American have each sought regulatory clearance for new policy exclusions that would allow them to deny claims tied to the use or integration of AI systems (Financial Times, November 2025). W.R. Berkley's proposed exclusion would bar claims involving "any actual or alleged use" of AI, even if the technology forms only a minor part of a product or workflow. In January 2026, Verisk's ISO division released new general liability endorsements (CG 40 47 and CG 40 48) giving carriers standardised language to exclude generative AI from commercial general liability policies. With ISO forms underpinning approximately 82% of US property and casualty policies, rapid adoption is expected across the market.

The liability chain has fractured. AI developers disclaim liability in their terms of service. Deployers can no longer transfer that risk to insurers. The exposure sits on the deployer's balance sheet.

This matters because governance frameworks are becoming prerequisites for coverage, following the same pattern the industry saw with cyber insurance a decade ago. As Lexology noted in January 2026, AI governance is now the only practical pathway to reduce risk, retain insurance coverage, and maintain defensibility against AI-driven claims. Organisations that can demonstrate operational AI governance through quantified risk, continuous monitoring, documented controls, and auditable evidence will remain insurable. Those that cannot will face exclusions, higher premiums, or uncovered liabilities.

Unlike regulation, which phases in over years with transition periods, insurance exclusions take effect at the next policy renewal. No transition period. Universal application.

Governance creates trust, and trust creates speed

The deepest value of an AI governance platform is not in any single feature. It is in the trust it creates across the organisation.

When leadership trusts that AI risks are being quantified and managed, they approve projects faster. When legal trusts that classification and compliance are handled systematically, they stop reviewing every deployment from scratch. When security trusts that AI systems are monitored continuously and integrated into the governance framework, they stop acting as a bottleneck. When the board trusts that AI risk management is reported in the same financial language as every other business risk, they fund AI investment with confidence.

This organisational trust is the real accelerator. PwC's data backs this up directly: AI leaders are almost three times (2.8x) more likely than peers to have increased the number of decisions made without human intervention. They have not achieved this by removing oversight. They have achieved it by building governance structures that make oversight systematic rather than ad-hoc. The result is that their employees are twice as likely to trust AI outputs, which in turn accelerates adoption and impact.

The choice is not governance or innovation. It is governance as innovation infrastructure.

There are two paths organisations take with AI.

The first is to avoid risk by constraining AI adoption: limiting use cases, requiring manual approvals for every deployment, or avoiding AI altogether in regulated areas. This leads to stagnation. Competitors who govern AI properly will move faster.

The second is to manage risk quantitatively by building governance infrastructure that classifies systems, quantifies risk, maps controls across frameworks, monitors continuously, and reports in financial terms. This is where ISO/IEC 42001 certification, EU AI Act conformity assessment, and NIST AI RMF alignment converge inside a single platform.

The technology to take the second path exists today. Enterprises that treat responsible AI as a core business strategy, not as a side project owned by legal, are the ones building durable competitive advantages. The question is whether you build the infrastructure now, when governance is a strategic differentiator, or later, when it is a regulatory requirement and everyone is scrambling to catch up.

What separates a purpose-built AI governance platform from alternatives

| Criterion | Purpose-built AI governance platform (Modulos) | Generic GRC tool | Manual / spreadsheet |
|---|---|---|---|
| Multi-framework support | Yes. EU AI Act, ISO 42001, NIST AI RMF, OWASP in one platform | Partial. Requires configuration per framework | No |
| Quantitative risk scoring | Yes. Monetary values via Monte Carlo simulation | No. Qualitative only | No |
| Evidence automation | Yes. AI agents collect from GitHub, Confluence, cloud infrastructure | No | No |
| EU AI Act scoping | Built-in questionnaire with automated classification | Manual | Manual |
| Deployment options | SaaS, private cloud, on-premise | Usually SaaS only | N/A |
| ISO 42001 certification | Supported. Modulos is Europe's first certified AI governance platform | Not supported | Not supported |
| Cross-framework deduplication | Yes. One control satisfies requirements across multiple frameworks simultaneously | No | No |
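For readers unfamiliar with the Monte Carlo approach named in the table, the sketch below shows the general technique using only the Python standard library. It is not a description of how Modulos implements it. The risk register, the probabilities, the triangular impact distributions, and the summary statistics are all hypothetical choices made for illustration.

```python
import random
import statistics

# Hypothetical risk register:
# (name, assumed annual probability, (low, most likely, high) impact in EUR)
RISKS = [
    ("regulatory fine", 0.05, (100_000, 500_000, 2_000_000)),
    ("model outage", 0.20, (20_000, 80_000, 300_000)),
    ("biased decision claim", 0.10, (50_000, 200_000, 900_000)),
]


def simulate_annual_loss(risks, rng):
    """One simulated year: each risk either occurs or not, with a sampled impact."""
    total = 0.0
    for _name, probability, (low, mode, high) in risks:
        if rng.random() < probability:
            # random.triangular takes (low, high, mode) in that order.
            total += rng.triangular(low, high, mode)
    return total


def run_simulation(risks, trials=20_000, seed=42):
    """Repeat the simulated year many times and summarise the loss distribution."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    losses = sorted(simulate_annual_loss(risks, rng) for _ in range(trials))
    p95_index = min(trials - 1, trials * 95 // 100)
    return {
        "mean": statistics.fmean(losses),
        "p95": losses[p95_index],
        "max": losses[-1],
    }


result = run_simulation(RISKS)
print(f"Mean annual loss: EUR {result['mean']:,.0f}")
print(f"95th percentile:  EUR {result['p95']:,.0f}")
print(f"Worst simulated:  EUR {result['max']:,.0f}")
```

The point of simulating rather than multiplying probability by impact once is that the output is a distribution. A board can see not only the average expected loss but also how bad a plausible bad year looks, which a single red, amber, or green cell cannot express.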

Ready to see how governance becomes a steering wheel instead of a brake? Request a demo and we will walk you through how the AI governance platform operationalises risk quantification, multi-framework compliance, and AI portfolio management for your organisation.

Modulos is an AI governance platform that helps organisations classify, assess, monitor, and demonstrate compliance for their AI systems. It is Europe's first ISO/IEC 42001-certified AI governance platform. Visit modulos.ai to learn more.