The 4 Stages of the AI Governance Maturity Model: Where Are You on This Journey?

It's probably not where you want to be

AI governance maturity model: from awareness to advantage

Modulos · May 2026 · 9 min read

An AI governance maturity model gives organisations a structured way to measure how far they have progressed from ad-hoc AI adoption to fully governed, audit-ready operations. With the EU AI Act's high-risk system obligations approaching, and fines for the most serious infringements reaching up to €35 million or 7% of global annual turnover (whichever is higher), knowing where you sit on the maturity curve is no longer optional - it is a financial imperative.

This guide presents a practical framework based on what we observe across hundreds of conversations with CISOs, compliance officers, and technology leaders. It is not theoretical. It reflects real patterns in how enterprises move from unmanaged AI adoption to governance that accelerates deployment rather than blocking it.

Why your organisation needs an AI governance maturity model

The gap between AI adoption and AI governance is widening in most enterprises. Technology teams have moved fast - piloting AI, deploying models, integrating generative tools into workflows. But governance has not kept pace.

That gap creates three compounding risks:

- Regulatory exposure. The EU AI Act's high-risk system deadline is approaching. Organisations that cannot classify their AI systems by risk level, demonstrate conformity, or produce audit-ready documentation face substantial penalties.

- Operational blind spots. Shadow AI - employees adopting AI tools outside IT's visibility - creates untracked compliance gaps and data leakage vectors that grow with every new tool deployed.

- Strategic drag. Without governance infrastructure, every new AI deployment requires manual risk assessment, ad-hoc evidence collection, and framework-by-framework compliance work that scales linearly with portfolio size.

A maturity model turns these risks into a measurable trajectory. It tells you where you are, what is missing, and what specific capabilities you need to move forward.

The four levels of AI governance maturity

Each level represents a distinct stage in your governance journey, from awareness to competitive advantage.

Most enterprises sit at Level 2 or Level 3 today. The question is not whether to reach Level 4 - it is how fast, and with what tooling.

Level 1: AI aware

This is where most mid-market organisations still sit. AI is not absent - it is embedded in the tools they already use: email spam filters, chatbot widgets, recommendation features inside SaaS platforms. But nobody has mapped these as AI systems, and nobody is applying a governance lens.

Level 1 Characteristics

- AI inventory: None. Systems with AI components are not identified as such.

- Governance: No formal AI governance framework. AI decisions happen ad hoc within teams.

- Regulatory readiness: The EU AI Act is on the radar but not yet a workstream.

- Accountability: No designated owner for AI risk or oversight.

To move to Level 2: Start by acknowledging that AI is already in your organisation - even if you did not deploy it intentionally. Identify where AI-powered tools are being used, by whom, and for what purpose. This is the seed of your AI inventory.

Level 2: AI exploring

This is the most common stage we see in enterprise conversations today. Innovation teams are running proofs of concept. Employees are experimenting with generative AI tools - sometimes with approval, often without. There is genuine executive enthusiasm, but governance is lagging behind the adoption curve.

The biggest risk at this level is shadow AI: employees adopting tools independently, outside IT's visibility. Every untracked tool is a potential compliance gap, a data leakage vector, and a governance blind spot.

Level 2 Characteristics

- AI inventory: Partial at best. Known pilots are tracked; shadow AI is not.

- Governance: Informal. A policy document may exist, but there is no enforcement mechanism.

- Regulatory readiness: Awareness of the EU AI Act exists, but no risk classification or conformity assessment work has started.

- Accountability: Interest from the CISO or CDO, but no dedicated function or resource.

To move to Level 3: Build a complete AI inventory - including shadow AI. Establish a basic intake process so that new AI tools pass through triage before deployment. Assign someone explicit responsibility for AI governance, even if it is part of an existing role.
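A basic intake process can start very small. The Python sketch below records every request in the inventory before triage routes it to a review track, so nothing reaches deployment unlisted. The AIToolRequest fields, the three review tracks and the routing rules are hypothetical assumptions chosen for illustration; they are not the EU AI Act's risk categories and not a prescribed schema.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical intake record: field names are illustrative assumptions only.
@dataclass
class AIToolRequest:
    name: str
    owner: str
    purpose: str
    processes_personal_data: bool
    customer_facing: bool
    makes_decisions_about_people: bool

@dataclass
class Inventory:
    entries: List[AIToolRequest] = field(default_factory=list)

    def register(self, request: AIToolRequest) -> None:
        self.entries.append(request)

def triage(request: AIToolRequest) -> str:
    """Route a request to a review track using simple, assumed rules."""
    if request.makes_decisions_about_people:
        # Possible high-risk use: escalate for full risk classification.
        return "full-review"
    if request.processes_personal_data or request.customer_facing:
        return "standard-review"
    return "fast-track"

def intake(request: AIToolRequest, inventory: Inventory) -> str:
    # Record first, decide second: every request lands in the inventory.
    inventory.register(request)
    return triage(request)

inventory = Inventory()
track = intake(AIToolRequest("Meeting summariser", "Sales", "Summarise calls",
                             True, False, False), inventory)
print(track)  # standard-review
```

Registering before triage is the design choice that matters: even a rejected request leaves a trace, which is exactly what a shadow-AI inventory lacks.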

Level 3: AI operational

At this level, AI is delivering real business value - fraud detection models, recommendation engines, automated document processing, customer-facing chatbots. There is some structure around deployment, but governance tends to be reactive: triggered by incidents, audit findings, or regulatory deadlines rather than embedded into the development lifecycle.

This is where the EU AI Act conversation becomes urgent. With high-risk system requirements approaching, organisations at Level 3 often find themselves needing to retrofit governance onto systems already in production - a significantly more expensive and disruptive process than building it in from the start.

Level 3 Characteristics

- AI inventory: Exists but may be incomplete. Production systems are tracked; experimental ones often go untracked.

- Governance: Reactive. Assessments happen when triggered, not continuously.

- Regulatory readiness: Risk classification has started. Conformity assessment gaps are becoming visible. The approaching deadline for high-risk AI systems is now a board-level concern.

- Accountability: A designated owner exists but often lacks tooling, budget, or board visibility.

To move to Level 4: This is the critical transition - and where purpose-built tooling makes the difference. Manual processes (spreadsheet-based risk assessments, email-driven evidence collection) do not scale. You need automated risk assessments, continuous control monitoring, multi-framework mapping, and a platform that connects your governance posture to your actual AI infrastructure.

Level 4: AI governed

Governance is integrated into the AI lifecycle - not a checkpoint at the end, but a continuous process running from intake through deployment to runtime inspection. Risk is quantified in monetary terms, not colour-coded heat maps. Controls are mapped across frameworks simultaneously, so a single assessment addresses the overlapping requirements of the EU AI Act, ISO/IEC 42001, and NIST AI RMF without tripling the work.

Critically, organisations at Level 4 treat governance as a competitive advantage, not a compliance burden. They ship AI faster because governance is built into the pipeline. They win customer trust because they can demonstrate compliance on demand. They make better investment decisions because they understand the financial exposure of every AI system in their portfolio.

Level 4 Characteristics

- AI inventory: Complete, continuous, and connected to the infrastructure where AI systems live.

- Governance: Proactive and automated. Risk assessments, evidence collection, and control mapping run continuously.

- Regulatory readiness: Full conformity assessment capability. Audit-ready documentation available at all times. CE marking requirements met for high-risk AI systems.

- Accountability: Board-level visibility. Financial risk quantification. Clear ownership and escalation paths.

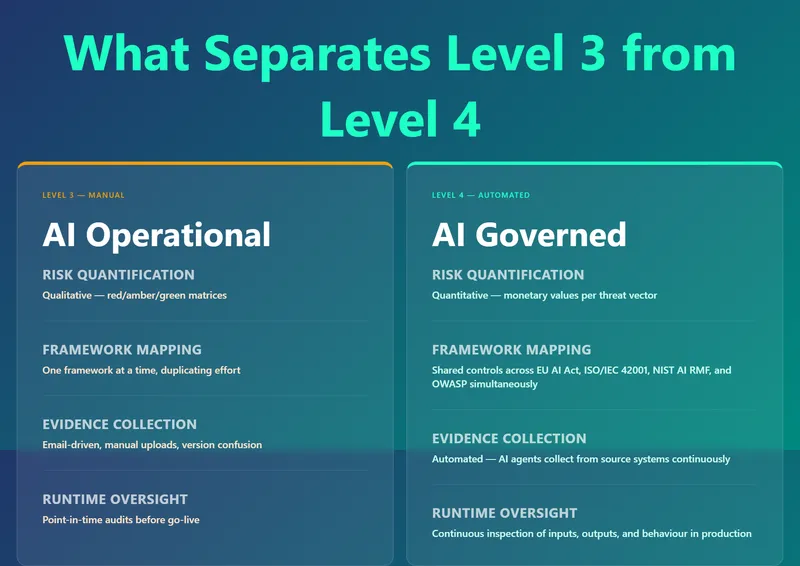

What separates Level 3 from Level 4

The jump from Level 3 to Level 4 is where most organisations stall. The reason is tooling. At Level 3, governance work is typically manual: risk assessments in spreadsheets, evidence stored in shared drives, compliance tracked per framework in separate workstreams. This approach breaks down as the AI portfolio grows.

Level 4 requires four capabilities that manual processes cannot deliver:

Level 3 vs Level 4 comparison: Manual vs Automated governance



The shift from qualitative to quantitative risk management is particularly significant. When risk is expressed in currency - cost of non-compliance, exposure per system, trend over time - management can make investment decisions. Traffic-light matrices do not enable that conversation.
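The article does not specify how monetary risk is calculated. One common technique is expected annual loss: for each threat scenario, multiply the assumed yearly probability by the assumed financial impact, then sum per system. The Python sketch below shows that arithmetic; the ThreatScenario fields, system names, probabilities and euro amounts are hypothetical inputs for illustration, not Modulos's method or real data.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class ThreatScenario:
    system: str
    threat: str
    annual_probability: float  # assumed chance the event occurs in a year (0 to 1)
    impact_eur: float          # assumed loss in euros if it does occur

def expected_annual_loss(s: ThreatScenario) -> float:
    """Expected loss per year = probability x impact (a common simplification)."""
    return s.annual_probability * s.impact_eur

def exposure_per_system(scenarios: List[ThreatScenario]) -> Dict[str, float]:
    """Sum expected annual loss across all threat scenarios for each AI system."""
    totals: Dict[str, float] = {}
    for s in scenarios:
        totals[s.system] = totals.get(s.system, 0.0) + expected_annual_loss(s)
    return totals

# Hypothetical portfolio with illustrative numbers only.
portfolio = [
    ThreatScenario("credit-scoring", "discriminatory outcomes", 0.05, 2_000_000),
    ThreatScenario("credit-scoring", "training-data leakage", 0.02, 500_000),
    ThreatScenario("support-chatbot", "harmful output", 0.10, 200_000),
]

# Rank systems by exposure so the largest financial risk is discussed first.
for system, eur in sorted(exposure_per_system(portfolio).items(), key=lambda kv: -kv[1]):
    print(f"{system}: EUR {eur:,.0f}")
# credit-scoring: EUR 110,000
# support-chatbot: EUR 20,000
```

Re-running the same calculation each quarter gives the "trend over time" view; the hard part in practice is estimating probabilities and impacts credibly, which this sketch takes as given.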

How to assess where your organisation sits today

If you recognised your organisation in the descriptions above, you are not alone. Three diagnostic questions cut through ambiguity:

- Do you have a complete inventory of every AI system in use? If the answer involves "we think so" or "mostly," you are at Level 2 at best.

- Can you classify each AI system by risk level under the EU AI Act? If classification has not started, the approaching high-risk system deadline is a concrete problem.

- Can you demonstrate compliance to an auditor on demand? If producing evidence requires a multi-week sprint, governance is reactive: Level 3.

If the answer to all three is yes, and your controls are automated with risk quantified in monetary terms, you are at Level 4.
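The diagnostic questions can be read as a simple decision procedure. The Python sketch below encodes them; the function name and return labels are hypothetical, and treating any "no" after the inventory question as a cap at Level 3 is an assumption of this sketch, since the model does not state a level for every combination of answers. The questions also cannot distinguish Level 1 from Level 2.

```python
def estimate_level(complete_inventory: bool,
                   can_classify_by_risk: bool,
                   evidence_on_demand: bool,
                   controls_automated: bool,
                   risk_in_currency: bool) -> str:
    """Rough maturity estimate from the three questions plus the Level 4 criteria.

    Assumption: any 'no' after the inventory question caps the estimate at Level 3.
    """
    if not complete_inventory:
        # "We think so" or "mostly" counts as an incomplete inventory.
        return "Level 2 at best"
    if not all([can_classify_by_risk, evidence_on_demand,
                controls_automated, risk_in_currency]):
        return "Level 3 at most"
    return "Level 4"

# Example: complete inventory, but evidence still takes a multi-week sprint.
print(estimate_level(True, True, False, False, False))  # Level 3 at most
```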

How Modulos accelerates the journey to Level 4

The Modulos AI governance platform automates the transition from fragmented, reactive governance to a continuous, integrated, audit-ready posture. It is purpose-built for organisations at Level 2 or 3 that need to reach Level 4 before the EU AI Act deadline.

Automated intake and inventory. Every new AI tool passes through Scout, the Modulos AI agent, which triages submissions in seconds - profiling risk, identifying gaps, and routing to the right reviewer. Tools that enter through intake are inventoried from day one instead of surfacing later as shadow AI.

Multi-framework shared controls. A single control implementation satisfies overlapping requirements across the EU AI Act, ISO/IEC 42001, NIST AI RMF, and OWASP LLM Top 10 simultaneously. No duplicate work. See how Xayn achieved ISO/IEC 42001 certification using this approach.
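The shared-controls idea is essentially a many-to-many mapping from internal controls to framework requirements. The Python sketch below shows how coverage per framework can be computed from that mapping. It is not how the Modulos platform implements it: the control names and every requirement ID (EU-1, ISO-1 and so on) are hypothetical placeholders, not real clause numbers from any framework.

```python
from typing import Dict, Set

# Hypothetical catalogue: each control lists the framework requirements it
# contributes to. All requirement IDs are placeholders, not real clauses.
CONTROL_MAP: Dict[str, Dict[str, Set[str]]] = {
    "risk-assessment": {"EU AI Act": {"EU-1"}, "ISO/IEC 42001": {"ISO-1"},
                        "NIST AI RMF": {"NIST-1"}},
    "data-governance": {"EU AI Act": {"EU-2"}, "ISO/IEC 42001": {"ISO-2"}},
    "prompt-injection-testing": {"OWASP LLM Top 10": {"OWASP-1"},
                                 "NIST AI RMF": {"NIST-2"}},
}

# Hypothetical requirement sets per framework (placeholders).
REQUIREMENTS: Dict[str, Set[str]] = {
    "EU AI Act": {"EU-1", "EU-2", "EU-3"},
    "ISO/IEC 42001": {"ISO-1", "ISO-2"},
    "NIST AI RMF": {"NIST-1", "NIST-2"},
    "OWASP LLM Top 10": {"OWASP-1"},
}

def coverage(implemented: Set[str]) -> Dict[str, float]:
    """Share of each framework's requirements covered by the implemented controls."""
    covered: Dict[str, Set[str]] = {fw: set() for fw in REQUIREMENTS}
    for control in implemented:
        for fw, reqs in CONTROL_MAP.get(control, {}).items():
            covered[fw] |= reqs & REQUIREMENTS[fw]
    return {fw: len(covered[fw]) / len(REQUIREMENTS[fw]) for fw in REQUIREMENTS}

print(coverage({"risk-assessment"}))
```

Implementing the single risk-assessment control raises coverage in three frameworks at once. Even with all three controls in place, the placeholder EU-3 stays uncovered, which is the kind of gap a shared-controls view makes visible.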

Quantitative risk management. Risk is expressed in monetary terms per threat vector - not traffic lights. Management gets numbers they can act on: cost of non-compliance, exposure per system, trend over time. You can conduct a high-level risk calculation for your business for free at https://www.modulos.ai/tools/risk-calculator/

Continuous runtime inspection. After go-live, the platform continuously inspects AI system inputs, outputs, and behaviour against fairness, performance, safety, and policy thresholds. Evidence is captured over time for audit, not reconstructed after the fact.
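The core loop behind runtime inspection is checking observed metrics against thresholds and keeping a timestamped record of every check. The Python sketch below illustrates that pattern; it is not the Modulos platform's implementation, and the metric names, threshold values and EvidenceRecord structure are hypothetical assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List, Tuple

# Hypothetical thresholds: (comparison, limit). Names and values are illustrative.
THRESHOLDS: Dict[str, Tuple[str, float]] = {
    "accuracy": (">=", 0.90),
    "demographic_parity_gap": ("<=", 0.05),
    "toxicity_rate": ("<=", 0.01),
}

@dataclass
class EvidenceRecord:
    timestamp: str
    metric: str
    value: float
    passed: bool

def inspect_batch(metrics: Dict[str, float], log: List[EvidenceRecord]) -> List[str]:
    """Check one batch of runtime metrics; log every result and return breaches."""
    breaches: List[str] = []
    now = datetime.now(timezone.utc).isoformat()
    for metric, (op, limit) in THRESHOLDS.items():
        if metric not in metrics:
            continue  # metric not reported in this batch
        value = metrics[metric]
        passed = value >= limit if op == ">=" else value <= limit
        log.append(EvidenceRecord(now, metric, value, passed))
        if not passed:
            breaches.append(metric)
    return breaches

evidence: List[EvidenceRecord] = []
print(inspect_batch({"accuracy": 0.93, "demographic_parity_gap": 0.08,
                     "toxicity_rate": 0.002}, evidence))  # ['demographic_parity_gap']
```

Logging passing checks as well as failures is the point: an auditor can then see that the thresholds were monitored continuously, not only that an alert once fired.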

ISO/IEC 42001. Modulos is the first platform globally to be independently evaluated by CertX as compliant with ISO/IEC 42001. The platform meets the same standard it helps customers achieve.

© 2026 Modulos AG. All rights reserved.