AI governance frameworks compared: EU AI Act, ISO 42001 and NIST AI RMF

Short answer: three frameworks dominate AI governance today. The EU AI Act is binding law for AI systems placed on the EU market. ISO/IEC 42001 is a voluntary, certifiable management system standard. The NIST AI Risk Management Framework is a voluntary US guidance document. They were written independently but overlap heavily at the control level. Most organisations end up needing at least two. The efficient path is to run one set of atomic controls and map the evidence into each framework, rather than running three parallel programmes.

Three frameworks now dominate AI governance globally: the EU AI Act, ISO/IEC 42001, and the NIST AI Risk Management Framework. Most organisations building AI compliance programmes will need to engage with at least two of them, and many will need all three.

The three were written by different bodies for different purposes, but they target the same underlying problem: giving organisations a structured way to identify, manage, and evidence the risks of AI systems. This post compares them on the dimensions that matter for enterprise buyers, then shows where they overlap in practice and what that overlap is worth in engineering time.

AI governance frameworks at a glance

| | EU AI Act | ISO/IEC 42001 | NIST AI RMF |
|---|---|---|---|
| Type | Regulation (binding law) | Voluntary standard (certifiable) | Voluntary framework (guidance) |
| Scope | EU market, extraterritorial | Global, any organisation | US-focused, any organisation |
| Approach | Risk-based classification with specific obligations per tier | Management system (AIMS) with clauses and controls | Risk management lifecycle across four functions |
| Enforcement | Fines up to €35M or 7% of global turnover | Certification audits by accredited bodies | No enforcement; adoption is voluntary |
| Key focus | Product safety and market access | Organisational governance and accountability | Govern, Map, Measure, Manage |
| Status | In force, phased rollout through 2026–2028 | Published December 2023 | Published January 2023 |

The EU AI Act

The EU AI Act is the only one of the three that is binding law. It applies directly in all 27 EU member states and to any organisation whose AI system's output is used in the EU, regardless of where the organisation is headquartered.

The Act's architecture borrows from EU product safety legislation, specifically the frameworks used for medical devices and industrial machinery. AI systems are classified into four categories: prohibited practices, high-risk, limited-risk (transparency obligations), and minimal-risk. High-risk systems face the bulk of the obligations: risk management (Article 9), data governance (Article 10), technical documentation (Article 11), logging (Article 12), transparency to deployers (Article 13), human oversight (Article 14), accuracy and cybersecurity (Article 15), and a quality management system (Article 17). Before placing a high-risk system on the market, the provider must complete a conformity assessment, issue an EU Declaration of Conformity, affix a CE mark, and register the system in the EU database.
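As a rough illustration, the tiering and the high-risk obligations listed above can be written down as a small lookup. This is a sketch only: the names `RiskTier`, `HIGH_RISK_OBLIGATIONS`, `PRE_MARKET_STEPS`, and `checklist` are hypothetical, and the code does not attempt to decide which tier a system falls into.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited practice"
    HIGH = "high-risk"
    LIMITED = "limited-risk (transparency obligations)"
    MINIMAL = "minimal-risk"


# High-risk obligations keyed by article number, as listed in the text above.
HIGH_RISK_OBLIGATIONS = {
    9: "risk management",
    10: "data governance",
    11: "technical documentation",
    12: "logging",
    13: "transparency to deployers",
    14: "human oversight",
    15: "accuracy and cybersecurity",
    17: "quality management system",
}

# Steps the provider completes before placing a high-risk system on the market.
PRE_MARKET_STEPS = [
    "complete a conformity assessment",
    "issue an EU Declaration of Conformity",
    "affix a CE mark",
    "register the system in the EU database",
]


def checklist(tier: RiskTier) -> list[str]:
    """Return an illustrative checklist. Only the high-risk tier is modelled."""
    if tier is not RiskTier.HIGH:
        # Other tiers carry their own rules; they are simply out of scope here.
        raise NotImplementedError(f"no checklist modelled for {tier.value}")
    items = [f"Article {n}: {name}" for n, name in sorted(HIGH_RISK_OBLIGATIONS.items())]
    return items + [f"Pre-market: {step}" for step in PRE_MARKET_STEPS]


for line in checklist(RiskTier.HIGH):
    print(line)
```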

For a deeper treatment of how the Act's risk classification works in practice, see our post on EU AI Act risk categories.

ISO/IEC 42001

ISO/IEC 42001 is the first international management system standard for AI. Published in December 2023, it is voluntary but certifiable: organisations can be audited against it by an accredited certification body and issued a formal certificate of conformity.

The standard follows the Harmonised Structure used by other ISO management system standards (ISO 27001 for information security, ISO 9001 for quality, and others). It defines an AI Management System (AIMS) with clauses covering context, leadership, planning, support, operation, performance evaluation, and improvement. Annex A provides a control set specific to AI (roughly analogous to ISO 27001 Annex A for information security) covering areas like AI policies, roles and responsibilities, impact assessments, data management, system lifecycle processes, and third-party AI.

ISO 42001's value is that it is a recognised, auditable governance wrapper that can be applied regardless of jurisdiction. It does not tell you which AI systems are high-risk under a specific regulation. It tells you how to run the management system that answers that question consistently and credibly. Modulos powered the first ISO 42001 certification in Germany (Xayn, June 2025) and holds the first product conformity attestation from CertX against the standard.

For the full treatment, see our post on ISO/IEC 42001 explained.

NIST AI Risk Management Framework

The NIST AI RMF is a voluntary framework developed by the US National Institute of Standards and Technology, published in January 2023. It is not a standard and not enforceable, but it has become the de facto reference for AI risk management in the US and is widely cited in enterprise governance programmes globally.

The framework is organised around four core functions:

- Govern establishes the policies, roles, and organisational culture that make risk management possible. This is the function that sets context and authority for everything else.

- Map identifies the AI system's context, intended use, stakeholders, and risks. The output is a characterisation of what the system is, what it is supposed to do, and what could go wrong.

- Measure assesses the risks identified in Map using quantitative and qualitative methods, including testing, evaluation, verification, and validation.

- Manage prioritises risks, applies treatment strategies, and monitors the system over time.

NIST's approach is less prescriptive than either the EU AI Act or ISO 42001. It does not mandate specific controls or require certification. It gives you a vocabulary and a lifecycle model. That flexibility is both its strength and its limitation: easier to adopt, harder to point to as evidence of compliance.

For more detail, see our post on the NIST AI Risk Management Framework.

Where the three AI governance frameworks overlap

The three frameworks were written independently. Each has its own language, its own structure, and its own level of specificity. Organisations subject to more than one of them often run parallel compliance programmes, duplicating effort.

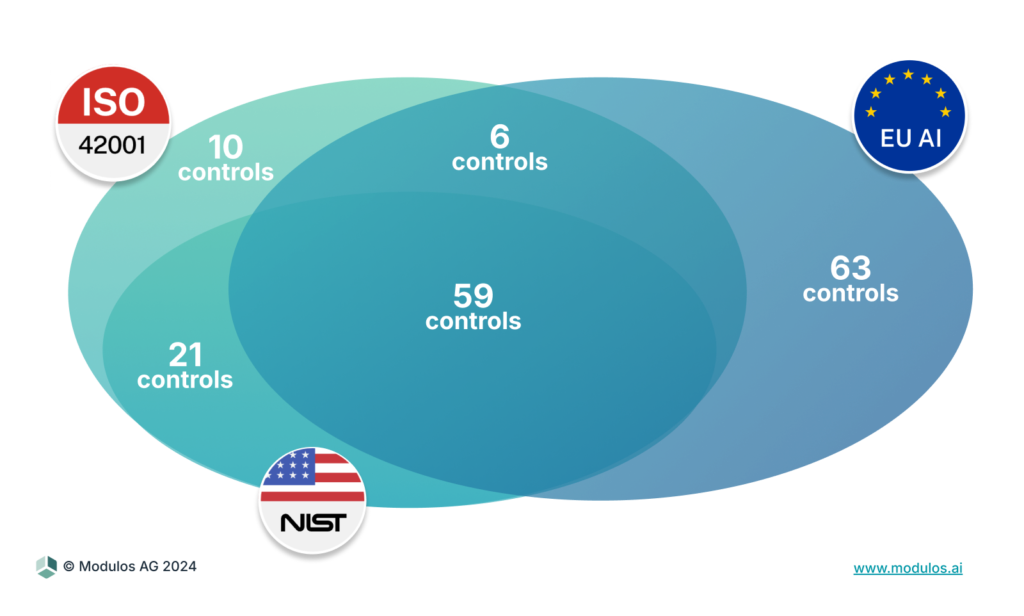

Our analysis of the three frameworks at the control level tells a different story. When you decompose each framework into atomic compliance controls (specific, practitioner-oriented tasks rather than high-level clauses), the overlap is substantial.

Across ISO 42001 (96 controls), the EU AI Act (128 controls), and NIST AI RMF (86 controls), 59 controls are shared by all three. A further 6 are shared between ISO 42001 and the EU AI Act. Only 10 controls are unique to ISO 42001, 63 to the EU AI Act, and 21 to NIST AI RMF.

What this means for an organisation moving from one framework to the next:

- Adopting ISO 42001 and then preparing for the EU AI Act: 96 controls done; 63 new controls to add for the EU AI Act instead of 128. Roughly 50% of the EU AI Act work is already covered.

- Adopting ISO 42001 and then preparing for NIST AI RMF: most of the work is already done. Fewer than 30 additional controls apply, and those are largely about lifecycle framing rather than net-new evidence.
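The arithmetic behind these figures is easy to check. The sketch below uses only the counts quoted above; the function name `reuse` is hypothetical, and the NIST figure is an upper bound that assumes only the 59 controls shared by all three frameworks carry over.

```python
# Control counts as quoted in this post.
TOTALS = {"ISO 42001": 96, "EU AI Act": 128, "NIST AI RMF": 86}
SHARED_BY_ALL_THREE = 59
SHARED_ISO_AND_EU_ONLY = 6
UNIQUE_TO_EU = 63


def reuse(total: int, still_to_do: int) -> tuple[int, float]:
    """Return (controls already covered, share of the framework covered)."""
    covered = total - still_to_do
    return covered, covered / total


# ISO 42001 first, then the EU AI Act: only the EU-unique controls are new.
covered, share = reuse(TOTALS["EU AI Act"], UNIQUE_TO_EU)
print(f"{covered} of {TOTALS['EU AI Act']} EU AI Act controls already covered ({share:.0%})")

# Cross-check: the covered controls are exactly the two shared groups.
assert covered == SHARED_BY_ALL_THREE + SHARED_ISO_AND_EU_ONLY

# ISO 42001 first, then NIST AI RMF: an upper bound on what is left to do.
nist_upper_bound = TOTALS["NIST AI RMF"] - SHARED_BY_ALL_THREE
print(f"At most {nist_upper_bound} additional NIST AI RMF controls")
```

The first line prints 65 of 128 (51%), which is where the "roughly 50%" figure comes from; the second gives 27, consistent with "fewer than 30".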

The efficiency case for a cross-framework approach is straightforward: do the work once, reuse it across frameworks, maintain one evidence repository, and run one audit cycle.

A worked example

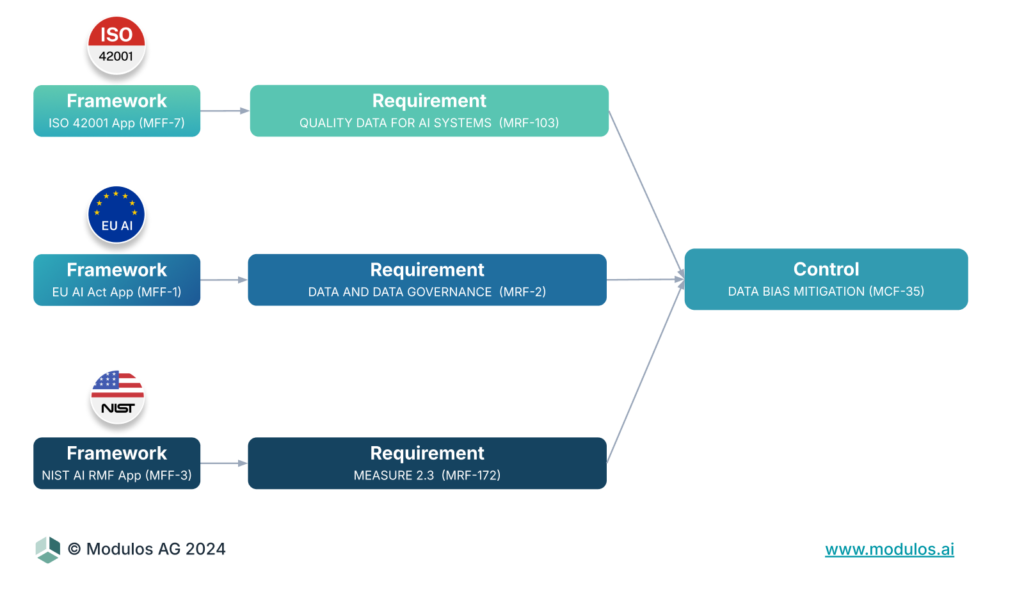

Consider control MCF-35: Data Bias Prevention and Mitigation. The requirement is to document the measures taken to manage undesired bias and their outcomes.

This single control satisfies:

- ISO 42001 Annex A requirements on AI system quality and fairness

- EU AI Act Article 10 on data governance and bias mitigation for high-risk systems

- NIST AI RMF Measure function, specifically the subcategories on bias testing

An organisation that runs bias audits once, documents the methodology, records the outcomes, and maintains the evidence file has satisfied three frameworks with one body of work. The compliance artefact is the same. Only the mapping into each framework's language differs.
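In data terms, the idea looks something like the sketch below: one control record, evidence attached once, and a mapping into each framework's language. The `Control` class, its fields, and the evidence file name are hypothetical and are not a description of any particular product's data model. The mapping strings are taken from the list above.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    control_id: str
    title: str
    # Framework name -> the requirement this control maps to.
    mappings: dict[str, str]
    # Evidence is attached once and shared by every mapping.
    evidence: list[str] = field(default_factory=list)

    def satisfied_frameworks(self) -> list[str]:
        """Frameworks covered once at least one piece of evidence is attached."""
        return sorted(self.mappings) if self.evidence else []


mcf_35 = Control(
    control_id="MCF-35",
    title="Data Bias Prevention and Mitigation",
    mappings={
        "ISO/IEC 42001": "Annex A requirements on AI system quality and fairness",
        "EU AI Act": "Article 10 (data governance and bias mitigation)",
        "NIST AI RMF": "Measure function, bias-testing subcategories",
    },
)

print(mcf_35.satisfied_frameworks())  # nothing satisfied before evidence exists

mcf_35.evidence.append("bias-audit-report.pdf")  # hypothetical evidence file
print(mcf_35.satisfied_frameworks())  # all three frameworks, from one artefact
```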

How to sequence adoption of AI governance standards

Organisations that are serious about AI governance usually end up needing more than one framework, and often all three. The question is less which one to adopt than in what order.

For organisations selling or deploying AI in the EU market, the EU AI Act is the binding floor. You do not choose to comply with it. Start there for any system that meets the high-risk criteria.

For organisations that want an auditable, certifiable governance wrapper, add ISO 42001. A certificate from an accredited body is externally verifiable in a way that self-attested compliance is not. ISO 42001 is particularly valuable in procurement contexts where your customers need evidence your AI governance is real.

For US-focused organisations without direct EU market exposure, NIST AI RMF is the most natural starting point. It is free, flexible, and increasingly referenced by US federal agencies and enterprise procurement.

For organisations needing more than one framework, the sequence that minimises total effort is: ISO 42001 first as the governance wrapper, then the EU AI Act obligations layered on for in-scope systems, with NIST AI RMF mapping in largely for free.
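The sequencing guidance in this section can be condensed into a few lines of logic. This is a simplified sketch: the function `recommend_sequence` and its three yes/no inputs are hypothetical, and a real decision would also depend on which systems are in scope and on what timeline.

```python
# Order recommended in this section when more than one framework is needed.
MULTI_FRAMEWORK_ORDER = ["ISO/IEC 42001", "EU AI Act", "NIST AI RMF"]


def recommend_sequence(eu_market: bool, wants_certificate: bool, us_focused: bool) -> list[str]:
    """Return the frameworks an organisation needs, in the suggested order."""
    needed = set()
    if eu_market:
        needed.add("EU AI Act")  # binding floor for the EU market
    if wants_certificate:
        needed.add("ISO/IEC 42001")  # auditable, certifiable wrapper
    if us_focused:
        needed.add("NIST AI RMF")  # natural starting point without EU exposure
    return [name for name in MULTI_FRAMEWORK_ORDER if name in needed]


print(recommend_sequence(eu_market=True, wants_certificate=True, us_focused=True))
print(recommend_sequence(eu_market=False, wants_certificate=False, us_focused=True))
```

With a single framework in play the function simply returns it; the ordering only matters once two or more apply.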

Frequently asked questions

What are the main AI governance frameworks? Three dominate today: the EU AI Act (binding law for the EU market), ISO/IEC 42001 (voluntary, certifiable international management system standard), and the NIST AI Risk Management Framework (voluntary US guidance). Most enterprise programmes touch at least two.

What is the difference between the EU AI Act and ISO 42001? The EU AI Act is a regulation that classifies AI systems by risk and imposes specific obligations on high-risk ones, including conformity assessment and CE marking. ISO 42001 is a management system standard that defines how an organisation governs its AI activities. They operate at different levels. A well-run ISO 42001 management system produces most of the evidence the EU AI Act asks for.

What is the difference between ISO 42001 and NIST AI RMF? ISO 42001 is a certifiable management system with clauses and controls. NIST AI RMF is a voluntary lifecycle-oriented guidance document with four functions (Govern, Map, Measure, Manage). ISO is auditable and gets you a certificate. NIST gives you a vocabulary and a lifecycle model.

Do I need all three AI governance frameworks? Most enterprises selling AI into both the EU and US markets end up needing all three. The efficient path is one control taxonomy that satisfies all three, not three parallel programmes.

How much do AI governance frameworks overlap? At the control level, 59 controls are shared across all three frameworks (out of 96 for ISO 42001, 128 for the EU AI Act, and 86 for NIST AI RMF). A further 6 are shared between ISO 42001 and the EU AI Act. Only 10 controls are unique to ISO 42001, 63 to the EU AI Act, and 21 to NIST AI RMF.

Which AI governance framework should I start with? For EU market exposure: EU AI Act is the floor. For auditable credibility across markets: ISO 42001. For US-focused programmes without EU exposure: NIST AI RMF. For organisations that will need more than one, ISO 42001 first is usually the efficient starting point.

What Modulos does with this

The Modulos AI governance platform is built on the control-level taxonomy this analysis uses. Organisations manage one set of atomic controls, assign evidence once, and see which frameworks are satisfied in real time. When a new regulation or standard arrives, the new requirements are mapped into the existing taxonomy rather than requiring a parallel programme.

For a practical introduction to running an AI governance programme across frameworks, see our guide to AI governance.