AI compliance in 2026: what changed, what's required, where to start

AI compliance in 2026 is categorically different from AI compliance in 2024. The EU AI Act moved from draft to enforceable law. Major carriers started excluding AI liability from corporate policies. Financial supervisors published AI-specific guidance and began training on how to audit it. OWASP published the Top 10 for Agentic applications, and the first legal paper arguing that high-risk agentic systems with untraceable drift cannot currently be placed on the EU market appeared.

Any one of those shifts would be material. Taken together, they made the 2024 version of most AI compliance programs obsolete.

This is the state of AI compliance in 2026. The purpose is to be correct about what actually changed, where programs break, and what to do about it, rather than to be exhaustive.

"Wait and see" is the single highest-risk compliance strategy on the board today. The Brussels Effect did not wait. Neither should you.

AI compliance in 2026, defined

AI compliance is a continuous operational posture demonstrated to regulators, insurers, and buyers.

The difference between a posture and a checklist is what can be seen from outside the organisation on any given week. A checklist shows you a set of ticked boxes from the last audit. A posture shows you what your AI is doing right now, what risks it currently carries, what controls are currently operating, what evidence has been generated in the last 30 days, what incidents have been opened and closed, who approved what, and where the stop authority sits.

The defining line, borrowed from the compliance literature but sharpened by 18 months of conversations with supervisors and auditors: compliance is a state of your organisation. A compliance deliverable is what a consultancy produces. A compliance state is what your organisation operates.

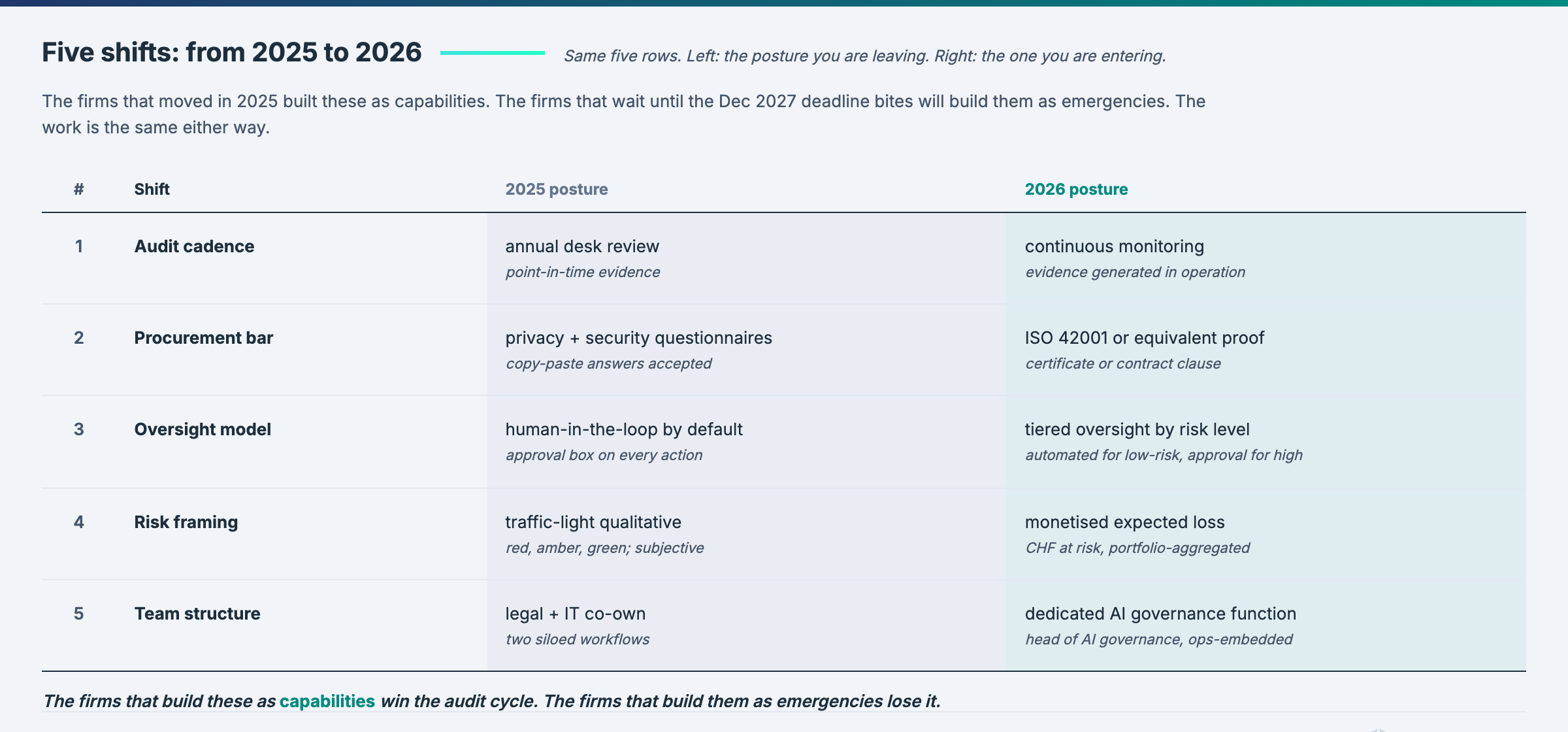

The organisations that have understood this are running AI in 2026 with approximately the same discipline they run their financial controls. The organisations that have not are discovering that their AI risk cannot be defended in the same conversations.

What changed in the last twelve months

Five shifts redefined the landscape; any one would be material.

Shift 1. The EU AI Act moved from future to present

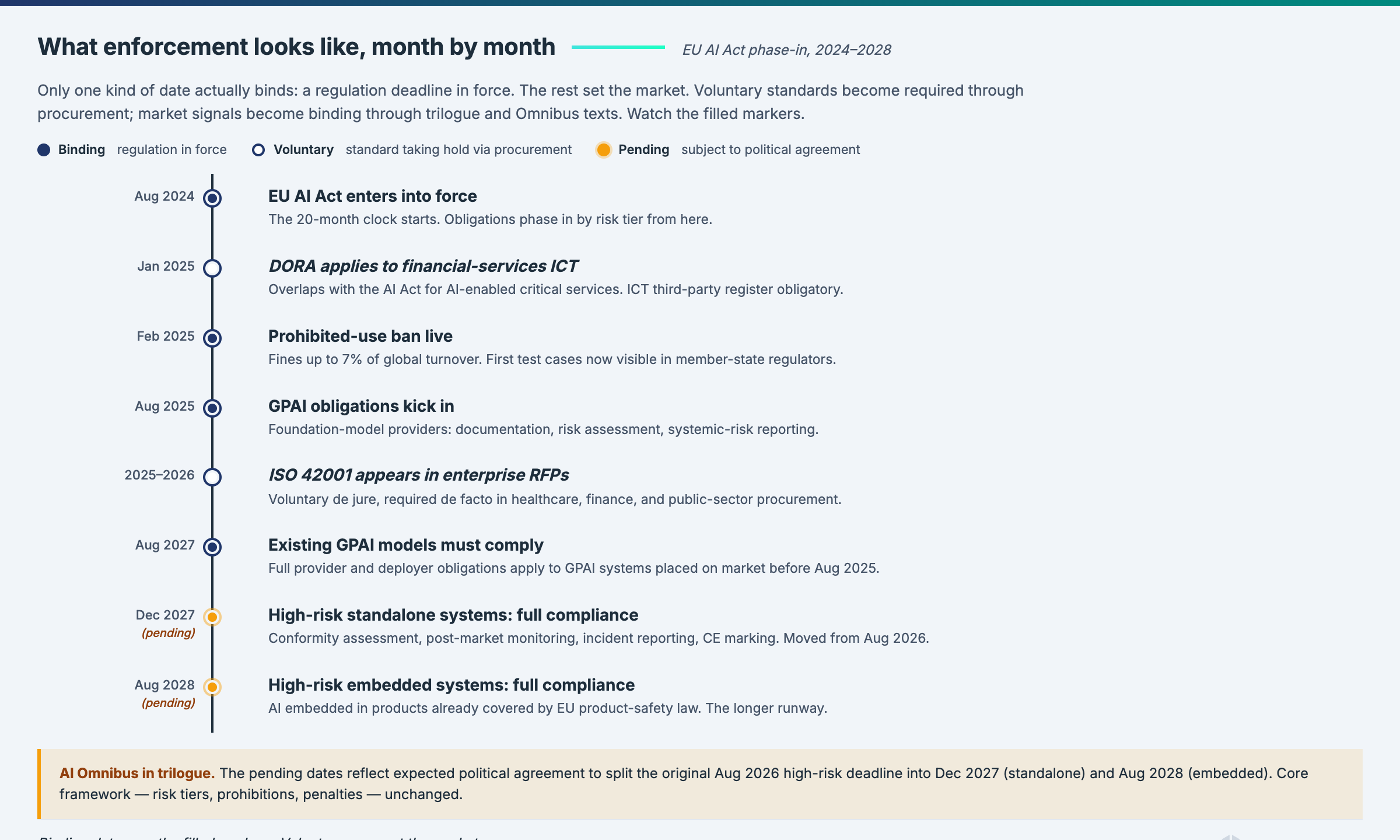

The prohibitions on certain AI practices began to apply in February 2025. General-purpose AI obligations began to apply in August 2025 for new models, with models already on the market following in August 2027. High-risk system obligations are next, with a baseline date of August 2026 and the Digital Omnibus proposal moving parts of this to December 2027 in certain configurations.

The Omnibus trilogue is active. Deadlines are moving. The obligations are not.

What this means operationally: the planning window for high-risk systems has shortened, regardless of whether specific deadlines shift. Building governance infrastructure takes 12 to 18 months. That is longer than the remaining time to the earliest compliance dates, even under generous Omnibus scenarios. The question is not when the deadline falls. The question is whether your infrastructure is ready.

Shift 2. Insurance became the forcing function

Major carriers started excluding AI liability from corporate policies during 2025 and early 2026. Cyber insurance went through the equivalent transition from 2019 to 2021: first the carriers excluded ransomware-related losses under certain conditions, then they started requiring specific controls before offering coverage at all, then they tied premiums to the quality of the controls.

The AI compliance analogue is in motion. Once liability is excluded at the corporate level, the CFO and the general counsel become primary stakeholders in AI governance. The procurement side becomes explicit: the organisation cannot accept AI risk it cannot insure. Governance programs that were underfunded in 2024 get funded in 2026 because the insurance signal is sharper than the regulatory signal.

This is also the reason quantified risk in monetary terms has stopped being optional. Insurers do not negotiate over traffic lights. They underwrite quantified risk.
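To make "quantified risk" concrete, here is a minimal Python sketch of one common approach: a Monte Carlo estimate of annual loss for a single scenario. It is an illustration, not the method any insurer or platform uses. The function name, the incident probability, the median loss, and the lognormal severity model are all assumptions chosen for the example.

```python
import math
import random
import statistics


def simulate_annual_loss(p_incident, median_loss_eur, sigma, n_years=20_000, seed=7):
    """Monte Carlo estimate of annual loss for one hypothetical risk scenario.

    Assumptions (illustrative only): at most one incident per year, occurring
    with probability p_incident; loss size is lognormal with the given median
    and log-scale spread sigma.
    """
    rng = random.Random(seed)        # fixed seed so the estimate is reproducible
    mu = math.log(median_loss_eur)   # the median of a lognormal is exp(mu)
    losses = sorted(
        rng.lognormvariate(mu, sigma) if rng.random() < p_incident else 0.0
        for _ in range(n_years)
    )
    return {
        "expected_eur": statistics.fmean(losses),
        "p05_eur": losses[int(0.05 * n_years)],  # 5th percentile of simulated years
        "p95_eur": losses[int(0.95 * n_years)],  # 95th percentile of simulated years
    }


# Hypothetical scenario: 10% annual chance of an incident, median loss EUR 200,000.
estimate = simulate_annual_loss(p_incident=0.10, median_loss_eur=200_000, sigma=1.0)
print({name: round(value) for name, value in estimate.items()})
```

The output is an expected annual loss in euros plus a 5th to 95th percentile range of simulated years. That is the kind of figure that can be compared across risks, which a red/amber/green rating cannot.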

Shift 3. Supervisors moved from principles to operational expectations

Across jurisdictions, financial supervisors have spent the last twelve months publishing AI-specific guidance that sets concrete operational expectations, running surveys on actual AI adoption, and building internal capability to audit it. The common thread is that AI risk is being pulled inside existing supervisory frameworks (ICT risk, operational resilience, model risk) rather than left as a standalone ethics topic, and the expected evidence is the operating control, not the policy document.

Switzerland (FINMA). FINMA Guidance 08/2024 set out governance and risk-management expectations for AI use at supervised institutions, covering roles, risk classification, testing, explainability, and third-party AI. FINMA surveyed around 400 institutions between late 2024 and early 2025: roughly half already use AI or have applications in development, and the regulator has signalled it will refine expectations further under its 2025-2028 strategic goals, on a "same business, same risks, same rules" basis. A Swiss federal AI consultation draft is due by end of 2026.

Germany and the Eurozone (BaFin / ECB SSM). BaFin published "Guidance on ICT Risks in the Use of AI at Financial Entities" in December 2025. The 35-page document pulls AI use squarely into the DORA ICT risk-management regime for CRR institutions and Solvency II insurers. The explicit message is that AI is no longer an innovation or ethics topic but part of ICT risk, third-party risk, testing, and governance under existing supervisory authority. ECB-level supervision under the SSM is aligned with the same framing.

United Kingdom (FCA / PRA / Bank of England). The UK supervisors have been explicit that AI will be overseen through existing frameworks rather than bespoke AI rules, with the PRA and FCA rolling supervisory expectations forward. The FCA launched the Mills Review into AI in retail financial services in January 2026, with recommendations due to the Board in summer 2026. The Treasury Committee has recommended that the FCA publish comprehensive AI guidance for firms by end of 2026 and that the Bank of England and FCA introduce AI-specific stress testing.

Singapore (MAS). MAS consulted on Guidelines on AI Risk Management for financial institutions from November 2025 to January 2026. The Guidelines set high-level supervisory expectations on AI risk management systems, policies, lifecycle controls, and the capacity needed to operate AI responsibly. This builds on the FEAT principles and Veritas toolkit programme that MAS has been developing with a consortium of major banks since 2018.

European Union (Supervisory Digital Finance Academy). The EU's Supervisory Digital Finance Academy ran its first AI governance cohort for supervisors in early 2026, training on how to read a conformity assessment, how to test a risk quantification methodology, how to audit a control implementation, and how to evaluate whether human oversight actually functions. Modulos CEO Kevin Schawinski delivered the operational governance module. Supervisors asked engineering-level questions; they want to see control evidence, not policy documents.

Gulf region (CBUAE and others). The Central Bank of the United Arab Emirates published its AI Guidance Note in February 2026, aligning with EU supervisory expectations for financial-sector AI and adding specific requirements including an Article 6(f) immediate-stop capability. Equivalent supervisory activity is underway across the wider region.

The pattern across jurisdictions is the same. Document-first strategies are not going to hold. Supervisors will ask for operating evidence, and they will increasingly know what good looks like.

Shift 4. Agents arrived and broke the assumptions

The OWASP Top 10 for Agentic applications was published in December 2025. The Nannini, Smith, and Tiulkanov paper (April 2026) put the sharpest legal frame on the situation: high-risk agentic AI systems with untraceable behavioural drift cannot currently be placed on the EU market.

This is a current legal reading.

Most existing governance frameworks were written for static models. Their assumptions about cybersecurity (the endpoint is the perimeter), oversight (human review in the loop), transparency (the affected person is the recipient), and conformity (the system stays within assessed boundaries) all break for agents. OWASP Top 10 for Agentic applications exists specifically to catalogue the attack surfaces agents create.

Operational consequence: if your governance program does not have an agent inventory, agent-specific controls, and mapping to OWASP Agentic, you are governing 2024 systems under 2026 deployment conditions. The gap is not theoretical. It shows up in conformity assessments.

Shift 5. The Brussels Effect carried the EU framework globally

The most ambitious US federal AI bill on the table reads like the EU AI Act ported into US political vocabulary. Canada's proposed AIDA mirrored the high-risk classification. Singapore's Model AI Governance Framework, updated in 2024, aligned with EU transparency requirements. Brazil's draft regulation follows the four-gates structure. The CBUAE AI Guidance Note published in February 2026 aligns with EU supervisory expectations.

Companies that thought they were opting out of EU regulation by not selling into Europe are wrong about the landscape. The landscape came to them. Compliance programs built for the EU AI Act now count in a dozen other jurisdictions, and compliance programs built for a single non-EU jurisdiction need to absorb EU-shaped expectations.

The unit of analysis is no longer "which jurisdiction is my AI deployed in" but "which framework stack describes the obligations I am accumulating across the jurisdictions I operate in".

What is required today, framework by framework

Brief, with links to the deep-dive posts for each.

EU AI Act. The four gates: prohibited practices (Article 5), general-purpose AI (Chapter V), high-risk systems (Chapter III, Articles 6 through 49, plus Annex III), and transparency obligations for certain AI systems (Article 50). If you operate AI in the EU market, all four apply. Deadlines vary by gate. For the deep dive on the four gates and what each requires, see the Brussels Effect stack post.

ISO 42001. The AI management system standard. Certifiable through accredited bodies. Not a substitute for AI Act compliance, but the natural scaffolding for it, and prEN 18286 is under development as the harmonised bridge. See the ISO 42001 certification post for the realistic timeline and cost.

NIST AI RMF. Voluntary, but adopted by US financial institutions as a baseline and increasingly by European teams as the engineering spec for risk management. Govern / Map / Measure / Manage. See the NIST AI RMF post for the engineering-spec framing.

Sectoral frameworks. DORA for EU financial services operational resilience. NIS2 for critical infrastructure cybersecurity. GDPR for data protection, now interpreted with AI-specific considerations. CBUAE AI Guidance Note for UAE banking. QCB guidance for Qatar. FINMA communications for Switzerland. MAS FEAT for Singapore. Each adds specific obligations on top of the horizontal frameworks.

Application-specific frameworks. OWASP Top 10 for LLM for large language model security. OWASP Top 10 for Agentic applications for agent-specific risks. Both should be in the control graph of any organisation operating those system types.

Modulos supports 13+ frameworks in one shared control graph. The practical consequence is that implementing a control once produces evidence that counts across multiple frameworks, reducing total compliance effort by 40 to 60% compared with running each program independently.
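The idea of a shared control graph can be sketched in a few lines of Python. This is a toy illustration, not the Modulos data model. The control names are hypothetical, and the mapping of controls to articles, clauses, and functions is an assumption made for the example rather than an authoritative crosswalk.

```python
from collections import defaultdict

# Hypothetical controls, each mapped to the (framework, requirement) pairs it
# is assumed to support. The mapping is illustrative only.
CONTROL_GRAPH = {
    "risk-management-process": {
        ("EU AI Act", "Art. 9"),
        ("ISO/IEC 42001", "clause 6.1"),
        ("NIST AI RMF", "MANAGE"),
    },
    "human-oversight-and-stop": {
        ("EU AI Act", "Art. 14"),
        ("NIST AI RMF", "GOVERN"),
    },
    "event-logging": {
        ("EU AI Act", "Art. 12"),
        ("NIST AI RMF", "MEASURE"),
    },
}


def requirements_by_framework(graph):
    """Invert the graph: which requirements does each framework see covered?"""
    covered = defaultdict(set)
    for requirements in graph.values():
        for framework, requirement in requirements:
            covered[framework].add(requirement)
    return dict(covered)


def reuse_ratio(graph):
    """Share of implementations avoided versus one implementation per requirement."""
    independent = sum(len(requirements) for requirements in graph.values())
    shared = len(graph)  # each control is implemented once
    return 1 - shared / independent


print(requirements_by_framework(CONTROL_GRAPH))
print(f"reuse ratio: {reuse_ratio(CONTROL_GRAPH):.2f}")
```

The ratio printed here is an artefact of the made-up mapping and says nothing about real savings. What the sketch shows is the mechanism: evidence attached to one control is visible from every framework that control maps to.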

Where compliance programs typically break in 2026

Five failure modes recur across organisations preparing for 2026.

No agent inventory. The AI estate contains autonomous agents with tool use and memory. The inventory contains only registered models. Everything else is ungoverned. This is the fastest-growing gap in the landscape.

Qualitative risk only. Red / amber / green or 5x5 matrices, no monetary quantification. Prioritisation happens by political negotiation inside the organisation. Insurers, regulators, and increasingly CFOs do not accept this.

ISO 42001 treated as AI Act compliance. An ISO 42001 certificate says the organisation has a management system. It does not say a specific AI system conforms to Article 9. Conflating the two is the category error that keeps costing audits.

"Wait and see" as strategy. The Omnibus will move deadlines. The framework versions will change. That is not the question. The question is whether the organisation is building infrastructure that absorbs whatever trilogue produces, or gaming version numbers and hoping for the best. Infrastructure lead time is longer than the legislative schedule. Delay always costs more than it saves.

Buying compliance automation when what is needed is governance automation. The category is splitting. One side produces documents (the category that just had its SOC 2 Theranos moment). The other connects to the engineering stack, verifies deployment, and produces evidence. Buyers that cannot tell the difference will be retrofitting in 18 months.

Why "wait and see" is the single highest-risk strategy

The argument gets flattened in most compliance writing, so it is worth being specific.

The "wait and see" posture is usually rationalised as follows: the Omnibus will move deadlines. Framework versions will change. Therefore we should delay substantive investment until the requirements are final.

The flaw in the argument is a timing mismatch. The deadlines that move are the compliance dates. The infrastructure lead times for building governance capability (inventory, control graph, evidence collection, risk quantification, review workflow, agent coverage) are 12 to 18 months to reach steady state. That is longer than the typical deadline shift. Even if a deadline moves from August 2026 to December 2027, the organisation that starts building in mid-2026 and needs the full 18 months does not make December 2027.
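The timing argument is simple calendar arithmetic, sketched below in Python. The start date (July 2026, standing in for "mid-2026") and the helper names are assumptions for illustration. The 12 and 18 month lead times and the December 2027 date come from the text above.

```python
from datetime import date


def months_between(start: date, end: date) -> int:
    """Whole calendar months from start to end, ignoring the day of the month."""
    return (end.year - start.year) * 12 + (end.month - start.month)


def slack_months(start: date, deadline: date, lead_time_months: int) -> int:
    """Months to spare (negative means the build finishes after the deadline)."""
    return months_between(start, deadline) - lead_time_months


start = date(2026, 7, 1)      # assumed reading of "mid-2026"
deadline = date(2027, 12, 1)  # the shifted date discussed above

for lead_time in (12, 18):
    print(lead_time, "month build ->", slack_months(start, deadline, lead_time), "months of slack")
```

The result is sensitive to where in the 12 to 18 month range an organisation actually lands and to how long it waits before starting. That is the reason to run the numbers rather than assume an extension is enough.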

The second flaw is selection bias. The organisations that have been building since 2023 and 2024 are already in steady state. The organisations still running the "wait and see" calculation will arrive at the new deadline, whenever it is, with the same amount of time to build as if the deadline had not moved, because they did not use the extension.

The third flaw is regulatory reading. The Omnibus is an efficiency reform, not a rollback. Its stated purpose is to reduce administrative friction on the way to the same substantive obligations. "Wait and see" reads it as a weakening of the Act. The text does not support that reading.

The fourth flaw is insurance. The insurance market is moving on its own schedule, independent of the Act. Insurance-driven forcing functions do not pause for the Omnibus.

The result is that "wait and see" looks like prudence and behaves like denial. The organisations that adopt it will pay more, later, with less time.

The 90-day practical roadmap

Framed as phases, not months, so the advice does not age. The phases are the same even if the calendar shifts.

Phase 1: Inventory (weeks 1 to 3)

Build the complete AI inventory. Registered models, autonomous agents, SaaS AI features in vendor products, shadow usage by employees, shadow inference endpoints. Each category requires a different detection instrument. Expect the first honest inventory to be 3 to 5 times larger than the registered-model count.
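A minimal sketch of what an inventory record and a first summary could look like, in Python. The class names, fields, and sample entries are hypothetical. The categories are the ones listed above.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    REGISTERED_MODEL = "registered model"
    AUTONOMOUS_AGENT = "autonomous agent"
    SAAS_AI_FEATURE = "SaaS AI feature"
    SHADOW_USAGE = "shadow usage"
    SHADOW_ENDPOINT = "shadow inference endpoint"


@dataclass(frozen=True)
class AISystem:
    name: str
    category: Category
    owner: str


def inventory_summary(systems):
    """Count systems per category and compare the total with registered models."""
    counts = Counter(system.category for system in systems)
    registered = counts[Category.REGISTERED_MODEL]
    ratio = len(systems) / registered if registered else None
    return counts, ratio


# Made-up entries for illustration.
inventory = [
    AISystem("credit-scoring-v3", Category.REGISTERED_MODEL, "risk"),
    AISystem("churn-predictor", Category.REGISTERED_MODEL, "marketing"),
    AISystem("invoice-triage-agent", Category.AUTONOMOUS_AGENT, "finance-ops"),
    AISystem("crm-email-assistant", Category.SAAS_AI_FEATURE, "sales"),
    AISystem("personal-chatbot-use", Category.SHADOW_USAGE, "unassigned"),
    AISystem("team-llm-proxy", Category.SHADOW_ENDPOINT, "unassigned"),
]

counts, ratio = inventory_summary(inventory)
print(counts)
print("total / registered:", ratio)
```

Tracking the ratio of total systems to registered models gives a quick check on whether the inventory has moved beyond the model registry.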

Phase 2: Classify (weeks 3 to 5)

Classify each system against every framework in scope. EU AI Act gate, ISO 42001 application scope, NIST AI RMF risk category, sectoral frameworks. Quantify the risk for each system in monetary terms. This is where the platform pays back. A platform that maintains a shared control graph classifies once and propagates to every framework.

Phase 3: Gap analysis (weeks 5 to 7)

For each system and each framework in scope, identify the requirements already satisfied, partially satisfied, and not satisfied. The output is a gap register. Each gap has a monetised treatment cost estimate and a risk exposure estimate. Prioritise by risk reduction per euro of treatment cost, highest first.
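The prioritisation step can be sketched as a simple sort in Python. The gap identifiers and euro figures are made up, and the field names are assumptions. The sketch also assumes every treatment cost is greater than zero.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    gap_id: str
    treatment_cost_eur: float   # estimated cost to close the gap (assumed > 0)
    risk_reduction_eur: float   # expected annual exposure removed by closing it

    @property
    def reduction_per_euro(self) -> float:
        return self.risk_reduction_eur / self.treatment_cost_eur


def prioritise(gaps):
    """Order gaps by risk reduction per euro spent, best value first."""
    return sorted(gaps, key=lambda gap: gap.reduction_per_euro, reverse=True)


# Hypothetical gap register.
register = [
    Gap("no-agent-logging", treatment_cost_eur=200_000, risk_reduction_eur=600_000),
    Gap("missing-model-cards", treatment_cost_eur=20_000, risk_reduction_eur=30_000),
    Gap("no-stop-procedure", treatment_cost_eur=50_000, risk_reduction_eur=400_000),
]

for gap in prioritise(register):
    print(gap.gap_id, round(gap.reduction_per_euro, 1))
```

Ranking on a single ratio is deliberately crude. In practice, hard legal deadlines and dependencies between gaps would also constrain the order.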

Phase 4: Evidence plan (weeks 7 to 9)

For each control in the graph, define the evidence source. Connect the platform to GitHub, GitLab, Jira, Google Drive, SharePoint, the runtime monitoring system. Schedule runtime tests for the governance-relevant properties of each system: performance, bias, security, drift. Wire test results back to the controls they satisfy.
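As a rough sketch of the evidence plan, the Python below models controls with their evidence sources and scheduled tests, flags controls that have no source yet, and attaches a test result to the controls it supports. It makes no real connections to GitHub, Jira, or any monitoring system. The control names and structure are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    control_id: str
    evidence_sources: list = field(default_factory=list)  # e.g. "GitHub", "Jira"
    scheduled_tests: list = field(default_factory=list)   # e.g. "bias", "drift"
    results: dict = field(default_factory=dict)           # test name -> passed?


def controls_missing_evidence(controls):
    """Controls for which no evidence source has been defined yet."""
    return [c.control_id for c in controls if not c.evidence_sources]


def record_test_result(controls, test_name, passed):
    """Attach a result to every control that lists test_name; return their ids."""
    touched = []
    for control in controls:
        if test_name in control.scheduled_tests:
            control.results[test_name] = passed
            touched.append(control.control_id)
    return touched


# Hypothetical evidence plan.
plan = [
    Control("bias-monitoring", ["runtime monitoring"], ["bias"]),
    Control("change-approval", ["GitHub", "Jira"]),
    Control("drift-detection", [], ["drift"]),
]

print("no evidence source:", controls_missing_evidence(plan))
print("updated by drift test:", record_test_result(plan, "drift", passed=True))
```

Running the missing-evidence check at the end of this phase gives a concrete exit criterion: the list should be empty before the cadences are switched on.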

Phase 5: Operate (weeks 9 to 12)

Turn on the cadences: governance committee meetings, control reviews, risk quantification updates, incident drills, audit preparation. Produce the first audit-ready report. Run a retrospective. Adjust.

By the end of 90 days, the organisation has a live inventory, a shared control graph, an evidence stream, quantified risk, and the operational cadences to maintain the program. This is the starting line for continuous governance.

Insurance as the forcing function most teams are missing

The insurance signal is more reliable than the regulatory signal, and most compliance programs are under-weighting it.

Regulators operate on legislative timelines. They publish guidance, run consultations, phase in obligations over years, and carry the political weight of their jurisdictions. Insurers operate on underwriting timelines. They set premiums based on loss experience. When the loss experience changes, premiums change, and coverage tightens. There is no consultation period.

In AI, the loss experience is changing. Large language models have produced defamation, discrimination, and disclosure failures with litigation that reached settlement or judgement. Agentic systems have executed financial and operational actions that produced corporate liability. Data handling violations have produced regulatory fines. Insurers see the claim data. Their response is moving coverage.

Once liability is excluded at the corporate level, three things happen in sequence. The CFO becomes a stakeholder in AI governance because the risk is no longer transferred. The general counsel becomes a stakeholder because the company is now self-insured for AI losses. The procurement function becomes a stakeholder because AI vendor risk is now residual.

That is when compliance programs get funded: when the insurer sends the exclusion notice.

If you are building a business case for AI governance investment today, the insurance signal is the most credible external reference you have. Regulators will tell you what is required eventually. Insurers are telling you the cost of getting it wrong right now.

What good looks like in 2026

The end-state description. The operational picture an external reviewer (regulator, auditor, insurer, enterprise customer) should see if they audited your AI program today.

A live inventory of AI systems, including agents and shadow AI. Updated weekly at minimum. The inventory knows what is deployed, who owns it, what frameworks apply, and what risks it carries.

Risk quantified in monetary terms. Every risk in the register has an expected impact in euros (or operating currency), a confidence interval, and a scenario. Traffic-light dashboards are residual, not primary.

A shared control graph across the frameworks in scope, so that one control implementation satisfies requirements in EU AI Act, ISO 42001, NIST AI RMF, GDPR, NIS2, DORA, and the sectoral and application-specific frameworks that apply. The 40 to 60% efficiency gain from the shared graph is already realised.

Runtime Inspection tests running on a schedule and linked back to the controls they satisfy as automated evidence. Sources include Prometheus, Datadog, the Modulos Client, GitHub, and Azure. Tests cover bias, performance, security, drift, and the other governance-relevant properties.

Evidence collected at the source from the engineering stack: GitHub, GitLab, Jira, Google Drive, SharePoint. Not typed up after the fact. Not uploaded as screenshots. Produced by the systems that run.

Framework versioning that tracks regulatory change so the Omnibus trilogue, or any other deadline shift, does not silently invalidate six months of work. The platform notifies when versions change and flags which controls and requirements are affected.

Human-in-the-loop AI assistance that drafts, with humans approving through the same review workflow as non-AI work. Scout, Evidence agent, and Control assessment agent produce suggestions. Humans approve. The audit trail preserves the distinction.

Incident response and immediate-stop workflows wired into the deployment pipeline, with the stop authority defined and auditable. CBUAE Article 6(f) compliance for UAE operations. EU AI Act Article 14 operationalised, not just documented.

Board-level accountability. A named executive with personal responsibility for AI governance, reporting quarterly to the board, with authority to stop deployments.

An organisation that operates this way passes audits without retrofit, receives insurance coverage on reasonable terms, satisfies enterprise customer diligence, and absorbs regulatory changes without crisis. That is what good looks like. It is achievable. It is not where most organisations are today.
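The framework-versioning item above can be illustrated with a small Python sketch: compare two versions of a framework, then look up which controls touch a changed requirement. The requirement ids, version labels, and control names are placeholders, and this is not a description of how any particular platform implements the feature.

```python
def changed_requirements(old, new):
    """Requirement ids that were added, removed, or changed between versions."""
    return {rid for rid in old.keys() | new.keys() if old.get(rid) != new.get(rid)}


def affected_controls(control_graph, changed):
    """Controls mapped to at least one changed requirement, in sorted order."""
    return sorted(
        control for control, requirements in control_graph.items()
        if requirements & changed
    )


# Placeholder framework versions: requirement id -> revision label.
framework_v1 = {"REQ-1": "rev-a", "REQ-2": "rev-a", "REQ-3": "rev-a"}
framework_v2 = {"REQ-1": "rev-a", "REQ-2": "rev-b", "REQ-4": "rev-a"}

# Placeholder control graph: control -> requirement ids it satisfies.
controls = {
    "event-logging": {"REQ-1"},
    "human-oversight": {"REQ-1", "REQ-2"},
    "risk-process": {"REQ-3"},
}

changed = changed_requirements(framework_v1, framework_v2)
print(sorted(changed))
print(affected_controls(controls, changed))
```

A new requirement with no mapped control, such as REQ-4 here, appears in the changed set but in no control's list. That is itself a gap worth flagging.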

FAQ

What is the difference between AI governance and AI compliance? Compliance is the state of meeting specific requirements. Governance is the ongoing organisational capability that produces and maintains compliance. You can pass an audit without governance, but you will not pass the next one. Governance is the structural answer. Compliance is the observable result.

Is the EU AI Act's high-risk deadline going to move to December 2027? The Digital Omnibus proposal (introduced late 2025, now in trilogue) contains provisions that would move certain high-risk obligations to December 2027. The trilogue is active. Whether and how the date moves depends on negotiation. Plan for the infrastructure required regardless of deadline, because infrastructure lead time exceeds any plausible deadline shift.

How much does AI compliance cost? For a mid-size enterprise with a substantive AI estate, total annual cost typically runs €500,000 to €3 million, combining platform, internal headcount, external audits, legal advice, and insurance. Variance is wide depending on estate size, framework scope, and jurisdictional exposure. The cost of non-compliance under the EU AI Act ranges up to 35 million euros or 7% of global annual turnover, whichever is higher, for the most serious violations.

Can we handle AI compliance with our existing GRC platform? Probably not, unless the GRC platform has AI-native capabilities: agent inventory, monetary risk quantification, evidence collection from the engineering stack, continuous runtime testing, and framework versioning. Most generic GRC platforms were built for financial controls and information security. Their "AI modules" tend to be questionnaires.

Where should a small startup start? Start with the inventory. A startup with 2 to 5 AI systems can build the initial inventory in a week. Classify against the EU AI Act first (if you have EU exposure), then layer ISO 42001 and NIST AI RMF. Invest in the platform early, because retrofitting infrastructure later is expensive.

How do we train our staff on AI compliance? Role-based training. Engineers need to know the specific obligations that affect the systems they build. Product owners need to know classification logic. The governance committee needs to know cross-framework implications. Leadership needs to know the strategic picture. Generic "AI ethics" training is not sufficient.

What should I tell my board about AI compliance? Three things. One, our AI estate is X systems, classified as follows, with quantified risk of Y euros expected annualised. Two, our governance infrastructure status against the frameworks in scope is Z percent complete with these gaps and these treatment plans. Three, our insurance coverage for AI liability is [current status], and the trajectory of the market is [exclusions / reduced coverage / specific controls required].
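For the board conversation, the penalty ceiling quoted in the cost answer above can be turned into a number for a given company. The Python sketch below covers only the top tier of fines, the one for prohibited practices, and takes the higher of the two amounts. The function name and turnover figures are hypothetical. Lower tiers and the separate treatment of SMEs are not modelled.

```python
def max_fine_top_tier_eur(worldwide_annual_turnover_eur):
    """Ceiling for the top fine tier: EUR 35 million or 7% of turnover, whichever is higher."""
    return max(35_000_000, 7 * worldwide_annual_turnover_eur / 100)


# Hypothetical companies.
for turnover in (200_000_000, 2_000_000_000):
    print(f"turnover {turnover:,} -> ceiling {max_fine_top_tier_eur(turnover):,.0f}")
```

Below 500 million euros of turnover the fixed 35 million euro amount sets the ceiling. Above that, the percentage does.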

Closing

The organisations treating AI compliance as a 2026 problem will be ready for the 2027 pressure. The ones still treating it as a 2024 problem will be retrofitting while their insurance renewal expires and their supervisor asks for evidence they cannot produce.

"Wait and see" is the single highest-risk compliance strategy on the board today. The Brussels Effect did not wait, nor did the insurers, supervisors, or agents.

Neither should you.

Ready to see how Modulos handles AI compliance at enterprise scale? Request a demo and we will walk you through how the platform operationalises EU AI Act compliance, ISO/IEC 42001, NIST AI RMF, and other global frameworks on one shared control graph.

About Modulos. Modulos is an AI governance platform supporting 13+ frameworks in one shared control graph, and Europe's first ISO/IEC 42001-certified AI governance platform. Modulos is a signatory of the European Commission's AI Pact and a member of NIST's Center for AI Standards and Innovation (CAISI) consortium. Modulos CEO Kevin Schawinski trained EU financial supervisors at the Supervisory Digital Finance Academy in March 2026 on operational AI governance.
