NIST AI Risk Management Framework: the engineering spec for AI risk

If you read the NIST AI Risk Management Framework (NIST AI RMF) as a compliance document, you will find it thin.

If you read it as an engineering specification for managing AI risk across the full lifecycle, you will find it is the most rigorous public document of its kind in existence. Most teams read it the first way.

This is a problem. The consequence of treating NIST AI RMF as a US voluntary standard that "does not apply to us" is that European teams spend months inventing the risk taxonomy that NIST already published. Teams that adopt NIST first, then layer on the EU AI Act and ISO 42001, find the regulatory work substantially faster because the hard taxonomy work is already done. Teams that start from the Act often end up reinventing something like NIST in private, less well than the public version.

This post reframes the document: what it actually is, why it matters outside the US, how Govern / Map / Measure / Manage maps onto operational reality, and how it connects to the regulatory frameworks that do have legal force.

What NIST AI RMF is and is not

The framework was published in January 2023 by the US National Institute of Standards and Technology. It is voluntary. It was produced through a multi-year public consultation process. Companion documents include the Playbook (practical implementation guidance), a Generative AI Profile published in July 2024, and sector-specific profiles in development.

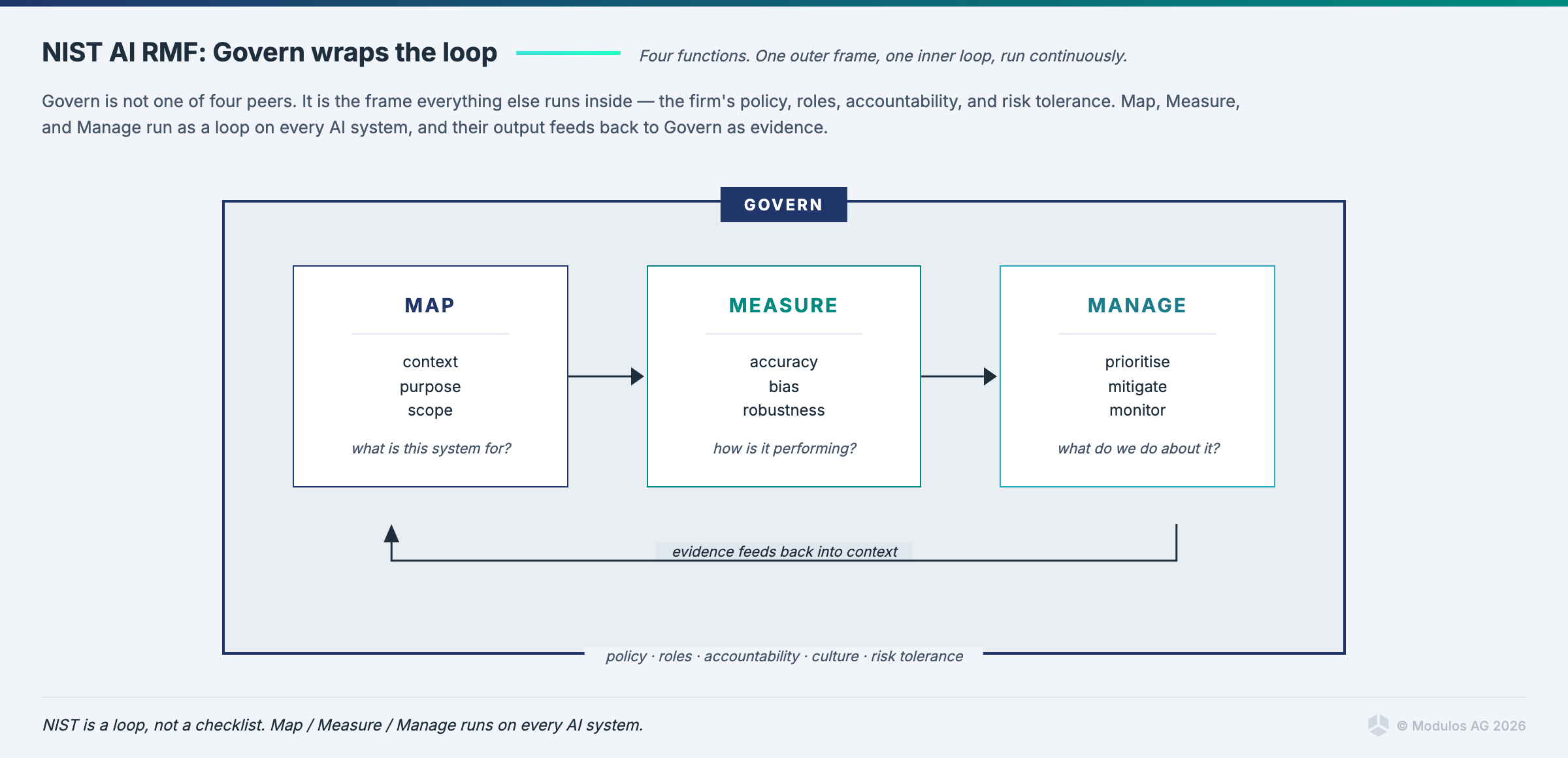

The structure has four core functions: Govern, Map, Measure, Manage. In version 1.0 these decompose into 19 categories and 72 subcategories, and the Playbook attaches suggested actions to each subcategory. This is the engineering depth that distinguishes NIST from most risk-management documents.

What NIST AI RMF is not: it is not a regulation, a certification, or a product safety framework, and it does not produce conformity assessments or certificates. It is a document that a practitioner uses to organise the work of managing AI risk. Read it as you would read a technical specification, where value lies in adoption and implementation rather than compliance checking.

Why it still matters outside the US

The Brussels Effect gets most of the attention in AI regulation discussions, because the EU AI Act is a large regulation shaping global practice. The reverse flow also exists, and NIST AI RMF is the clearest example.

The framework is being adopted by European teams, not because it is required, but because the taxonomy and the lifecycle model are reusable. The best engineering gets adopted regardless of where it was produced. Three specific reasons European and other non-US teams should take NIST seriously:

The taxonomy is complete. EU AI Act Article 9 says "A risk management system shall be established, implemented, documented and maintained in relation to high-risk AI systems." It does not tell you what categories of AI risk to consider, how to identify them, or how to relate them to each other. NIST does. The Map function in particular gives you a structured approach to context identification that Article 9 assumes but does not describe.

The lifecycle model matches engineering reality. The Govern / Map / Measure / Manage loop mirrors the way engineering teams actually plan, build, release, and monitor systems. Sprints, retros, incident reviews, capacity planning. The risk work fits into cadences that engineering organisations already have. Frameworks designed for compliance teams rather than engineering teams often fail to integrate.

It is widely adopted in the US financial sector. Many US banks, insurers, and investment firms operating AI at scale have aligned their programs to NIST. This creates a common vocabulary for cross-border AI governance conversations. Non-US teams that adopt NIST can talk to their US counterparts, regulators, and customers without translation.

Govern: establishing AI risk management policies

Govern is the organisational layer. What an AI risk management policy actually says, who owns it, what decisions it governs, and how it changes over time.

In practice, Govern produces a small number of concrete artifacts:

- An AI risk management policy document, typically 10 to 20 pages, owned by a designated accountable executive.

- A governance structure with named roles (AI governance committee, AI ethics function, model risk management function, data governance function) and defined authorities.

- A decision-rights matrix that says who approves AI system deployment, who approves model changes, who approves incident response, who approves policy changes.

- A set of organisational metrics that the AI governance function reports on (number of systems, risk profile, incident count, resolution time, audit findings).

- A review cadence: the policy is reviewed annually, the governance committee meets quarterly, the risk profile is updated monthly, and so on.

The failure mode in Govern is producing policy-statement text that never connects to operational decisions. "The organisation is committed to responsible AI" is text. "The AI governance committee approves the deployment of any model classified high-risk, with a quorum of three, and the decision is logged in the model registry within 48 hours" is governance.

If you cannot draw a line from the policy to a specific decision made in the last month, Govern is decorative.
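The difference between policy text and governance can be made concrete by encoding the example rule above as a check that runs against logged decisions. The following is a minimal sketch, not anything NIST prescribes: the `DeploymentDecision` record, its field names, and the `"high"` label are hypothetical, and only the quorum of three and the 48-hour logging window come from the example sentence.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

QUORUM = 3                               # from the example policy sentence
LOGGING_DEADLINE = timedelta(hours=48)   # from the example policy sentence


@dataclass
class DeploymentDecision:
    """Hypothetical record of one committee decision."""
    model_id: str
    risk_class: str                  # assumed labels such as "high" or "limited"
    approvers: set                   # committee members who approved
    decided_at: datetime
    logged_at: Optional[datetime]    # when the decision reached the model registry


def policy_violations(decision: DeploymentDecision) -> list:
    """Return the ways a decision breaks the example rule (empty list = compliant)."""
    if decision.risk_class != "high":
        return []                    # the example rule only covers high-risk models
    problems = []
    if len(decision.approvers) < QUORUM:
        problems.append(f"quorum not met: {len(decision.approvers)} of {QUORUM}")
    if decision.logged_at is None:
        problems.append("decision not logged in the model registry")
    elif decision.logged_at - decision.decided_at > LOGGING_DEADLINE:
        problems.append("decision logged after the 48-hour window")
    return problems
```

Running a check like this over last month's decisions is one way to draw the line from policy to practice: an empty result set across real decisions is evidence, and no decisions to check at all is a finding in itself.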

Map: context and risk identification

Map is the step most programs skip, and it is why their Measure and Manage steps measure and manage imaginary systems.

The Map function asks four questions:

What is the context of the AI system? What business problem does it solve, what population does it affect, what regulatory regime applies, what organisational objectives does it serve, what other systems does it interact with? The context determines what risks even matter.

What systems are in scope? This is where inventory lives, including the dark matter AI that most inventories miss: shadow usage, SaaS features that quietly added AI, agents operating under employee credentials, inference endpoints deployed without review. If your Map step only captures the registered models, Measure and Manage are working on a subset of reality.

What can go wrong? Risk identification. Not just accuracy failure. Also bias, security, privacy, misuse, reliability, transparency, societal impact, legal exposure, reputational damage, operational drift. A structured Map step uses a taxonomy (NIST provides one in the Playbook) and produces a risk register that covers categories the team might not have thought of.

What is already in place? Existing controls, existing monitoring, existing incident response. Map documents them so the Manage step does not duplicate them.

The artifacts produced by Map are an AI system inventory, a risk register, and a control baseline. All three are living documents. All three get updated as the estate changes.

If you are serious about shadow AI discovery, it lives in Map. No amount of Measure or Manage fixes the hole left by an incomplete Map.
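One way to make the inventory gap visible is to compare what discovery finds against what is registered. This is a minimal sketch under stated assumptions: the `AISystem` record, the `source` labels, and the idea of a single coverage ratio are illustrative choices, not part of the framework or the Playbook.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystem:
    """Hypothetical inventory entry."""
    system_id: str
    owner: str
    source: str   # how it was found, e.g. "registry", "network-scan", "saas-audit"


def unregistered_systems(registered, discovered):
    """Systems seen during discovery that are absent from the registered inventory."""
    known_ids = {s.system_id for s in registered}
    missing = [s for s in discovered if s.system_id not in known_ids]
    return sorted(missing, key=lambda s: s.system_id)


def map_coverage(registered, discovered):
    """Share of all known systems (registered or discovered) that are registered."""
    registered_ids = {s.system_id for s in registered}
    all_ids = registered_ids | {s.system_id for s in discovered}
    if not all_ids:
        return 1.0   # nothing known, nothing missing
    return len(registered_ids) / len(all_ids)


registered = [
    AISystem("credit-scoring-v3", "risk-team", "registry"),
    AISystem("support-chatbot", "cx-team", "registry"),
]
discovered = [
    AISystem("support-chatbot", "cx-team", "network-scan"),
    AISystem("crm-ai-summaries", "unknown", "saas-audit"),
    AISystem("sales-agent-bot", "unknown", "credential-audit"),
]
shadow = unregistered_systems(registered, discovered)
```

A coverage figure well below 1.0 says that Measure and Manage are currently working on a subset of the estate, which is the situation described above.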

Measure: quantitative and qualitative assessment

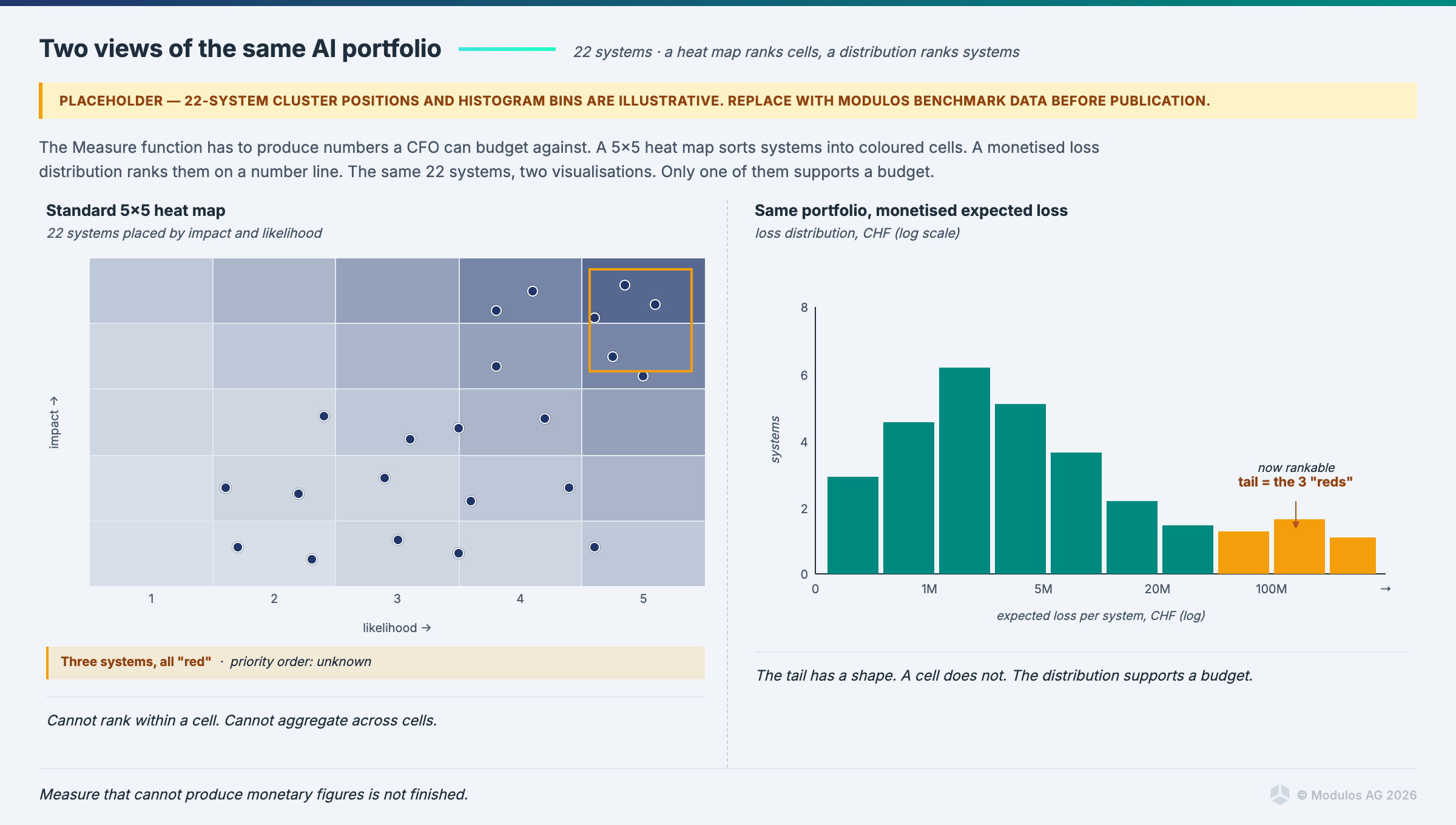

Measure is where the governance community has the loudest ongoing fight, and the fight is worth having.

The question is whether risk can be quantified in monetary terms, and if so, whether it should be. The productive answer is yes to both.

A Measure function that cannot put a euro figure on the downside of a given risk is not finished. Not because monetary quantification is always accurate (it is not), but because the discipline of asking "what would this actually cost" forces clarity. Traffic-light dashboards do not. Two red lights on two different risks tell you nothing about which to treat first. €4.2 million of expected annualised loss versus €180,000 tells you clearly.

NIST AI RMF does not mandate monetary quantification, but the Measure function is written to accommodate it. The Playbook includes methodologies for expected loss calculations, scenario analysis, and Monte Carlo approaches. Teams that adopt these methodologies find their Manage function can actually prioritise.
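To show what a Monte Carlo estimate of annualised loss can look like, here is a minimal sketch using only the Python standard library. It is one common modelling pattern, not a method taken from the Playbook: event counts are assumed to follow a Poisson process, and the loss per event is assumed to follow a triangular distribution over a best / expected / worst range. The function name and all parameter values are illustrative.

```python
import random
import statistics


def simulate_annual_loss(events_per_year, low, mode, high, n_runs=10_000, seed=0):
    """Simulate total loss per year and summarise the distribution.

    Assumptions (illustrative only): events arrive as a Poisson process with
    rate `events_per_year`; each event's loss is triangular(low, mode, high).
    """
    rng = random.Random(seed)        # fixed seed so the estimate is reproducible
    totals = []
    for _ in range(n_runs):
        loss = 0.0
        # Exponential gaps between events give a Poisson count within one year.
        t = rng.expovariate(events_per_year)
        while t < 1.0:
            loss += rng.triangular(low, high, mode)
            t += rng.expovariate(events_per_year)
        totals.append(loss)
    totals.sort()
    return {
        "expected": statistics.fmean(totals),
        "p05": totals[int(0.05 * n_runs)],
        "p95": totals[int(0.95 * n_runs)],
    }


# Hypothetical risk: about two incidents a year, each costing EUR 10k to 300k.
estimate = simulate_annual_loss(events_per_year=2.0, low=10_000, mode=50_000, high=300_000)
```

The spread between `p05` and `p95` is as useful as the expected value: it shows how much of the figure is estimate and how much is uncertainty, which is what a single traffic-light colour hides.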

The qualitative side of Measure is also essential: the risks that are hard to monetise (reputation, trust, mission impact) get assessed with structured qualitative methods, not dismissed. The NIST Playbook has solid guidance here too.

A good Measure output, for each risk in the register, includes:

- A quantified monetary impact range (best case, expected case, worst case).

- A qualitative severity assessment for dimensions that resist monetisation.

- A confidence interval on the quantification (this matters; overconfident estimates are worse than honest ones).

- The scenario under which the risk would materialise.

- The data or analysis supporting the estimate.
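The five elements above translate naturally into a record with a completeness check. This is a sketch of one possible shape, assuming hypothetical field names; how the interval is expressed (here, the probability level that the best-to-worst range is meant to cover) is a design choice, not something the framework dictates.

```python
from dataclasses import dataclass, field


@dataclass
class MeasureRecord:
    """Hypothetical Measure output for one risk in the register."""
    risk_id: str
    scenario: str                    # how the risk would materialise
    loss_best_eur: float
    loss_expected_eur: float
    loss_worst_eur: float
    interval_level: float            # e.g. 0.9 if best..worst is meant as a 90% interval
    qualitative: dict = field(default_factory=dict)   # dimension -> severity label
    evidence: list = field(default_factory=list)      # references to data or analysis

    def gaps(self):
        """List what is missing or inconsistent (empty list = complete)."""
        found = []
        if not (self.loss_best_eur <= self.loss_expected_eur <= self.loss_worst_eur):
            found.append("loss range is not ordered best <= expected <= worst")
        if not (0.0 < self.interval_level < 1.0):
            found.append("interval level must be strictly between 0 and 1")
        if not self.scenario.strip():
            found.append("no scenario described")
        if not self.evidence:
            found.append("no supporting data or analysis referenced")
        return found
```

Qualitative severity is deliberately optional in this sketch, since not every risk has a dimension that resists monetisation; a team that wants it mandatory can add one more check to `gaps`.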

Manage: prioritise, treat, monitor

Manage is the continuous loop. The treatment decisions, the monitoring, the evidence collection, the incident response.

Four components, each of which needs operational machinery:

Prioritisation. Given a Measure output, which risks get treated in what order. The input is monetary quantification. The output is a treatment plan with resource allocation and deadlines. The failure mode is treating risks in the order they are discovered rather than in the order that cost justifies.

Treatment. The actual controls that reduce risk. Technical controls (model retraining, input validation, rate limiting, human-in-the-loop checkpoints). Process controls (review cadences, approval gates, change management). Organisational controls (training, role clarifications, policy updates). Treatment is where engineering time gets spent.

Monitoring. Ongoing observation of the AI system's behaviour, the environment it operates in, and the effectiveness of the treatments applied. This is where Runtime Inspection-style continuous testing lives. Metrics, tests, thresholds, alerts, feedback into the Measure and Map steps.

Incident response. What happens when something goes wrong. The stop authority. The communication protocol. The forensic process. The retrospective. The update to the risk register.
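The prioritisation step can be sketched as a ranking by net benefit, so that treatment order follows cost rather than discovery order. The record shape, the `loss_reduction` fraction, and the example figures below are assumptions for illustration; real treatment effects are rarely this easy to estimate.

```python
from dataclasses import dataclass


@dataclass
class TreatmentOption:
    """Hypothetical candidate treatment for one risk."""
    risk_id: str
    expected_annual_loss_eur: float   # taken from the Measure output
    loss_reduction: float             # assumed fraction of loss removed, 0..1
    annual_cost_eur: float            # cost of operating the treatment

    @property
    def net_benefit_eur(self):
        return self.expected_annual_loss_eur * self.loss_reduction - self.annual_cost_eur


def treatment_plan(options):
    """Keep treatments that pay for themselves, largest net benefit first."""
    worthwhile = [o for o in options if o.net_benefit_eur > 0]
    return sorted(worthwhile, key=lambda o: o.net_benefit_eur, reverse=True)


options = [
    TreatmentOption("prompt-injection", 180_000, 0.8, 50_000),
    TreatmentOption("credit-model-bias", 4_200_000, 0.5, 300_000),
    TreatmentOption("minor-drift", 100_000, 0.2, 40_000),
]
plan = treatment_plan(options)
```

Treatments with negative net benefit drop out of the plan here; in practice those risks would be explicitly accepted or re-scoped rather than silently ignored, and that decision belongs in the risk register.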

The kill-switch lives in Manage. The stop authority, the incident response plan, the integration with the deployment pipeline, the audit trail on each stop action. CBUAE AI Guidance Note Article 6(f) makes this explicit for UAE financial sector deployments. The EU AI Act Article 14 implies it. NIST AI RMF Manage is where the machinery actually sits.
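A stop mechanism with a named stop authority and an audit trail on every attempt can be sketched in a few lines. This is an in-memory illustration with hypothetical names; a real deployment would persist the trail, authenticate the actor, and wire `allow_inference` into the serving path or deployment pipeline.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class KillSwitch:
    """Hypothetical stop control for one AI system."""
    system_id: str
    stop_authority: frozenset        # identities allowed to stop the system
    stopped: bool = False
    audit_trail: list = field(default_factory=list)

    def stop(self, actor, reason):
        """Attempt a stop. Every attempt is recorded, authorised or not."""
        allowed = actor in self.stop_authority
        self.audit_trail.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "system_id": self.system_id,
            "actor": actor,
            "reason": reason,
            "authorised": allowed,
        })
        if allowed:
            self.stopped = True
        return allowed

    def allow_inference(self):
        """Gate to call before serving a prediction."""
        return not self.stopped


switch = KillSwitch("support-chatbot", frozenset({"oncall-lead", "ai-risk-officer"}))
refused = switch.stop("intern", "testing the button")
accepted = switch.stop("oncall-lead", "harmful outputs reported by customers")
```

Logging refused attempts alongside successful ones is a deliberate choice in this sketch: an audit trail that only records successful stops cannot show that the stop authority was actually enforced.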

Evidence collection also lives in Manage. Each treatment produces evidence of operation. Each test produces a result. Each incident produces a report. Evidence feeds the control records that the governance frameworks (ISO 42001, EU AI Act) require.

How NIST AI RMF relates to the EU AI Act

Important upfront: NIST AI RMF is voluntary and does not substitute for EU AI Act compliance. The Act imposes product-level conformity, post-market monitoring, and record-keeping obligations that NIST was not written to discharge. What NIST does provide is a lifecycle methodology that sits usefully underneath Act obligations, particularly the risk-management obligation in Article 9. The two are complementary, not equivalent.

With that caveat, the indicative relationships between NIST functions and AI Act articles are as follows.

| EU AI Act obligation | Relevant NIST AI RMF functions |
|---|---|
| Article 9 (risk management system) | Govern, Map, Measure, Manage collectively inform the risk process |
| Article 10 (data and data governance) | Map 2.3, Measure 2.7, data practices in the Playbook |
| Article 11 (technical documentation) | Govern 1.6 (documentation), Map 3 (artifact tracking) |
| Article 13 (transparency) | Govern 1.4 (stakeholder transparency), Manage 1.1 |
| Article 14 (human oversight) | Manage 2 (response and recovery), Govern 3 (roles) |
| Article 15 (accuracy, robustness, cybersecurity) | Measure 2 (quantified testing), Manage 2 |
| Article 72 (post-market monitoring) | Manage 4 (continuous monitoring) |

Treat this as a map of where the two documents are useful to each other, not as a completeness claim. Specific Act obligations (conformity assessment procedures, technical documentation Annex IV content, logging under Article 12, post-market monitoring plans under Article 72) go beyond what NIST specifies and need to be implemented directly against the Act. A team that has built its risk function around NIST has a head start on Article 9 in particular, but still has to do the Act-specific work.
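For teams that keep such a crosswalk in code rather than in a slide, a function-level lookup is enough to answer "which Act obligations does this NIST function inform?". The sketch below covers only the risk-management, data, documentation, transparency, oversight, and accuracy rows of the table, reduced to function level; the dictionary and helper names are hypothetical, and the mapping is as indicative as the table itself.

```python
# Indicative, function-level crosswalk derived from the table above.
ACT_TO_NIST = {
    "Article 9": {"Govern", "Map", "Measure", "Manage"},
    "Article 10": {"Map", "Measure"},
    "Article 11": {"Govern", "Map"},
    "Article 13": {"Govern", "Manage"},
    "Article 14": {"Manage", "Govern"},
    "Article 15": {"Measure", "Manage"},
}


def articles_informed_by(nist_function):
    """Act articles that a given NIST function contributes to, in numeric order."""
    hits = [article for article, functions in ACT_TO_NIST.items()
            if nist_function in functions]
    return sorted(hits, key=lambda article: int(article.split()[1]))


def functions_for(article):
    """NIST functions relevant to one article (empty list if not in the crosswalk)."""
    return sorted(ACT_TO_NIST.get(article, set()))
```

An empty result from `functions_for` is the useful case: it flags an obligation that has to be implemented directly against the Act because the crosswalk offers nothing underneath it.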

The practical takeaway. Teams that implement NIST first tend to find the EU AI Act risk-management work easier to land in the organisation, because the lifecycle discipline is already in place. Teams that implement the Act first tend to produce a risk-management function structured around Articles rather than around the engineering lifecycle, which often gets refactored later. Neither order removes the obligation to read the Act on its own terms.

How to implement in practice

A short, opinionated sequence.

Start with Map, not Govern. This is counterintuitive. The framework presents Govern first. The practical order is Map first, because Govern without Map produces policies that govern imaginary systems. Inventory the AI systems, the agents, the shadow usage. Identify the risks. Then write the Govern layer with specific reference to what you discovered.

Quantify early. Do not wait to have perfect Measure methodologies before producing monetary estimates. Start with range estimates, document the assumptions, and refine. A team that has been producing monetised risk figures for six months is in a different conversation with leadership than a team that is still debating methodology.

Use the Playbook. The Playbook is the practitioner document. The framework itself is the architecture. Many teams bounce off the framework and miss the Playbook. The Playbook is where the specific guidance lives.

Integrate with engineering cadences. Map and Measure updates should align with release cycles. Manage monitoring should report into existing operational dashboards. Govern reviews should align with quarterly business reviews. The risk program loses credibility when it runs on its own schedule, separate from engineering reality.

Use a platform that knows the taxonomy. Modulos supports NIST AI RMF as a first-class framework, with the functions, categories, and subcategories mapped to controls and evidence. Risk quantification is built into the risk module, with organisation-level taxonomies and project-level threats expressed in money. Runtime Inspection provides the Manage-function monitoring layer, with tests wired to controls and results flowing back as evidence.

What NIST AI RMF does not cover

Scope honesty matters.

NIST AI RMF does not cover product safety, CE marking, or conformity assessments. Those are EU AI Act territory, with the harmonised standards (including prEN 18286) providing the bridge.

It does not cover certification. There is no "NIST AI RMF certified" status. Organisations adopt the framework and self-attest. Third-party assurance against NIST is possible but rare.

It does not cover sector-specific requirements in depth. The Generative AI Profile fills one gap. Other profiles are in development. For financial services, critical infrastructure, healthcare, and similar sectors, the framework provides the scaffolding, and sector-specific requirements need to be layered in separately.

It is not a regulation. Adoption is voluntary. Most serious US AI programs in regulated sectors adopt it anyway, because the alternative is inventing the taxonomy in-house.

FAQ

Is NIST AI RMF the same as NIST's Cybersecurity Framework (CSF)? Related but distinct. Both are lifecycle frameworks from NIST using similar function-based structures, but CSF covers cybersecurity risk generally while AI RMF is specific to AI systems. The two can be used together: AI systems have cybersecurity risks, and CSF covers the network and infrastructure layer while AI RMF covers the model, data, and lifecycle layer.

What is the NIST AI RMF Generative AI Profile? A companion document published in July 2024 that applies the AI RMF specifically to generative AI systems. It addresses risks that are specific to or elevated by generative AI: confabulation; CBRN information or capabilities; dangerous, violent, or hateful content; information integrity; environmental impacts; and others.

Is NIST AI RMF certifiable? No. There is no certification. Organisations self-attest to implementation. Some third-party firms offer assurance against NIST AI RMF, but this is not a certification in the ISO sense.

How often is NIST AI RMF updated? Major version updates happen infrequently (the core framework is on version 1.0). Companion profiles and playbook updates happen more frequently. Organisations should track updates but do not need to retool every time a profile publishes.

Does Modulos support NIST AI RMF? Yes. It is one of the 13+ frameworks supported in the shared control graph. Controls map across NIST AI RMF, EU AI Act, ISO 42001, and the other frameworks in scope. A control implemented once populates evidence records in all applicable frameworks simultaneously.

Closing

Teams that implement the NIST AI Risk Management Framework first tend to find the ISO 42001 and EU AI Act risk-management work easier to land, because the lifecycle discipline is already in place. That is not the same as saying the regulatory work is done; ISO certification and Act conformity have their own obligations that need to be discharged on their own terms. But starting with the engineering spec is the harder path at the start and the shorter one overall.

The regulators have noticed.

Ready to see how Modulos operationalises the NIST AI Risk Management Framework for your organisation? Request a demo and we will walk you through how Govern, Map, Measure and Manage become real controls and real evidence on the AI risk management platform.
