Global standards for AI governance: EU AI Act, ISO 42001 and NIST compared

US federal AI legislation is a moving target, so look at the states instead. The Colorado AI Act grants a rebuttable presumption of reasonable care to organisations aligned with ISO/IEC 42001 or the NIST AI Risk Management Framework. The Texas Responsible AI Governance Act, in force since January 2026, extends the same kind of affirmative defence. The Illinois frontier-model bill, SB 3444, treats compliance with EU safety and security requirements as a way to satisfy its own obligations.

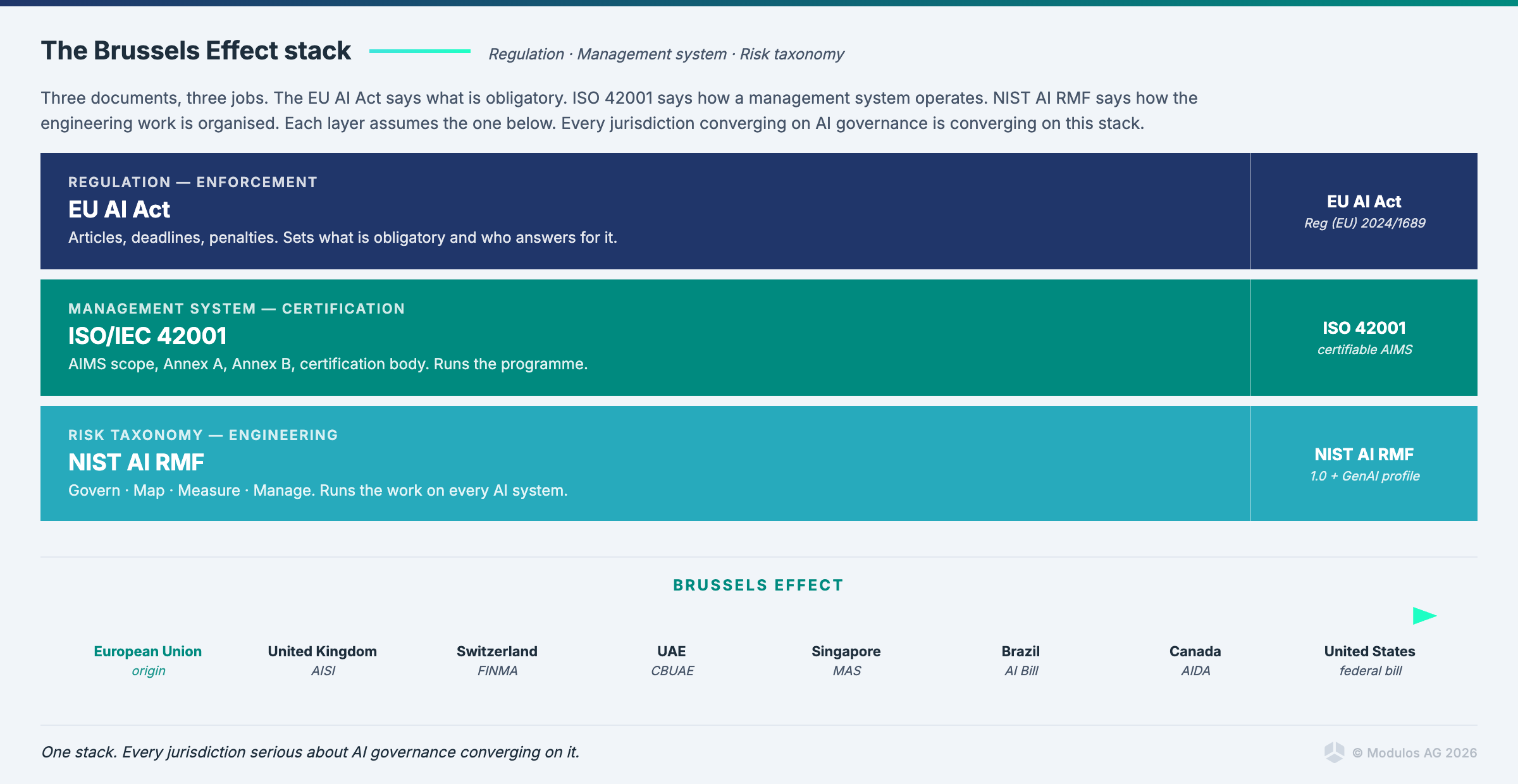

That is the Brussels Effect working in real time, and it is the reason I have stopped taking the question "should we adopt the EU AI Act, ISO 42001, or NIST AI RMF" seriously.

It is the wrong question.

The three are not alternatives. They are the global standards for AI governance that any serious AI operation has to satisfy in parallel, and each answers a different question: the regulation asks whether a system may be placed on the market, the management system standard asks whether the organisation can run AI reliably, and the risk framework asks whether the risks have been identified and treated. If you treat them as a menu you pick from, you will end up with a half-built compliance program that none of the three was designed to produce.

This post is the map. What each one actually is, how they stack, where they overlap, and why the Brussels Effect means the global standards for AI governance apply to you whether you sell into Europe or not.

The three global standards for AI governance at a glance

| | EU AI Act | ISO/IEC 42001 | NIST AI RMF |
|---|---|---|---|
| What it is | Regulation | Management system standard | Risk management framework |
| Legal status | Binding law in the EU | Certifiable standard (voluntary unless contractually required) | Voluntary US framework |
| What it governs | Placement and use of AI systems in the EU | Your AI management system (AIMS) | Your risk identification and treatment process |
| What good output looks like | Conformity assessment, CE mark, post-market monitoring | Certification body issues a certificate | A working risk register with monetary quantification |
| Enforcement | National competent authorities, EU AI Office | None (you choose a certification body) | None |
| Penalties | Up to 7% of global turnover | None | None |
| The core question it answers | Are you allowed to place this system on the market? | Does your organisation have a reliable, auditable way of running AI? | Have you identified and treated the risks in a defensible way? |

Each column represents a different question, and none of the three answers the other two.

Why "pick one" is the wrong frame

The consulting pitch you will hear often is "start with ISO 42001 because it is the most comprehensive." That is not wrong exactly, but it misses the structure of the problem.

Consider a concrete case. A Swiss bank deploys a credit scoring model for retail customers. What does the bank actually need?

Under the EU AI Act, the model is high-risk (Annex III). The bank must run a conformity assessment, register the system, put in place a quality management system, keep technical documentation, enable human oversight, and monitor it after placement on the market. That is the regulation doing its job. It defines what is allowed and what is not.

ISO 42001 certification of the bank's AI management system tells the bank's customers, its regulator, and its auditors that the organisation has the machinery to run AI responsibly at scale. It does not tell anyone the credit scoring model itself is compliant. The certification says "this bank has an AIMS." It does not say "this bank's credit scoring model conforms to Article 9." Those are different claims, and confusing them is the single most expensive category error in the space.

NIST AI RMF is the lifecycle framework the bank should use to identify what can go wrong with the model, measure how likely it is and how much it would cost, and design the treatment. The Govern / Map / Measure / Manage structure is the engineering framing that turns Article 9's requirement to "establish, implement, document and maintain a risk management system" into something a risk engineer can actually execute.

They represent three different jobs, three different deliverables, and three different reviewers, and pretending one covers the others is how compliance programs end up passing audits on paper and failing them in operation.

EU AI Act: regulation

The Act was published in the Official Journal in July 2024, entered into force on 1 August 2024, and enters application in staged waves.

The four gates (not four tiers, because the pyramid framing misleads) are these:

- Prohibited practices under Article 5. In force since February 2025. Social scoring, exploitation of vulnerabilities, real-time biometric identification in public spaces with narrow exceptions.

- General-purpose AI models under Chapter V. In force since August 2025 for new models, August 2027 for models placed on the market before August 2025. Transparency and systemic risk obligations.

- High-risk systems under Annex III and Article 6. Obligations include Article 9 (risk management), Article 10 (data governance), Article 11 (technical documentation), Article 13 (transparency), Article 14 (human oversight), Article 15 (accuracy, robustness, cybersecurity). Application date: August 2026 baseline, with the Digital Omnibus proposal moving parts of this to December 2027 in certain configurations. The trilogue is active. Deadlines are moving. The obligations are not.

- Transparency for all AI under Article 50. Emotion recognition, biometric categorisation, deepfakes, AI-generated content disclosure. Same timelines apply for deployer and provider-side disclosure.
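The staged dates above can be kept as a small lookup, so a program plan can ask which gates already apply on a given day. This is a minimal sketch, assuming the day-level dates of the Act as adopted (the 2nd of each month named above) and ignoring anything the Digital Omnibus may change. The names `GATES` and `gates_in_application` are illustrative and not part of any official tooling.

```python
from datetime import date

# Application dates per gate, taken from the list above. The day-level
# dates are an assumption based on the Act as adopted; the Digital
# Omnibus proposal may move some of them.
GATES = {
    "Article 5 prohibited practices": date(2025, 2, 2),
    "Chapter V GPAI (new models)": date(2025, 8, 2),
    "Annex III high-risk systems (baseline)": date(2026, 8, 2),
    "Chapter V GPAI (models on the market before August 2025)": date(2027, 8, 2),
}


def gates_in_application(on: date) -> list[str]:
    """Return the gates whose application date is on or before `on`."""
    return [name for name, start in GATES.items() if start <= on]


print(gates_in_application(date(2026, 1, 15)))
```

Keeping the dates in one table means a deadline shift is a one-line change rather than a hunt through project plans.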

The Act is enforced by national competent authorities and the EU AI Office at central level. Penalties are up to 35 million euros or 7% of global turnover for prohibited practices. This is not a guideline.

What the Act does not do: it does not tell you how to build your AI management system. It does not give you a risk management methodology. It says "you must have one" and "it must do these things." That is where the other two come in.

ISO 42001: management system standard

ISO/IEC 42001 is the first international management system standard specifically for AI. Published December 2023.

It is modelled on ISO 9001 (quality), ISO 27001 (information security), and the other ISO management system standards. Same architecture. Clauses 4 through 10 cover context, leadership, planning, support, operation, performance evaluation, and improvement. Annex A lists 38 controls for AI. Annex B gives implementation guidance for those controls. Annex C lists potential AI-related organisational objectives and risk sources, and Annex D covers use of the AIMS across domains and sectors.

What ISO 42001 certifies is an AI management system. Not a product. This is the line that keeps getting crossed in marketing copy and will keep costing companies audits. A certified AIMS demonstrates that the organisation has the governance machinery: policies, roles, lifecycle controls, change management, risk treatment, monitoring, continuous improvement. What it does not demonstrate is that a specific AI system conforms to specific regulatory requirements.

ISO 42001 matters right now for three buyer patterns. EU market access preparation (because a certified AIMS makes your conformity assessments for the AI Act substantially smoother). Enterprise sales, where large customers increasingly require certification as a procurement gate. Financial sector supervisory pressure, where supervisors are asking for it as a baseline without yet mandating it.

Modulos powered the first German ISO 42001 certification at Xayn (four weeks, via SGS). Four weeks is not typical. The typical timeline is three to six months. The reason Xayn made it in four was that the management system was already in place before the clock started.

NIST AI RMF: the risk management lifecycle

The NIST AI Risk Management Framework was published in January 2023. It is voluntary. It was produced by the US National Institute of Standards and Technology, with a companion Playbook, a Generative AI profile, and sector-specific profiles in progress.

The structure is Govern, Map, Measure, Manage. This is where NIST genuinely outperforms the other two for operational use.

Govern establishes the policies, accountability structures, and culture for AI risk management. This is the organisational layer.

Map identifies what AI systems exist, what they do, what context they operate in, and what can go wrong. This is the step most programs skip, which is why their Measure and Manage steps are measuring and managing imaginary systems.
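One way to make the Map step concrete is a plain inventory that records each system, its purpose, its context, and its known failure modes, and then flags the systems nobody has mapped yet. This is a sketch only: the `AISystem` fields and the `unmapped` helper are assumptions for illustration, not a structure prescribed by NIST, and the chatbot entry is a hypothetical second system.

```python
from dataclasses import dataclass, field


@dataclass
class AISystem:
    name: str
    purpose: str
    context: str  # where and for whom the system runs
    failure_modes: list[str] = field(default_factory=list)


def unmapped(inventory: list[AISystem]) -> list[str]:
    """Names of systems that have no recorded failure modes yet."""
    return [s.name for s in inventory if not s.failure_modes]


# Illustrative entries; the second system is hypothetical.
inventory = [
    AISystem("credit-scoring", "retail credit decisions", "retail bank",
             ["biased outcomes", "data drift"]),
    AISystem("support-chatbot", "customer support triage", "public website"),
]

print(unmapped(inventory))  # ['support-chatbot']
```

A system that shows up in `unmapped` is one where any later Measure or Manage work would be operating on guesses.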

Measure is quantitative and qualitative assessment of the risks identified in Map. Good Measure produces monetary impact estimates, not traffic lights. A Measure function that cannot express the downside in money is incomplete.
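A simple way to express the downside in money is expected annual loss: the estimated probability of an event in a year multiplied by the estimated cost if it happens. The sketch below assumes that single-point formula, which is one common starting point rather than anything NIST mandates, and every probability and euro figure in it is made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    annual_probability: float  # estimated chance of occurring in a year, 0..1
    impact_eur: float          # estimated loss in euros if it occurs

    @property
    def expected_annual_loss(self) -> float:
        """Probability-weighted loss per year, in euros."""
        return self.annual_probability * self.impact_eur


# Illustrative figures only; real estimates come out of the Map step.
risks = [
    Risk("model drift degrades accuracy", 0.30, 150_000),
    Risk("discriminatory credit decisions", 0.05, 2_000_000),
]

# Rank by money at stake rather than by a red/amber/green label.
ranked = sorted(risks, key=lambda r: r.expected_annual_loss, reverse=True)
for r in ranked:
    print(f"{r.name}: EUR {r.expected_annual_loss:,.0f}")
```

Even this crude version forces a ranking that a traffic-light grid cannot give: the rarer event ends up on top because its cost is larger.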

Manage is prioritisation, treatment, and monitoring. The continuous loop. Where the kill-switch design lives. Where evidence collection lives.

The reason serious US financial sector AI programs have converged on NIST AI RMF as a baseline is that the lifecycle framing survives contact with engineering reality in ways that regulatory obligations rarely do. The Govern / Map / Measure / Manage loop maps cleanly onto standard engineering review cadences. Sprints, post-mortems, incident response, capacity planning. The risk work fits into the way the technical organisation already thinks.

For European teams, the mistake is dismissing NIST as "American and therefore not our problem." The Act requires a risk management system. NIST AI RMF is the best-documented public blueprint for how to build one.

How the three stack in practice

Back to the credit scoring model.

The EU AI Act is the reason the bank is governing the model at all. Article 6 says it is high-risk. Article 9 says there must be a risk management system. Articles 10, 11, 13, 14, 15 say what that system must produce.

ISO 42001 certification demonstrates that the bank has the organisational capability to run that system. The AIMS is the machinery in which the specific controls for the credit scoring model operate. Certification is the external proof the machinery works.

NIST AI RMF is the lifecycle framework the risk engineer on the team uses to populate the risk register, quantify the downside, and design the treatment. The Map step produces the inventory of threats. The Measure step produces the euro figures. The Manage step produces the monitoring plan that runs in production.

Each does a different job. Each produces a different artifact. Together they produce a defensible compliance posture.

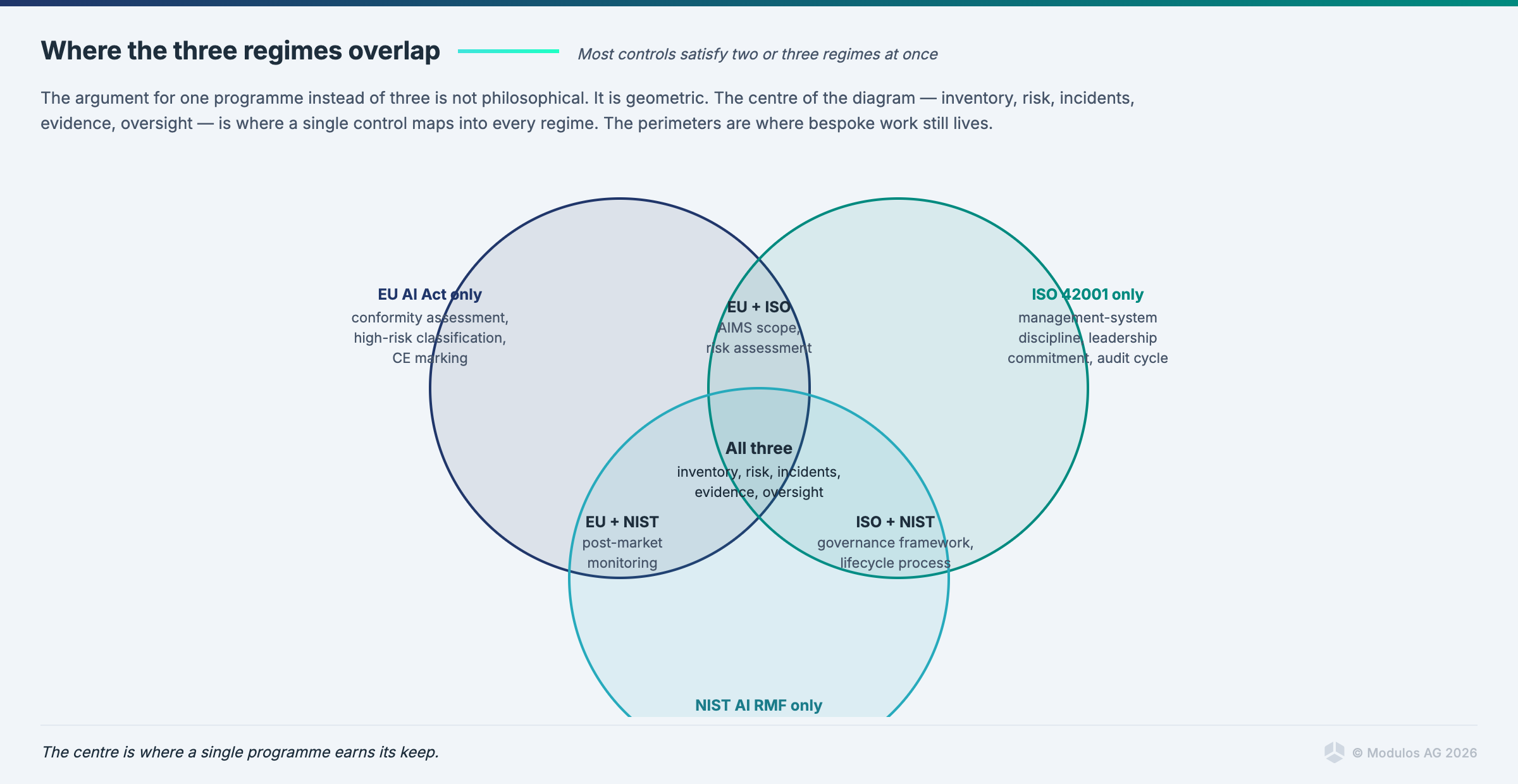

The control overlap that makes stacking efficient

This is where the Modulos argument has a specific, defensible form.

A well-designed control satisfies requirements from multiple frameworks at once. One data-governance control, written to ISO 27001 plus ISO 42001 Annex A plus EU AI Act Article 10 plus NIST AI RMF Map 2.1, produces one set of evidence. That evidence is reviewed once. It clears requirements in four frameworks simultaneously.
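The idea of one control clearing several requirements can be sketched as a mapping from control IDs to the requirements they satisfy. This is an illustration under stated assumptions: the control ID `data-governance-01`, the `CONTROLS` table, and the `requirements_cleared` helper are hypothetical, the requirement labels are plain strings rather than an authoritative crosswalk, and none of it describes how any particular platform stores its graph.

```python
# One control, many requirements. Labels are illustrative strings,
# not an authoritative crosswalk between the frameworks.
CONTROLS: dict[str, set[str]] = {
    "data-governance-01": {
        "ISO 27001",
        "ISO 42001 Annex A",
        "EU AI Act Article 10",
        "NIST AI RMF Map 2.1",
    },
}


def requirements_cleared(controls_with_evidence: set[str]) -> set[str]:
    """Requirements satisfied by controls whose evidence has been reviewed."""
    cleared: set[str] = set()
    for control_id in controls_with_evidence:
        # Unknown control IDs contribute nothing.
        cleared |= CONTROLS.get(control_id, set())
    return cleared


print(sorted(requirements_cleared({"data-governance-01"})))
```

The evidence is attached to the control once, and the requirement coverage is derived from the mapping instead of being tracked per framework.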

The numbers in practice:

Roughly 50% of ISO 42001 application-level controls overlap with EU AI Act articles. NIST AI RMF Map and Measure functions map cleanly onto EU AI Act Article 9. A credit scoring system governed for all three shows control reuse in the 40 to 60% range when the platform actually maintains a shared control graph rather than three parallel spreadsheets.
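For readers who want to check a reuse figure against their own control set, one possible definition is the share of control work saved by de-duplicating across frameworks: one minus the number of distinct controls divided by the total that parallel programs would maintain separately. The post does not spell out how its percentages are computed, so the formula, the `control_reuse` name, and the toy control IDs below are all assumptions.

```python
def control_reuse(per_framework: dict[str, set[str]]) -> float:
    """Share of control work saved by de-duplicating across frameworks.

    Compares the number of distinct controls with the total that
    separate, parallel programs would each have to maintain.
    """
    separate = sum(len(controls) for controls in per_framework.values())
    shared = len(set().union(*per_framework.values()))
    return 1 - shared / separate if separate else 0.0


# Toy control IDs, purely illustrative.
example = {
    "EU AI Act": {"c1", "c2", "c3", "c4"},
    "ISO 42001": {"c1", "c2", "c5", "c6"},
    "NIST AI RMF": {"c2", "c3", "c6", "c7"},
}

print(f"{control_reuse(example):.0%}")  # 42%
```

In the toy example, three programs of four controls each collapse to seven distinct controls, which is where the 42% comes from.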

Modulos supports 13+ frameworks in one shared graph today. EU AI Act, ISO 42001, NIST AI RMF, GDPR, NIS2, DORA, ISO 27001, ISO 27701, OWASP Top 10 for LLM, OWASP Top 10 for Agentic applications, UAE AI Ethics, MAS FEAT, Microsoft Supplier DPR. One control can contribute to several at once. The 40 to 60% total effort reduction, compared with running each program independently, is the quantified argument for stacking.

The unit economics are not the full story, though. The deeper win is structural. If your ISO 42001 AIMS is the scaffolding, and your EU AI Act Article 9 risk management sits inside it, and your NIST Map step produces the data that Article 9 needs, you have built one organisation doing one job. Not three organisations each optimising for their own auditor.

The Brussels Effect, specifically

The 2008 New Legislative Framework was a procedural reform the EU passed quietly. It defined how products get CE-marked and placed on the market. It shaped the Medical Device Regulation. The MDR shaped how the EU AI Act was drafted. The AI Act is now shaping AI regulation globally.

The phrase "Brussels Effect" was coined by Anu Bradford at Columbia Law. The mechanism is this: the EU is a large enough market that exporters do not maintain separate product lines for the EU and for the rest of the world. They build for the EU standard and sell it everywhere. Regulators in other jurisdictions then adopt the EU framing, because their domestic industry is already building to it.

The evidence for AI specifically:

- Colorado's SB24-205, the most comprehensive US state AI law, grants a rebuttable presumption of reasonable care to organisations aligned with ISO/IEC 42001 or the NIST AI Risk Management Framework, both of which interlock with the EU AI Act.

- Texas's Responsible AI Governance Act, in force since January 2026, provides an affirmative defence on the same terms.

- Illinois SB 3444, currently moving, treats compliance with EU safety and security requirements as a direct route to compliance with its own obligations.

- The Canadian AIDA framework mirrors the EU's high-risk system classification.

- Singapore's Model AI Governance Framework, updated in 2024, aligns with EU AI Act transparency requirements.

- Brazil's draft AI regulation follows the EU four-gates structure.

- The UAE CBUAE AI Guidance Note published February 2026 aligns closely with EU supervisory expectations for financial sector AI.

- MAS FEAT (Singapore) and Microsoft Supplier Data Protection Requirements both pull from the same EU-shaped vocabulary.

Companies that thought they were opting out of EU regulation by not selling into Europe are wrong about the landscape. The landscape came to them. The compliance you build for the EU AI Act now counts in a dozen other jurisdictions; this is the Bradford thesis playing out on schedule.

Which one to start with, and why it depends on market position

If you have EU market exposure, start with the EU AI Act. The deadlines force the issue. ISO 42001 and NIST AI RMF become the instruments you use to actually produce what the Act requires.

If your primary pressure is enterprise customers demanding certification, start with ISO 42001. The AIMS you build will contain the machinery the Act will later audit.

If your organisation is US-based, heavily engineering-cultured, or you just want a clean mental model before adding regulatory scaffolding, start with NIST AI RMF. The Govern / Map / Measure / Manage loop is the most practitioner-friendly entry point. Then map the resulting controls onto ISO 42001 and the EU AI Act.

All three paths converge. The organisations that have built the shared control graph early are already through the refactoring phase that the parallel-program organisations are only just starting.

FAQ

Do I have to comply with all three? The EU AI Act is law if you operate in the EU. ISO 42001 is voluntary unless a customer contract requires it. NIST AI RMF is voluntary everywhere. The practical answer is that any AI system operating at enterprise scale benefits from all three because each covers what the others do not.

Can ISO 42001 certification substitute for EU AI Act compliance? No. ISO 42001 certifies your management system, not your AI products. A certified AIMS makes Article 9 substantially easier to satisfy, but it does not constitute conformity assessment. Conformity assessments for high-risk systems are product-level and framework-specific.

Is NIST AI RMF only relevant for US companies? No. The framework is lifecycle and engineering-native. European and Asian teams that adopt the Govern / Map / Measure / Manage structure typically move faster on EU AI Act Article 9 than teams that started from the regulation itself.

How does the Digital Omnibus affect this? The proposal would move several EU AI Act deadlines, some of them to December 2027. It does not change the obligations themselves or the structure of the stack. If anything, the Omnibus increases the premium on having built the shared control graph early, because the organisations with infrastructure absorb deadline changes and the organisations with one-off programs refactor each time.

What is EN 18286 (currently prEN 18286)? A harmonised European standard being developed to bridge ISO/IEC 42001 and EU AI Act conformity assessments for quality management. It entered CEN public enquiry in October 2025, the enquiry closed in December 2025, and final publication is expected in 2026. Once the final version is cited in the Official Journal it drops the "pr" prefix and becomes EN 18286; a provider following it is presumed to meet the matching AI Act obligation. It is also not the only one. CEN-CENELEC JTC 21 has a broader pipeline of AI Act harmonised standards in progress covering risk management, data governance, transparency, human oversight, accuracy and robustness. Organisations that have built a shared control graph will be ready for the whole pipeline. Those that have not will have an integration project per standard.

Closing

The standards are converging faster than most organisations realise. EN 18286 (currently in prEN draft) is the first bridge between ISO 42001 and AI Act conformity assessments, with more AI Act harmonised standards from CEN-CENELEC JTC 21 following behind it. The Brussels Effect is driving the same patterns through a dozen other jurisdictions in parallel.

The companies that have built the shared control graph are ready for whatever the trilogue produces. The companies that have built parallel compliance programs will have to refactor, because the Brussels Effect did not wait for them to catch up.

Boring EU concepts have global impact. The question is whether you are building infrastructure that absorbs that, or running three spreadsheets and hoping the auditor is lenient.

Ready to see how Modulos handles the global standards for AI governance in one place? Request a demo and we will walk you through how the platform operationalises EU AI Act compliance, ISO/IEC 42001, and NIST AI RMF on one shared control graph, against the August 2026 deadline.

About the author. Kevin Schawinski is the CEO of Modulos, the AI governance platform that supports 13+ frameworks in one shared control graph. He is a former astrophysicist who held appointments at Oxford, Yale, NASA, and ETH Zurich. He trained EU financial supervisors at the Digital Finance Academy in March 2026 on operational AI governance. Modulos is a signatory of the European Commission's AI Pact and a member of NIST's Center for AI Standards and Innovation (CAISI) consortium.
