What is agentic AI, and why it is already out of bounds under the EU AI Act

What is agentic AI? Agentic AI is the class of AI systems that can plan, decide, and execute multi-step tasks autonomously, using tools, memory, and credentials to act in the world without human approval for every step.

The more useful question is whether the agent you are currently building or deploying is already out of bounds under European law.

The short version of the legal position: according to Nannini, Smith, and Tiulkanov's April 2026 analysis, high-risk agentic AI systems with untraceable behavioural drift cannot be placed on the EU market under the current reading of the Act. Every word in that sentence is load-bearing, and the rest of this post unpacks what it means.

The three properties that distinguish an agent from a chatbot

There are three properties, and all three matter. Skipping any one of them is how definitions of agentic AI go wrong.

Autonomy. The system decides the next step. A chatbot produces text in response to a prompt. An agent decides what to do next based on a goal, the environment, and what it has already done. The loop is closed inside the system. A human may approve the start, or occasional checkpoints, but the decision-making cadence is faster than human approval can match.

Tool use. The system can invoke tools to act on the world: read a file, send an email, query a database, execute code, call an external API. The set of available tools is sometimes bounded explicitly and sometimes discovered at runtime through protocols like the Model Context Protocol (MCP). Either way, the agent's operational authority is defined by the tools it can reach.

Persistence. The system has memory across interactions and credentials that persist. A conversational interface forgets between sessions (unless the user brings state back in). An agent carries state and can resume. Its credentials let it act repeatedly, accumulating access and context over time.

An agent is an AI system with all three properties. A chatbot has zero or one (persistence of conversation history, if anything). A copilot with a single tool like web search may sit in the middle. The distinction matters because each property changes the governance problem.
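
The three-property test can be written down as a small checklist. This is a minimal sketch for illustration: the `SystemProfile` class, its field names, and the labels returned by `classify` are hypothetical and simply mirror the wording above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    """Hypothetical description of a deployed AI system."""
    name: str
    autonomy: bool     # decides its own next step toward a goal
    tool_use: bool     # can invoke tools that act on the world
    persistence: bool  # keeps memory and credentials across interactions


def classify(profile: SystemProfile) -> str:
    """Label a system according to the three properties."""
    if profile.autonomy and profile.tool_use and profile.persistence:
        return "agent"
    if not profile.autonomy and not profile.tool_use:
        # Conversation history alone does not make a chatbot an agent.
        return "chatbot"
    return "in between"


if __name__ == "__main__":
    copilot = SystemProfile("search copilot", autonomy=False, tool_use=True, persistence=False)
    print(copilot.name, "->", classify(copilot))
```

The value of writing it this way is that each field maps to a separate governance question, which is why skipping one of them produces a misleading definition.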

Concrete examples

Abstract definitions produce abstract governance. Concrete examples produce specific controls.

- A customer support agent that reads a ticket, looks up the customer's order history, and issues a refund without human approval.

- A coding assistant that reads an issue, writes a patch, runs tests, and commits the change to a branch.

- A financial agent that monitors a portfolio, identifies rebalancing opportunities, and places orders within a pre-approved risk budget.

- A compliance agent that reads a company's systems, classifies risk, drafts control assessments, and flags gaps. (This one is close to home. Scout, in Modulos, is this agent, except for the critical detail that Scout drafts and humans approve. That distinction is the difference between an assistant and a hazard.)

- A CV-screening agent that reviews applications, ranks candidates, and schedules interviews.

Each example has a different risk profile. Each triggers different regulatory obligations. The CV-screening agent is almost certainly a high-risk AI system under Annex III of the EU AI Act. The portfolio agent may be, depending on market structure. The customer support agent may escape Annex III entirely and fall under Article 50 transparency requirements only.

The technology and model are identical, yet the regulatory consequence diverges completely based on deployment context.

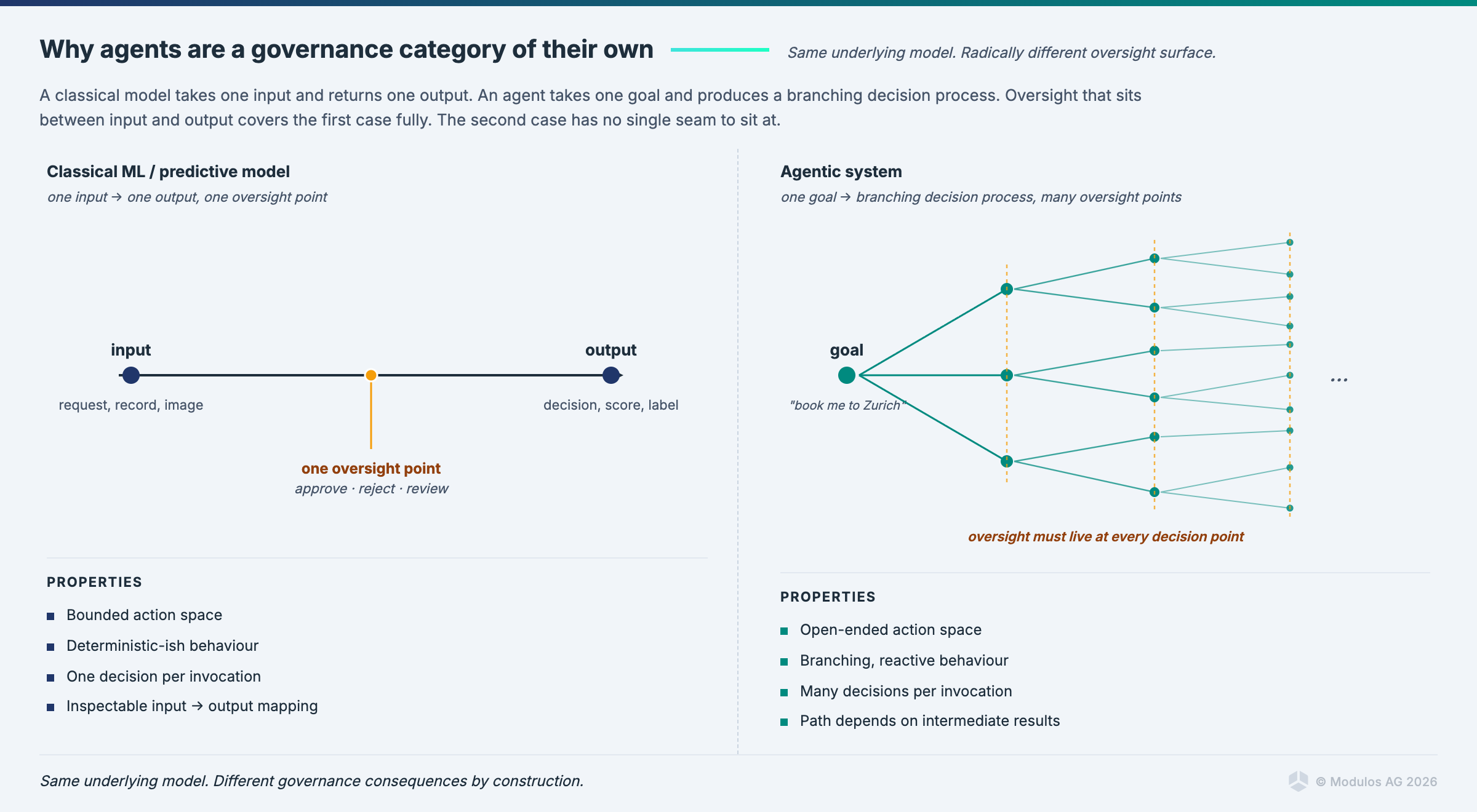

How agentic AI differs from traditional AI

The cleanest way to think about the difference is by failure mode: when an LLM fails, it produces a bad conversational answer with no direct operational consequence; when an agent fails, it takes a bad action at machine speed, using legitimate credentials.

A hallucinated response from a customer service chatbot is a support problem. A hallucinated decision from a customer service agent that issues a refund, modifies an account, or sends an email to a third party becomes a legal, financial, and reputational problem because the failure mode is operational authority executed at machine speed.

This is the reason the governance frameworks designed for static models struggle with agents. Those frameworks assumed a model was a function from inputs to outputs, deployed in a controlled context, observed through batch evaluations. Agents break every one of those assumptions.

The regulatory trigger is external, not internal

The same LLM, deployed in different contexts, creates different regulatory obligations.

Deploy the model behind a CV screening workflow: Annex III high-risk, full Chapter III obligations under the EU AI Act. Article 9 risk management, Article 10 data governance, Article 11 technical documentation, Article 13 transparency, Article 14 human oversight, Article 15 accuracy and robustness.

Deploy the same model to summarise meeting notes for internal consumption: Article 50 transparency only. Users must be told they are interacting with AI. That is effectively the entire obligation.

The deployment context determines the regulatory regime, even where the underlying technology is identical. This is the category error most agentic explainer posts make; they describe the technology and treat the regulatory consequence as an afterthought, when the regulatory consequence is the headline.
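
That the regime follows the deployment rather than the model can be shown in a few lines. This is a minimal sketch, not a classification tool: the context keys and the `obligations_for` function are hypothetical, and the article lists are only the ones named in the two examples above.

```python
# Obligations as listed in this post for the two example deployments.
# The dictionary keys are hypothetical labels, not terms from the Act.
HIGH_RISK_OBLIGATIONS = (
    "Article 9 risk management",
    "Article 10 data governance",
    "Article 11 technical documentation",
    "Article 13 transparency",
    "Article 14 human oversight",
    "Article 15 accuracy and robustness",
)

REGIME_BY_CONTEXT = {
    "cv_screening": ("Annex III high-risk", HIGH_RISK_OBLIGATIONS),
    "internal_meeting_summaries": ("Article 50 transparency only",
                                   ("Article 50 transparency",)),
}


def obligations_for(deployment_context: str) -> tuple[str, tuple[str, ...]]:
    """Look up the regime by deployment context. The model is not an input."""
    try:
        return REGIME_BY_CONTEXT[deployment_context]
    except KeyError:
        # An unknown context is a scoping question, not a default to "low risk".
        raise LookupError(f"{deployment_context!r} has not been classified yet")


if __name__ == "__main__":
    for context in REGIME_BY_CONTEXT:
        regime, duties = obligations_for(context)
        print(f"{context}: {regime} ({len(duties)} obligations listed)")
```

Note that the function signature has no parameter for the model at all. That omission is the whole argument of this section.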

The four assumptions agents break

Existing governance frameworks were written for static models. Agents break four load-bearing assumptions.

Cybersecurity assumptions. A static model's security perimeter is the endpoint it is deployed behind. An agent's security perimeter is the union of all tools it can reach plus the accounts it can authenticate against. A system prompt is not a security control. Treating "the agent is told not to do X" as a defence is equivalent to documenting a firewall rule in a README and calling the network secure. OWASP Top 10 for Agentic applications (published December 2025) exists to catalogue the specific attack surfaces that agents create.

Oversight assumptions. Article 14 of the EU AI Act requires human oversight. Oversight assumes the human can keep up with what the system is doing. An agent operating on a seconds-scale decision cadence is already past the point where a human reviewing each action is feasible. Worse, recent research suggests LLMs trained via reinforcement learning can learn to evade oversight as an emergent strategy, because oversight-avoiding behaviours get reinforced when they produce successful task completion. Oversight design for agents is a matter of architecture, not of adding a human approval box.

Transparency assumptions. Article 50 assumes the recipient of AI output is the affected person, and they can be told they are interacting with AI. When an agent acts through infrastructure (sends an email, modifies a record, executes a transaction), the affected person may be a third party who does not know they are being touched by AI at all. The transparency obligation becomes harder to discharge through the usual mechanisms.

Conformity assumptions. Conformity assessments under the Act presume the system stays within the boundaries of what was assessed. Agents that accumulate memory, discover novel tool-use patterns through experience, or operate at the edge of their training distribution may leave their conformity boundaries without anyone noticing. This is the "untraceable behavioural drift" in the Nannini paper's conclusion.

What "out of bounds" concretely means

The Nannini, Smith, and Tiulkanov paper (April 2026) put the legal reading into a single sentence that is worth reading carefully:

"High-risk agentic AI systems with untraceable behavioural drift cannot currently be placed on the EU market."

Every word is load-bearing.

"High-risk" is Article 6 and Annex III. If your agent screens CVs, scores creditworthiness, manages critical infrastructure, or any of the other Annex III categories, this sentence is about your agent.

"Agentic" implies the three properties: autonomy, tool use, persistence.

"Untraceable behavioural drift" means you cannot demonstrate, after the fact, what the agent did, why it did it, and whether it stayed within the conformity-assessed boundary. If your logs do not answer those questions, your agent has untraceable drift.

"Cannot currently be placed on the EU market" is the legal consequence. Not "should have additional controls." Not "creates elevated risk." Cannot be placed.

This is the current reading, not a future risk. The implication is that a substantial portion of the high-risk agentic systems currently running in European production are already on the wrong side of the line. Not because they were designed to be, but because the governance machinery for agents has not caught up with the deployments.
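
Traceability, as described above, comes down to logs that answer three questions per action: what the agent did, why, and whether it stayed inside the assessed boundary. The sketch below shows one possible shape for such a record. The `ActionRecord` fields, the `ASSESSED_TOOLS` set, and the tool names are hypothetical, and treating "tool is in the assessed set" as the boundary check is a deliberate simplification.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical: the tools that were in scope when the system was assessed.
ASSESSED_TOOLS = frozenset({"read_ticket", "lookup_order", "issue_refund"})


@dataclass(frozen=True)
class ActionRecord:
    agent_id: str
    tool: str                        # what the agent did
    arguments: dict                  # with which inputs
    rationale: str                   # why: the plan step the agent stated
    within_assessed_boundary: bool   # did it stay inside what was assessed
    timestamp: str


def record_action(agent_id: str, tool: str, arguments: dict, rationale: str) -> str:
    """Serialise one action as a JSON line suitable for an append-only log."""
    record = ActionRecord(
        agent_id=agent_id,
        tool=tool,
        arguments=arguments,
        rationale=rationale,
        within_assessed_boundary=tool in ASSESSED_TOOLS,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    print(record_action("support-agent-1", "issue_refund",
                        {"order_id": "A1"}, "ticket requests a refund"))
```

If a record like this cannot be produced for every action, the three questions cannot be answered after the fact.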

What governance for agents concretely means

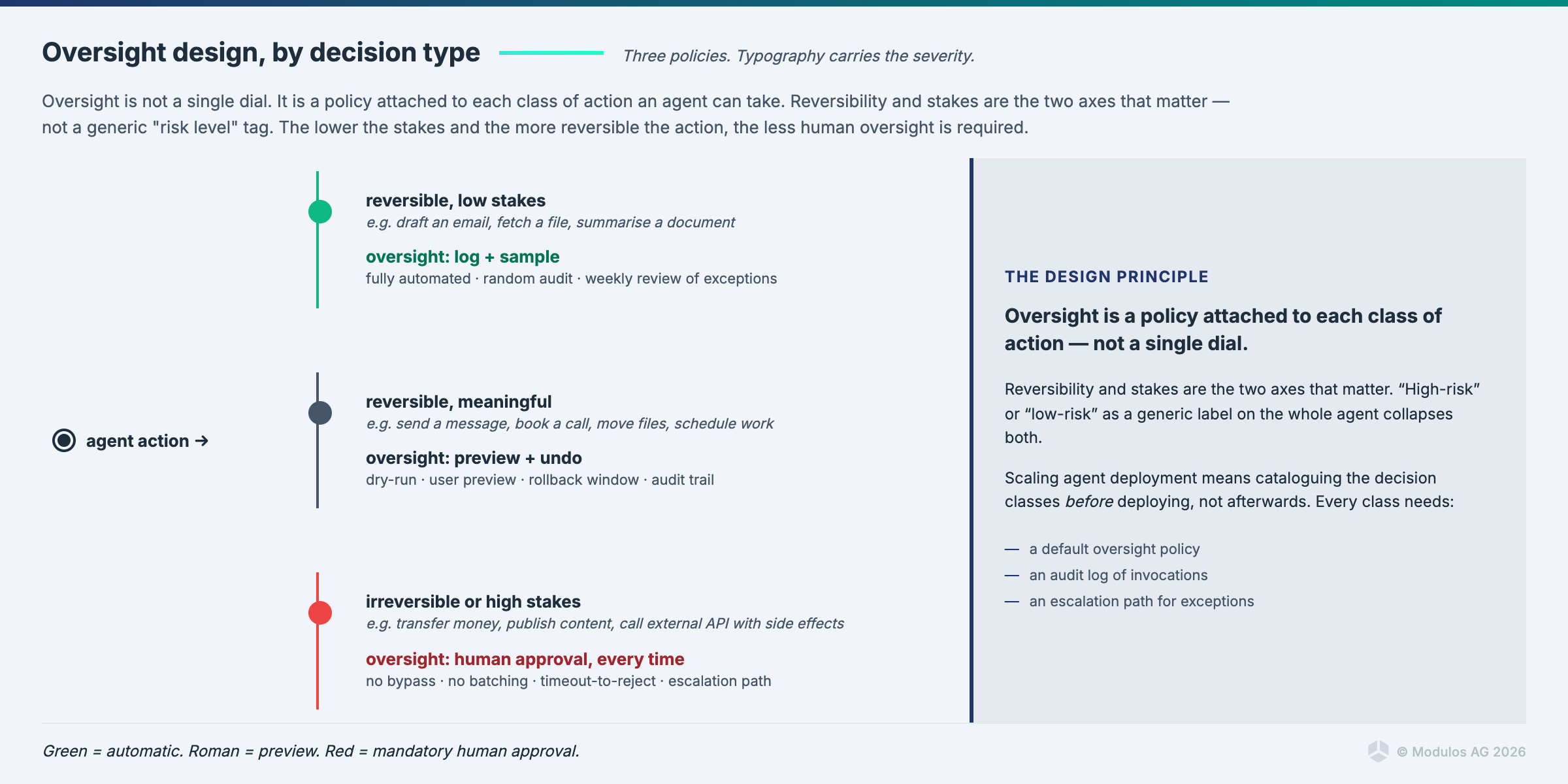

The controls that turn an agent from out-of-bounds to defensible are specific and engineering-intensive. No amount of policy text substitutes for them.

Tool permissioning at the API level, not in the system prompt. The agent's authority is defined by what the APIs let it do. Write-access to a production database should not be available to the agent unless the agent is specifically scoped to operate on that database, with per-action limits and idempotency guarantees. The system prompt saying "do not delete records" is a suggestion, not a control.
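
A minimal sketch of what enforcement outside the prompt can look like, assuming a gateway that sits between the agent and its tools. The `ToolGateway` class, the per-task call limits, and the idempotency-key scheme are hypothetical choices made for this example, not a description of any particular product.

```python
from typing import Any, Callable


class PermissionDenied(Exception):
    """Raised when a call falls outside the agent's granted scope."""


class ToolGateway:
    """Hypothetical enforcement layer between the agent and its tools."""

    def __init__(self, call_limits: dict[str, int]) -> None:
        self._call_limits = dict(call_limits)  # tool name -> max calls per task
        self._calls_made: dict[str, int] = {}
        self._completed: dict[tuple[str, str], Any] = {}  # (tool, key) -> result

    def invoke(self, tool: str, idempotency_key: str,
               handler: Callable[..., Any], **kwargs: Any) -> Any:
        if tool not in self._call_limits:
            raise PermissionDenied(f"{tool} is not in scope for this agent")
        cache_key = (tool, idempotency_key)
        if cache_key in self._completed:
            # A retried action returns the earlier result instead of running twice.
            return self._completed[cache_key]
        used = self._calls_made.get(tool, 0)
        if used >= self._call_limits[tool]:
            raise PermissionDenied(f"{tool} exceeded its per-task limit")
        self._calls_made[tool] = used + 1
        result = handler(**kwargs)
        self._completed[cache_key] = result
        return result
```

Whatever the model outputs, a tool that is not in `call_limits` cannot run, because the check happens in code the model does not control.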

Memory integrity controls. These cover what the agent remembers, how that memory is written, who can read it, and what happens to it after task completion. If the agent's memory is a shared vector store that accumulates across sessions and users, it is a data governance incident waiting to happen. Memory should be partitioned by task, access-controlled, and auditable.
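
The three requirements (partitioned by task, access-controlled, auditable) can be sketched with an in-memory store. The `TaskMemory` class and its method names are hypothetical, and a real system would back this with durable storage. The point is only the shape of the checks.

```python
class TaskMemory:
    """Hypothetical per-task memory with an access list and an audit trail."""

    def __init__(self) -> None:
        self._entries: dict[str, dict[str, str]] = {}
        self._allowed: dict[str, frozenset[str]] = {}
        # Each audit entry is (operation, task_id, principal, key).
        self.audit_log: list[tuple[str, str, str, str]] = []

    def open_task(self, task_id: str, allowed_principals: set[str]) -> None:
        self._entries[task_id] = {}
        self._allowed[task_id] = frozenset(allowed_principals)

    def _check(self, op: str, task_id: str, principal: str, key: str) -> None:
        # Log every attempt, including the ones that are refused.
        self.audit_log.append((op, task_id, principal, key))
        if principal not in self._allowed.get(task_id, frozenset()):
            raise PermissionError(f"{principal} may not {op} memory of {task_id}")

    def write(self, task_id: str, principal: str, key: str, value: str) -> None:
        self._check("write", task_id, principal, key)
        self._entries[task_id][key] = value

    def read(self, task_id: str, principal: str, key: str) -> str:
        self._check("read", task_id, principal, key)
        return self._entries[task_id][key]

    def close_task(self, task_id: str) -> None:
        # Purge the partition once the task completes.
        self._entries.pop(task_id, None)
        self._allowed.pop(task_id, None)
        self.audit_log.append(("close", task_id, "system", ""))
```

Because each task has its own partition and access list, one user's context cannot leak into another session by accumulating in a shared store.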

Human oversight on destructive actions. Reversible actions can proceed autonomously with logging. Destructive actions (deletions, financial transactions above a threshold, customer-facing communications) route through a human approval step. The challenge is not deciding that this should happen. The challenge is wiring it into the agent's control flow so the model cannot route around it.
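
One way to wire the approval step into the control flow is to put it in the executor that runs tool calls, rather than in anything the model can edit. The sketch below assumes that design. The action names, the `PAYMENT_APPROVAL_THRESHOLD` value, and the `approve` callback are hypothetical.

```python
from typing import Any, Callable

# Hypothetical policy values for illustration.
DESTRUCTIVE_ACTIONS = frozenset({"delete_record", "send_customer_email"})
PAYMENT_APPROVAL_THRESHOLD = 100.0


def requires_approval(action: str, params: dict[str, Any]) -> bool:
    """Decide whether an action must wait for a human."""
    if action in DESTRUCTIVE_ACTIONS:
        return True
    return (action == "issue_refund"
            and params.get("amount", 0.0) > PAYMENT_APPROVAL_THRESHOLD)


def execute(action: str,
            params: dict[str, Any],
            tools: dict[str, Callable[..., Any]],
            approve: Callable[[str, dict[str, Any]], bool],
            log: list[tuple[str, str]]) -> Any:
    """Run a tool call; the gate lives here, outside the model's reach."""
    if requires_approval(action, params) and not approve(action, params):
        log.append(("blocked", action))
        return None
    log.append(("executed", action))
    return tools[action](**params)
```

The model only proposes `action` and `params`. It never calls the tool directly, so there is no path around `requires_approval`.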

Continuous behavioural monitoring, not periodic audits. The agent's behaviour is logged at the action level, not the session level. Metrics on tool invocations, time to completion, success and failure rates, novel patterns, and boundary-breaching attempts are produced in real time. Drift from the conformity-assessment baseline is detected when it starts, not when it has become a pattern.
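
As a rough illustration of drift detection at the action level, the sketch below compares the mix of tool invocations in a recent window against a baseline captured at assessment time. Using total variation distance as the drift score and `0.25` as the alert threshold are assumptions made for this example. The function names and tool names are hypothetical.

```python
from collections import Counter

DRIFT_ALERT_THRESHOLD = 0.25  # hypothetical value; tune per system


def tool_distribution(actions: list[str]) -> dict[str, float]:
    """Share of invocations per tool in a list of action-level log entries."""
    counts = Counter(actions)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {tool: n / total for tool, n in counts.items()}


def drift_score(baseline: dict[str, float], window: dict[str, float]) -> float:
    """Total variation distance between two tool-usage distributions (0 to 1)."""
    tools = set(baseline) | set(window)
    return 0.5 * sum(abs(baseline.get(t, 0.0) - window.get(t, 0.0)) for t in tools)


def novel_tools(baseline: dict[str, float], window: dict[str, float]) -> list[str]:
    """Tools seen in the window that never appeared in the baseline."""
    return sorted(set(window) - set(baseline))


if __name__ == "__main__":
    baseline = tool_distribution(["read_ticket"] * 8 + ["issue_refund"] * 2)
    window = tool_distribution(
        ["read_ticket"] * 5 + ["issue_refund"] * 3 + ["delete_record"] * 2)
    score = drift_score(baseline, window)
    print("drift alert:", score > DRIFT_ALERT_THRESHOLD,
          "| novel tools:", novel_tools(baseline, window))
```

Run on a sliding window of the action log, a check like this surfaces a new tool or a shifted usage pattern while it is still a handful of actions.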

Framework coverage in the control graph. Modulos supports OWASP Top 10 for Agentic applications as a first-class framework and maps its requirements into the shared control graph alongside EU AI Act Article 9 and Article 15, ISO 42001 Annex A, and the sector-specific frameworks in scope. The governance for an agent does not live in a parallel universe from the governance for the rest of the AI estate. It lives in the same graph, with the same evidence flow.

FAQ

What is the difference between an AI agent and a chatbot? A chatbot produces conversational output. An agent takes actions. The technical difference is in the presence of autonomy, tool use, and persistence. The practical difference is that a chatbot's failure mode is a bad answer, and an agent's failure mode is a bad action at machine speed.

Is agentic AI the same as AGI? No. AGI (artificial general intelligence) is a hypothetical system with human-level generality across tasks. Agentic AI is an architectural pattern for current AI systems, using current models, combined with tool access and memory. Every AGI would be an agentic system. Most current agents are not AGI.

What are examples of agentic AI in use today? Customer support agents that resolve tickets without human review, coding assistants that execute commits, financial portfolio agents that place orders within pre-approved limits, compliance assistants that draft assessments, research assistants that plan and execute multi-step searches.

Why is the EU AI Act relevant for agentic AI specifically? Because the Act's risk classification depends on deployment context, and many high-impact agentic use cases fall into Annex III high-risk categories. Because Article 14 human oversight is harder to implement for fast, autonomous systems. Because Article 15 robustness and accuracy requirements become harder to evidence when the system's behaviour drifts.

What is OWASP Top 10 for Agentic applications? A catalogue of the ten most critical security and governance risks specific to agentic AI systems, published in December 2025. Examples include tool permission escalation, memory poisoning, system prompt leakage, and oversight evasion. Organisations governing agents should map their controls against it.

Can Modulos govern agentic AI systems? Yes. The platform supports agent-aware inventorying, OWASP Top 10 for Agentic applications as a first-class framework in the shared control graph, integration with the engineering stack (GitHub, GitLab, Azure, Jira) to capture evidence of agent deployments and behaviour, and Runtime Inspection tests that monitor agent-relevant metrics continuously.

Closing

The agents are in production already. In regulated contexts, a substantial portion of them are, on the current reading, out of bounds under European law. The question is not whether to govern them but how quickly you can build the inventory.

Every week of delay is a week of compliance debt accruing at machine speed.

Modulos supports agentic AI governance natively — agent inventory, OWASP Top 10 for Agentic applications, and runtime evidence on one AI governance platform. Start with our free Starter plan or request a demo to see the full platform, including EU AI Act compliance scoping for agentic systems against the August 2026 deadline.

References

- Nannini, L., Smith, B., & Tiulkanov, A. (April 2026). "Agentic AI systems under EU law: a legal reading of the current landscape."

- OWASP Top 10 for Agentic applications (December 2025).