Stop Guessing at AI Risk

Quantify AI risk exposure in monetary terms. AI agents assist with risk identification and scoring, all connected to mitigating controls.

Request a Demo

Why Traditional AI Risk Assessment Falls Short

Qualitative methods can't answer the questions leadership is asking.

Qualitative and stale assessments

"High/Medium/Low" doesn't cut it when the board asks what your actual exposure is. And by the time the assessment is done, it's already outdated.

No portfolio view

Risk is assessed per project, but nobody sees the aggregate exposure across all AI systems.

Disconnected from controls

Risks are identified, but there is no clear link to what is actually mitigating them.

Audit trail gaps

Risk decisions are made in meetings, with no documentation of rationale or methodology.

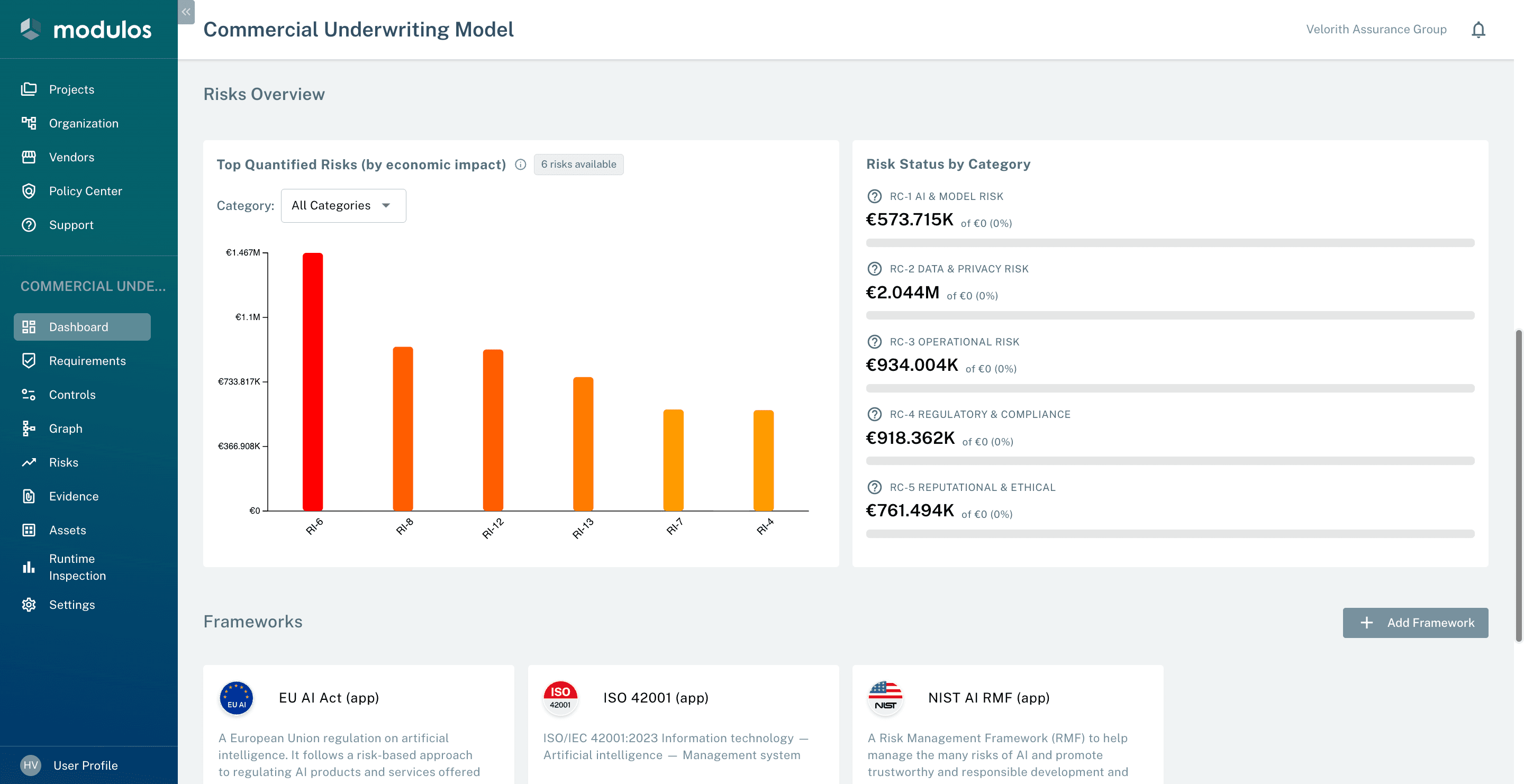

Quantify, scope, and prove every AI risk

Score AI risks in monetary terms. Hold them inside organization-wide limits. Map each one to the controls and evidence that mitigate it.

Top quantified risks

Every risk scored in monetary terms via Monte Carlo, scenario analysis, or manual entry. Bar height is € exposure.

Quantify risk in monetary terms

Express AI risk exposure in EUR, CHF, or USD. Four methods, including the Fermi Risk Agent, our AI-native flagship, feed one ledger of quantification runs. Modulos explicitly does not treat risk matrices as quantification.

- 15.05.26 · €517K · Risk Agent · Fermi · anchored on E-12 records exposed · 2 controls

- 11.05.26 · €41K · Monte Carlo

- 08.05.26 · €34K · Risk Agent · Fermi · anchored on MCF-4 active users · 3 controls

- 06.05.26 · €6.7K · Manual entry
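To make the Monte Carlo method concrete, here is a minimal sketch of how a monetary exposure can be simulated. It is an illustration only, not the Modulos implementation: the function name, the single-event-per-year model, the lognormal severity, and every parameter value are assumptions chosen for the example.

```python
import random
import statistics


def simulate_annual_loss(event_probability, severity_mu, severity_sigma,
                         trials=10_000, seed=0):
    """Simulate one-year losses in EUR (hypothetical model).

    Each trial is one simulated year: a loss event occurs with
    `event_probability`, and its size is drawn from a lognormal
    distribution. Years without an event contribute a loss of zero.
    """
    rng = random.Random(seed)  # fixed seed so a run can be reproduced
    losses = []
    for _ in range(trials):
        if rng.random() < event_probability:
            losses.append(rng.lognormvariate(severity_mu, severity_sigma))
        else:
            losses.append(0.0)
    losses.sort()
    return {
        "expected_loss": statistics.fmean(losses),
        "p95_loss": losses[int(0.95 * (trials - 1))],
    }


# Illustrative inputs only: 20% chance of an incident per year.
result = simulate_annual_loss(event_probability=0.2,
                              severity_mu=11.0, severity_sigma=0.8)
print(f"Expected annual loss: EUR {result['expected_loss']:,.0f}")
print(f"95th percentile loss: EUR {result['p95_loss']:,.0f}")
```

The output is a distribution summary rather than a High/Medium/Low label, which is what allows a run to sit in a ledger next to scenario analysis or manual entries.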

Risk Appetite & Limits

Hierarchical limits (organization, project, category) that sum to 100% of total appetite. Inconsistent limits block quantification until corrected.
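The consistency rule can be sketched as a simple tree check. This is a hedged illustration, assuming that each level's children are expressed as percentage shares of their parent's appetite and must add up to 100%; the `Limit` class and `find_inconsistent` function are hypothetical names, not part of the Modulos platform.

```python
from dataclasses import dataclass, field


@dataclass
class Limit:
    name: str
    share_pct: float  # assumed: share of the parent's appetite, in percent
    children: list = field(default_factory=list)


def find_inconsistent(limit, tolerance=1e-9):
    """Return the names of nodes whose children do not sum to 100%."""
    problems = []
    if limit.children:
        total = sum(child.share_pct for child in limit.children)
        if abs(total - 100.0) > tolerance:
            problems.append(limit.name)
        for child in limit.children:
            problems.extend(find_inconsistent(child, tolerance))
    return problems


# Hypothetical organization: the two projects only cover 90% of appetite.
org = Limit("organization", 100.0, [
    Limit("chatbot", 60.0, [Limit("privacy", 50.0), Limit("safety", 50.0)]),
    Limit("scoring-model", 30.0),
])
blocked = find_inconsistent(org)
print("Quantification blocked by:", blocked or "nothing")
```

In this sketch, a non-empty result is the signal that would block quantification until the shares are corrected.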

Risk value over time

Set a cadence (weekly, monthly, custom) and Modulos re-quantifies every risk in the project. Stable inputs reuse prior assumptions, so the trend reflects real change, not noise.
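One way to picture the reuse of stable inputs is a fingerprint comparison between scheduled runs. The sketch below is an assumption about how such a mechanism could work, not a description of the product: `fingerprint`, `requantify`, and the run dictionary are hypothetical, and for simplicity the whole prior result is reused when the inputs are unchanged.

```python
import hashlib
import json


def fingerprint(inputs):
    """Hash the inputs in a canonical form so equal inputs compare equal."""
    canonical = json.dumps(inputs, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def requantify(inputs, previous_run, estimate_fn):
    """Re-run the estimate only when the inputs have actually changed."""
    fp = fingerprint(inputs)
    if previous_run is not None and previous_run["fingerprint"] == fp:
        # Stable inputs: carry the prior result forward unchanged.
        return {**previous_run, "reused": True}
    return {"fingerprint": fp, "value_eur": estimate_fn(inputs), "reused": False}


# Hypothetical estimator: EUR 2 of exposure per active user.
def estimate(inputs):
    return inputs["active_users"] * 2.0


week_1 = requantify({"active_users": 1000}, None, estimate)
week_2 = requantify({"active_users": 1000}, week_1, estimate)  # unchanged
week_3 = requantify({"active_users": 1200}, week_2, estimate)  # changed
print([run["value_eur"] for run in (week_1, week_2, week_3)])
```

Because unchanged inputs never trigger a fresh estimate, any movement in the trend line corresponds to a change in the underlying data.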

Threat vector tracking

Each vector traces to its canonical reference: OWASP LLM, EU AI Act, NIST. Add your own.

Risk → Control → Evidence

Automated Risk Quantification

Point the Risk Agent at any risk. It inspects your code, logs, controls, and evidence, then produces a justified monetary estimate. Schedule it to run weekly — get a risk trend, not a risk snapshot.

Investigate

Pulls structural data from your projects — users, revenue, data volumes, frameworks mapped

Audit

Maps existing controls to threats and calculates a mitigation factor from your evidence and test results

Quantify

Runs Fermi estimation using platform data and industry benchmarks to produce a monetary risk value

Justify

Delivers a full analysis breakdown with sources, methodology, and reasoning you can hand to an auditor

Every run is logged with full reasoning. When the agent can't quantify, it tells you exactly what data is missing — no made-up numbers.
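The Quantify step and the missing-data behavior can be sketched as a small Fermi-style calculation. This is a simplified illustration under stated assumptions, not the Risk Agent's actual logic: the input names, the multiplicative formula, and the reading of the mitigation factor as the fraction of loss removed by controls are all hypothetical.

```python
REQUIRED_INPUTS = (
    "records_exposed",
    "annual_incident_probability",
    "cost_per_record_eur",
    "mitigation_factor",  # assumed: 0.0 = no mitigation, 1.0 = fully mitigated
)


def fermi_estimate(inputs):
    """Return a monetary estimate, or list the inputs that are missing."""
    missing = [key for key in REQUIRED_INPUTS if inputs.get(key) is None]
    if missing:
        # Refuse to guess: report what is needed instead of a number.
        return {"value_eur": None, "missing": missing}
    unmitigated = (inputs["records_exposed"]
                   * inputs["annual_incident_probability"]
                   * inputs["cost_per_record_eur"])
    residual = unmitigated * (1.0 - inputs["mitigation_factor"])
    return {"value_eur": residual, "unmitigated_eur": unmitigated, "missing": []}


# Illustrative numbers only.
complete = fermi_estimate({
    "records_exposed": 50_000,
    "annual_incident_probability": 0.05,
    "cost_per_record_eur": 150.0,
    "mitigation_factor": 0.4,
})
incomplete = fermi_estimate({"records_exposed": 50_000})

print(f"Residual exposure: EUR {complete['value_eur']:,.0f}")
print("Cannot quantify, missing:", incomplete["missing"])
```

Keeping the unmitigated figure alongside the residual one makes the effect of the mapped controls visible in the breakdown.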

Four Pillars. One Platform.

Discover more about the other pillars: Governance, Compliance and AI Agents.

Governance

Run AI governance like an operating system

Project dashboards, AI lifecycle tracking, ownership workflows, and complete audit trails.

Compliance

Multi-framework compliance without duplicate work

One control satisfies many frameworks. Evidence browser with full audit trail.

Agents

Human-in-the-loop AI agents for GRC work

Scout Assistant, evidence automation, control assessments — all with human oversight.

See Risk Quantification in Action

Book a demo to see how Modulos transforms AI governance from a compliance burden into a strategic value driver.